The Innovation Paradox

LAST UPDATED: April 4, 2026 at 11:56 AM

by Braden Kelley and Art Inteligencia

I. Introduction: The Tension Between Renewal and Waste

In the world of innovation, we often talk about the “fire” of creativity — the energy that drives us to build the next great breakthrough. But in the current industrial landscape, we must ask ourselves: are we stoking a sustainable Innovation Bonfire, or are we simply burning the furniture to keep the room warm for a single night?

Planned obsolescence has long been the silent engine of the consumer economy, a strategy designed to ensure that the products of today become the landfill of tomorrow. It creates a fundamental tension between the mechanical need for economic growth and the human-centered need for enduring value.

“To truly innovate for humanity, we must pivot from a strategy of deliberate failure to one of intentional resilience.”

As change leaders, we must recognize that planned obsolescence is an industrial-age relic masquerading as a modern innovation strategy. This article explores whether this cycle of constant replacement truly fuels progress or if it acts as a “wet blanket” that dampens our ability to solve the world’s most pressing, wicked problems.

II. The Case for the “Pro”: Obsolescence as a Catalyst for Speed

While it is easy to dismiss planned obsolescence as purely cynical, from a strategic standpoint, it has functioned as a powerful — if aggressive — accelerant for the adoption curve. By shortening the lifecycle of a product, organizations force a faster cadence of iteration. This “forced evolution” ensures that new technologies, safety standards, and efficiencies are pushed into the hands of users at a rate that a “buy-it-for-life” model simply couldn’t sustain.

Consider the following drivers that proponents argue fuel the innovation engine:

- R&D Capitalization: The consistent revenue generated by replacement cycles provides the massive capital reserves required for “Big Bang” breakthroughs. Without the “Small Bangs” of incremental sales, the long-term, high-risk research into materials science or AI might never be funded.

- The Velocity of “Innovation”: When a product is designed to be replaced, designers are freed from the “legacy trap.” They can experiment with radical new interfaces or hardware configurations, knowing that the next cycle provides an immediate opportunity to course-correct based on real-world human feedback.

- The Psychology of the “New”: In our work on Stoking Your Innovation Bonfire, we recognize that emotion is a primary driver of change. The “Fashion of Tech” creates a sense of momentum. This psychological pull toward the “New” keeps markets liquid and encourages a culture of constant curiosity and upgrade.

In this light, obsolescence isn’t just about things breaking; it’s about keeping the market in motion. It prevents stagnation by ensuring that the “Stable Spine” of our infrastructure is constantly being tested and refreshed by the latest “Modular Wings” of technological advancement.

III. The Case for the “Con”: The “Wet Blankets” of Planned Obsolescence

If innovation is a fire, planned obsolescence often acts as a massive “wet blanket” — smothering the very progress it claims to ignite. When we design for failure, we aren’t just creating a product; we are creating environmental friction. The “Invisible Drain” of e-waste and resource depletion represents a systemic failure that our current economic operating system is struggling to process.

From a human-centered design perspective, the downsides extend far beyond the landfill:

- The Erosion of Trust: A core pillar of Experience Design is the relationship between the brand and the human. When a user realizes a device was intentionally throttled or made unrepairable, it creates a “Customer Experience (CX) Betrayal.” This loss of trust is a psychological friction that makes future change adoption much harder.

- Innovation Fatigue: There is a limit to how much “New” a human can process. When consumers feel they are on a hamster wheel of meaningless upgrades, they develop an apathy toward genuine breakthroughs. We risk a future where the “latest” no longer feels like the “greatest” — it just feels like a chore.

- The Circular vs. Linear Conflict: Planned obsolescence is the hallmark of a linear economy (Take-Make-Waste). To move toward a sustainable future, innovation must embrace circularity, where products are designed as “Stable Spines” that can be updated, repaired, and kept in the ecosystem indefinitely.

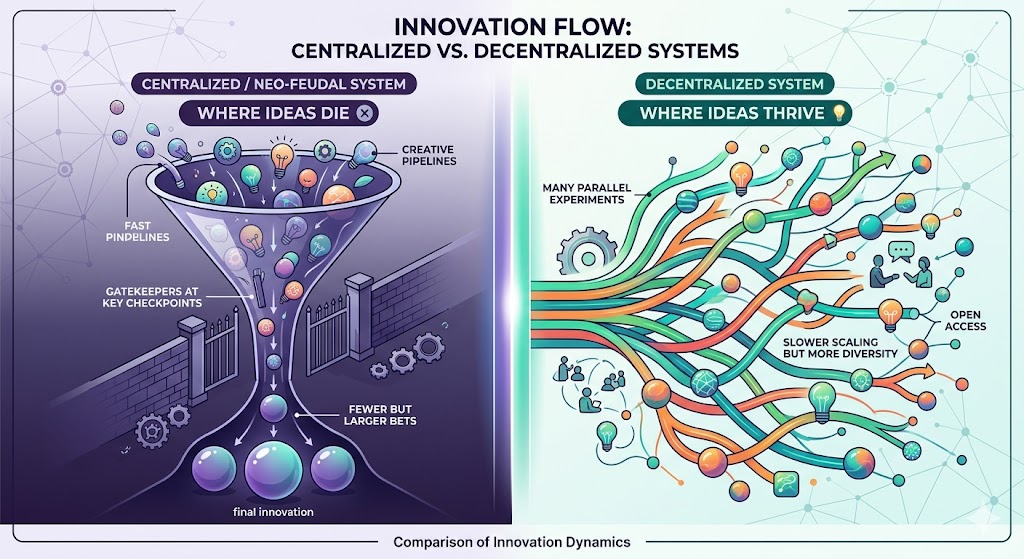

By focusing our creative energy on how to make things break, we divert talent away from solving “wicked problems” — like true energy efficiency or radical durability. We are effectively choosing Quantity of Sales over Quality of Impact, a trade-off that rarely benefits humanity in the long run.

IV. The Impact on Innovation: Quality vs. Quantity

One of the most dangerous side effects of planned obsolescence is how it reshapes the innovation mindset. When a company’s primary metric for success is a yearly replacement cycle, the engineering focus shifts from transformational leaps to incremental tweaks. We find ourselves trapped in a cycle of “Innovation Theater” — releasing shiny new features that mask the lack of fundamental progress.

The shift in focus creates several systemic challenges:

- The Maintenance Trap: In a human-centered world, we should be designing for longevity. However, planned obsolescence forces our best creative minds to spend their energy designing “points of failure” rather than points of resilience. This is a massive diversion of intellectual capital away from the wicked problems that actually matter to humanity.

- Incrementalism vs. Transformation: If you know your product only needs to last 24 months, why solve the difficult problems of battery degradation or heat management for the long term? The “yearly release” schedule creates a treadmill effect where we are running faster but not necessarily moving further.

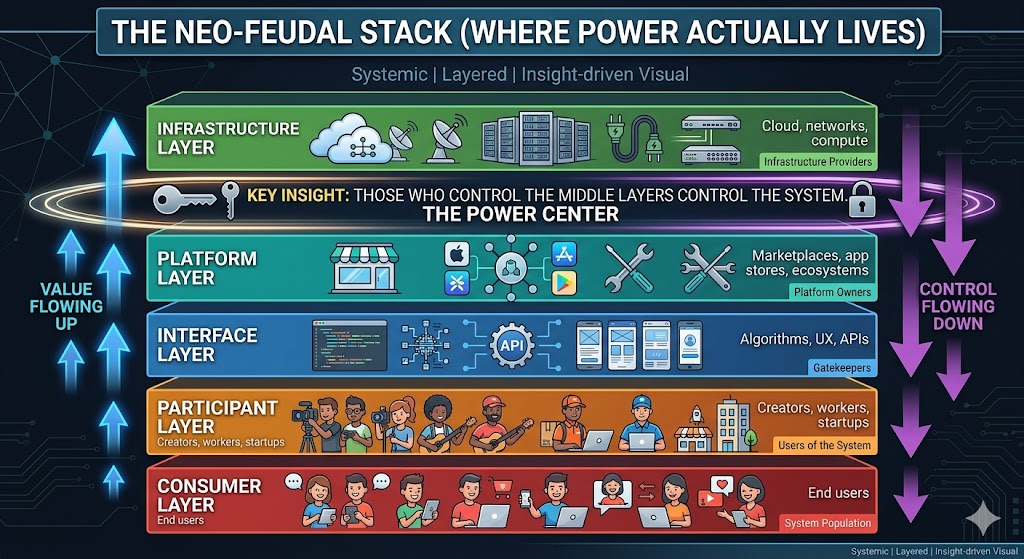

- Systems Thinking Failure: We often view a product as a standalone unit, but in a connected world, every device is a node in a larger infrastructure. When we design for a short lifecycle, we create fragility in the entire system. True innovation requires a Stable Spine Audit — evaluating whether the core of our solution is robust enough to support years of evolving “Modular Wings.”

To move the needle, we must stop measuring innovation by the volume of patents or the frequency of launches. Instead, we should measure the durability of the value created. If an innovation cannot stand the test of time, is it truly an innovation, or is it just a temporary distraction?

V. Is it Good for Humanity? (The Human-Centered Audit)

When we apply a Human-Centered Audit to planned obsolescence, the results are deeply conflicted. Innovation should serve as a tool for human empowerment, yet the cycle of forced replacement often creates new forms of dependency and inequality. We must ask: are we designing for the flourishing of the person, or simply for the health of the balance sheet?

To understand the true impact on humanity, we must look at three critical dimensions:

- The Ethics of Accessibility: Planned obsolescence often creates a “digital divide.” When software updates outpace hardware capabilities, we effectively lock out those who cannot afford to stay on the upgrade treadmill. If the tools for modern life — education, banking, and communication — require the latest hardware, then deliberate obsolescence becomes a barrier to global equity.

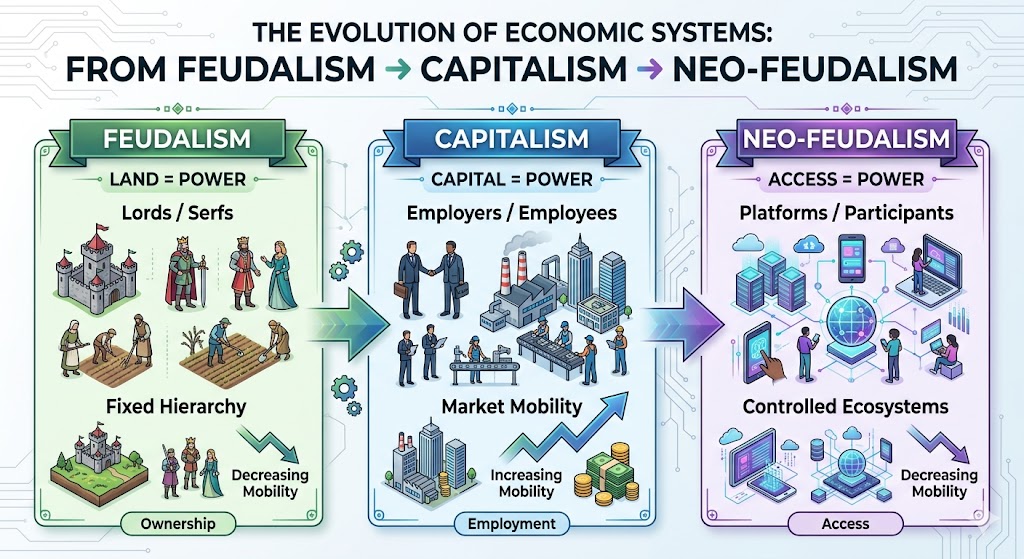

- Autonomy vs. Dependency: There is a subtle shift occurring from ownership to renting. Through unrepairable hardware and “software locks,” users lose the autonomy to maintain their own tools. This creates a fragile relationship in which the human is entirely dependent on the manufacturer, eroding the sense of agency that good design should foster.

- The Prosperity Balance: Proponents point to short-term job creation in manufacturing and the pressures of the “Great American Contraction” as reasons to keep the wheels turning. However, we must weigh these temporary economic gains against the long-term costs of environmental degradation and lost organizational agility. A society that spends its energy replacing what it already has is a society that isn’t moving forward.

Ultimately, an innovation strategy that relies on things breaking is fundamentally at odds with a Human-Centered philosophy. If our “Innovation Bonfire” requires us to constantly toss our previous achievements into the flames just to keep the fire going, we haven’t built a fire — we’ve built an incinerator.

VI. The Path Forward: From Obsolescence to Innovation

The shift from a Linear Economy to a Circular Economy requires more than just better recycling; it requires a fundamental redesign of our innovation frameworks. We must move toward a model of innovation in which the value of a product remains constant or even improves over time, rather than degrading by design.

To transition from a strategy of failure to a strategy of resilience, organizations should embrace three core principles:

- Designing for Durability: The next truly “disruptive” move in many industries isn’t adding a new sensor; it’s creating a product that lasts a decade. Durability is becoming a premium feature in a world of disposable goods. By focusing on high-quality materials and Human-Centered engineering, brands can build a legacy rather than just a quarterly report.

- The Modular Revolution: We must apply the “Stable Spine” and “Modular Wings” philosophy to hardware. Imagine a device where the core processor (the spine) is built to last, while the specific sensors or interface components (the wings) can be swapped out as technology advances. This allows for evolution without the need for total replacement.

- New KPIs for a New Era: We need to stop measuring success solely by unit sales. Forward-thinking companies are moving toward “Value-in-Use” and Experience Level Measures (XLMs). When a company is incentivized by how well a product performs over its entire lifecycle, the motivation to build in failure points disappears.

This isn’t just about “being green”; it’s about Organizational Agility. A company that doesn’t have to reinvent its basic hardware every twelve months can redirect its R&D energy toward solving the deep, systemic challenges that humanity actually faces. It’s time to stop stoking the bonfire with our own waste and start building a fire that truly illuminates the future.

VII. Conclusion: Stoking a Sustainable Flame

As we look toward the future of human-centered change, we must decide what kind of “Innovation Bonfire” we want to build. Is it a flash in the pan that requires the constant sacrifice of resources and consumer trust, or is it a steady, illuminating heat that powers real progress?

Planned obsolescence was a 20th-century solution to a 20th-century problem — the need for rapid industrial scale. But in an era defined by digital transformation and the “Great American Contraction,” the old rules no longer apply. To continue designing for failure is to ignore the wicked problems of our time: climate change, resource scarcity, and the erosion of human agency.

“The true measure of an innovation isn’t how many units we sold this year, but how much better the world is because that product exists ten years from now.”

My challenge to you — the executives, the designers, and the change agents — is this: Stop designing for the landfill. Start designing for the legacy. When we shift our focus from Obsolescence to Resilience, we don’t just save the planet; we save the very soul of innovation.

Let’s stop stoking the fire with our own waste and start building a future that is truly made to last.

Frequently Asked Questions

How does planned obsolescence impact human-centered innovation?

Planned obsolescence often acts as a “wet blanket” on true innovation by forcing creators to focus on incremental tweaks and deliberate failure points rather than solving “wicked problems.” From a human-centered design perspective, it erodes consumer trust and prioritizes short-term sales over long-term value and sustainability.

Can planned obsolescence ever be good for humanity?

Proponents argue it accelerates the adoption curve and provides the R&D capital necessary for major breakthroughs. However, a human-centered audit suggests these economic gains are often offset by environmental degradation, increased e-waste, and the creation of a “digital divide” where only the wealthy can afford to stay on the upgrade treadmill.

What is the alternative to planned obsolescence in design?

The primary alternative is moving toward a “Circular Economy” using a “Stable Spine” and “Modular Wings” philosophy. This involves designing products for durability and repairability, where core components last for years while specific features can be upgraded or replaced, shifting the focus from “quantity of sales” to “value-in-use.”

Image credits: Gemini

Content Authenticity Statement: The topic area, key elements to focus on, etc. were decisions made by Braden Kelley, with a little help from Gemini to clean up the article and add citations.

Sign up here to get Human-Centered Change & Innovation Weekly delivered to your inbox every week.

Drum roll please…