Building Teams Ready for Anything

GUEST POST from Art Inteligencia

For decades, we’ve defined high-performing teams by their collective talent, their competitive drive, or their relentless focus on execution. We’ve believed that success is a matter of gathering the smartest people in a room and demanding excellence. But as a human-centered change and innovation thought leader, I’ve seen time and again that this model is insufficient for the complexity of our modern world. The most resilient, innovative, and successful teams are not defined by individual brilliance, but by a shared sense of trust and vulnerability. Their secret weapon is a concept known as psychological safety, a foundational element that empowers people to take risks, speak up, and learn from mistakes without fear of judgment or reprisal.

Psychological safety is a shared belief held by members of a team that the team is safe for interpersonal risk-taking. In simple terms, it’s the feeling that you can be yourself, ask a “stupid” question, admit a mistake, or propose a wild idea without being shamed, ridiculed, or penalized. This isn’t a “soft” concept; it’s a hard, strategic capability. In a world where change is the only constant, teams must be able to experiment, give and receive honest feedback, and pivot with agility. None of this is possible in a fear-based environment. The human instinct to self-preserve, to avoid looking incompetent, is a powerful force. Without psychological safety, we self-censor, we withhold critical information, and we stick to the known, which is a sure-fire path to stagnation and irrelevance. Conversely, when psychological safety is high, a team can draw on everyone’s knowledge and ideas, and its capacity for innovation grows accordingly.

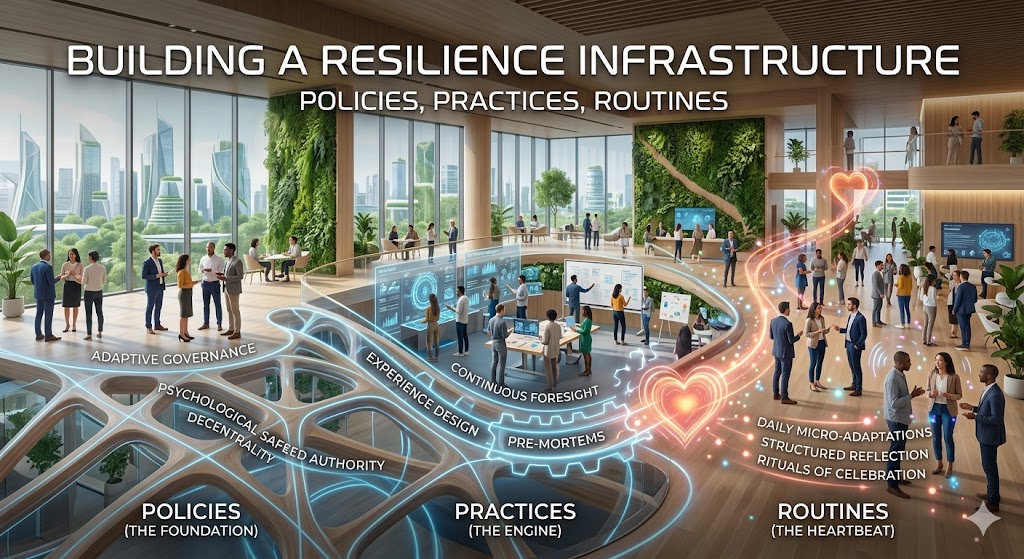

Cultivating a Culture of Safety: A Leader’s Blueprint

Building psychological safety is a leader’s most important job. It’s not about being “nice”; it’s about being intentional. Here are four essential practices for creating an environment where your team is ready for anything:

1. Frame the Work as a Learning Problem: In a complex world, there is no single right answer. Frame every challenge not just as a task to be executed, but as a hypothesis to be tested. This reframes failure as a source of valuable data and reframes mistakes as essential steps on the path to a solution.

2. Acknowledge Your Own Fallibility: Leaders must go first. When you admit a mistake, say “I don’t know,” or ask for help, you create a powerful permission structure for your team. This vulnerability signals that it’s okay for them to do the same, breaking down the fear of looking incompetent.

3. Practice Inclusive Inquiry: Instead of simply stating your opinion, ask questions. Actively seek out the opinions of quieter team members. Say things like, “What are we missing?” or “I want to hear from someone who disagrees with me.” This signals that diverse perspectives are not just welcome but essential.

4. Respond Constructively to Failure: When a project fails or a mistake is made, your response is everything. Avoid placing blame. Instead, lead with curiosity. Ask, “What did we learn from this?” and “How can we build a system to prevent this from happening again?” This turns a moment of potential crisis into a learning opportunity.

“Talent gets you on the field, but psychological safety is what allows you to win the game.” — Braden Kelley

Case Study 1: Pixar’s “Braintrust” – A Masterclass in Candor

The Challenge:

In the high-stakes world of animated filmmaking, a single creative misstep can lead to a disastrous flop. For Pixar, the challenge was to create a mechanism for frank, honest, and even brutal feedback on films in progress without crushing the creative spirit of the director and their team. A typical corporate review process would be too political and hierarchical for the level of candid feedback needed.

The Psychological Safety Solution:

Pixar’s solution was the Braintrust, an exclusive group of the company’s most accomplished directors and storytellers. This wasn’t a formal committee; it was a culture built on psychological safety. The core rules of the Braintrust are simple yet powerful: a director is never obligated to act on the feedback, and the group’s purpose is to help the film succeed, not to assert power. The feedback is always on the work, never the person. This deep, shared belief that everyone is there to help and that no one is judging personal worth allowed for a level of open, candid criticism that is almost unheard of in other creative industries. Directors could present their half-finished, deeply flawed films and receive honest input without fear of professional harm.

The Result:

The Braintrust is a key reason for Pixar’s long-term, unprecedented creative success. It is a living testament to the power of psychological safety. By building an environment where candor and vulnerability were not just tolerated but celebrated, Pixar created a collective intelligence that consistently elevated the quality of every film. They proved that honest feedback, delivered with a foundation of trust, is the ultimate driver of creative excellence.

Case Study 2: Volkswagen’s “Dieselgate” – The Cost of Silence

The Challenge:

In the years leading up to the “Dieselgate” scandal, Volkswagen was a highly centralized, hierarchical organization with a demanding culture of top-down perfection. Leaders set ambitious, often unrealistic, performance targets. The challenge was to meet a new set of strict emissions standards for their diesel vehicles, a goal that their engineering teams concluded they could not achieve within the time and budget they were given without compromising performance.

The Psychological Safety Failure:

In this fear-based environment, with a rigid emphasis on hierarchy and an intolerance for failure, employees were not psychologically safe to speak up. The engineers knew the emissions targets were unattainable, but they feared professional repercussions—demotion, firing, or public shaming—if they admitted failure. Instead of raising the impossible challenge to senior leadership, they chose to develop and install a “defeat device,” a software program designed to cheat on emissions tests. This was a direct, disastrous consequence of a culture that prioritized looking good over being honest and vulnerable.

The Result:

When the deception was discovered, it led to one of the biggest corporate scandals in history. The financial cost was in the tens of billions of dollars, but the damage to the company’s brand and reputation was incalculable. “Dieselgate” serves as a powerful cautionary tale. It shows that when psychological safety is absent, people will choose silence over speaking the truth, and a single, unaddressed problem can grow into a monumental crisis that threatens the very existence of the organization. It’s proof that a lack of psychological safety is not just a cultural problem; it’s a critical strategic risk.

Conclusion: The Ultimate Foundation for Innovation

Psychological safety is not a “nice-to-have.” It is the ultimate foundation for building teams that are resilient, adaptable, and ready for anything. It is the soil in which innovation grows, where creativity flourishes, and where people are empowered to be their best, most authentic selves. As leaders, our most important job is not to provide all the answers, but to create the environment where our teams feel safe enough to find them together.

In a world of constant change, the ability to learn and evolve is paramount. And learning only happens when we are willing to admit what we don’t know, to experiment without fear of failure, and to speak our minds without fear of judgment. The future belongs to the psychologically safe. Let’s start building it, one conversation and one act of vulnerability at a time.

Extra Extra: Futurology is not fortune telling. Futurists use a scientific approach to create their deliverables, but a methodology and tools like those in FutureHacking™ can empower anyone to engage in futurology themselves.

Image credit: Pexels

Sign up here to get Human-Centered Change & Innovation Weekly delivered to your inbox every week.
