by Braden Kelley

The boundary lines between named generations are a bit fuzzy, but the goal should always be to draw the boundary at an event significant enough to produce behavior changes in the new generation that are worth considering in strategy formation.

I believe we have arrived at such a point and that it is time for GenZ to cede the top of the strategy mountain to a new generation I call Generation AI (GenAI).

The dividing line for Generation AI falls around 2014. The people of GenAI are the first to grow up never knowing a world without easy access to generative artificial intelligence (AI) tools, tools that are beginning to transform their interactions with our institutions and with each other.

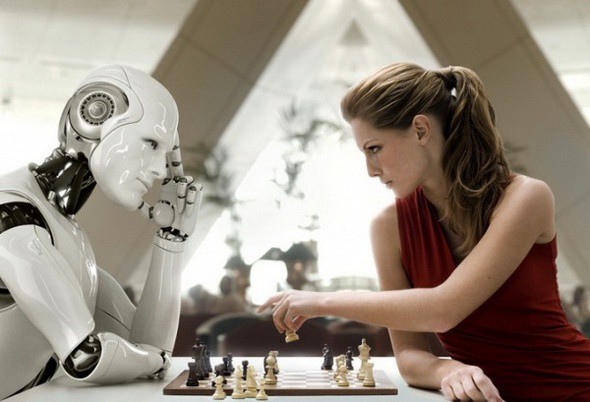

We have already seen professors and teachers having to police AI-generated school essays, while the rest of us try to cope with frighteningly realistic deepfake audio and video. But what other impacts on people’s behavior will we see as a result of the coming ubiquity of artificial intelligence?

It is important to remember that generative artificial intelligence is not really artificial intelligence but collective intelligence, informed by what we, the people, have contributed to the training/reference set. As such, these large language models predict the next word or recombine existing content based on whatever training set they have been exposed to. They are not creating original thought.

Generative AI is being built into nearly all of our existing software and cloud tools, and GenAI will grow up knowing only a reality in which every application and website they interact with has an AI component. Generation AI will never know a time when they could not ask an AI, in the same way that GenZ relies on social search and Gen X and Millennials assume search engines hold their answers.

Our brains are changing to focus more on processing and less on storage. These changes make us more capable, but more vulnerable too.

This new AI technology is a double-edged sword, and its effects could fall on either edge in different areas:

Option 1 – Best Case

- Generative AI will amplify creativity by encouraging the recombination of existing images, text, audio and video in new, inspiring ways, using the outputs of AI as inputs into human creativity

Option 2 – Worst Case

- Generative AI will reduce creativity because people will become reliant on artificial intelligence to create. The result is an echo chamber in which new content is made only from existing content, AI outputs become the only outputs, and people spend more time interacting with AIs than with other people

Which of these two outcomes of AI reliance do you see as more likely in the areas where you focus?

How do you see Generation AI impacting the direction of societies around the world?

Are you planning to add Generation AI to your marketing strategies and strategic planning for 2024 or beyond?

Reference

For reference, here is a timeline of previous American generations according to an article from NPR:

Though there is a consensus on the general time period for generations, there is not an agreement on the exact year that each generation begins and ends.

Generation Z – Born 2001-2013 (Age 10-22)

These kids were the first born with the Internet and are suspected to be the most individualistic and technology-dependent generation. Sometimes referred to as the iGeneration.

EDITOR’S NOTE: This description is erroneous; the differentiating factor of GenZ is that they experienced the rise of social media.

Millennials – Born 1980-2000 (Age 23-43)

They experienced the rise of the Internet, Sept. 11 and the wars that followed. Sometimes called Generation Y. Because of their dependence on technology, they are said to be entitled and narcissistic.

Generation X – Born 1965-1979 (Age 44-58)

They were originally called the baby busters because fertility rates fell after the boomers. As teenagers, they experienced the AIDS epidemic and the fall of the Berlin Wall. Sometimes called the MTV Generation, the “X” in their name refers to this generation’s desire not to be defined.

EDITOR’S NOTE: GenX also experienced the rise of the personal computer, and this has influenced how they parented a large portion of Millennials and GenZ.

Baby Boomers – Born 1943-1964 (Age 59-80)

The boomers were born during an economic and baby boom following World War II. These hippie kids protested against the Vietnam War and participated in the civil rights movement, all with rock ‘n’ roll music blaring in the background.

Silent Generation – Born 1925-1942 (Age 81-98)

They were too young to see action in World War II and too old to participate in the fun of the Summer of Love. This label describes their conformist tendencies and belief that following the rules was a sure ticket to success.

GI Generation – Born 1901-1924 (Age 99+)

They were teenagers during the Great Depression and fought in World War II. Sometimes called the greatest generation (following a book by journalist Tom Brokaw) or the swing generation because of their jazz music.

If you’d like to sign up to learn more about my new FutureHacking™ methodology and set of tools, go here.

Sign up here to join 17,000+ leaders getting Human-Centered Change & Innovation Weekly delivered to their inbox every week.