Designing Systems That Know They Might Be Wrong

LAST UPDATED: March 6, 2026 at 5:07 PM

GUEST POST from Art Inteligencia

I. Introduction: The Next Frontier in Responsible Innovation

As artificial intelligence and algorithmic systems take on increasingly consequential roles in our organizations and societies, a new challenge is emerging. The most dangerous systems are not necessarily the ones that make mistakes; they are the ones that operate with complete confidence that they are right.

Innovation has always involved uncertainty. But when technology begins influencing decisions about hiring, healthcare, financial access, mobility, and public policy, uncertainty is no longer just a business risk—it becomes a moral one.

This is where a new concept begins to take shape: Moral Uncertainty Engines.

A Moral Uncertainty Engine is a decision architecture designed to recognize that ethical clarity is often elusive. Instead of embedding a single moral framework into a system, these engines evaluate decisions through multiple ethical lenses, quantify disagreements between them, and surface those tensions for human oversight.

In other words, they are systems designed not just to make decisions, but to acknowledge when the ethical landscape is ambiguous.

This represents a profound shift in how we design intelligent systems. For decades, the goal of technology was optimization—finding the single best answer. But the reality of human values is messier. What maximizes efficiency may conflict with fairness. What benefits the majority may harm the vulnerable. What is legal may not always be ethical.

Moral Uncertainty Engines do not attempt to eliminate these tensions. Instead, they illuminate them.

In doing so, they create the possibility for organizations to move beyond simplistic “ethical AI” checklists toward something far more powerful: systems that actively help leaders navigate complex moral tradeoffs.

Because the future of responsible innovation will not belong to the organizations that claim to have solved ethics. It will belong to the ones humble enough to admit they haven’t—and wise enough to design systems that help them think through it anyway.

II. What Is a Moral Uncertainty Engine?

Before we can explore the potential of Moral Uncertainty Engines, we need a clear understanding of what they are and why they matter. At their core, Moral Uncertainty Engines are decision-support systems designed to recognize that ethical certainty is often an illusion.

Traditional algorithms are built to optimize for a defined objective—maximize profit, minimize cost, increase efficiency, or predict outcomes with the highest statistical accuracy. But real-world decisions rarely involve just one objective. They involve competing values, conflicting priorities, and ethical tradeoffs that cannot always be resolved with a single formula.

A Moral Uncertainty Engine is a system designed to evaluate decisions through multiple ethical frameworks simultaneously and to acknowledge when those frameworks disagree.

Instead of embedding a single moral rule set into a system, these engines assess potential actions across different ethical perspectives and quantify the level of uncertainty or conflict between them. The result is not necessarily a single definitive answer, but a clearer picture of the ethical terrain surrounding a decision.

In practice, a Moral Uncertainty Engine typically performs several key functions:

- Multi-framework evaluation – analyzing decisions through several ethical lenses rather than relying on a single rule set.

- Ethical tradeoff analysis – identifying where different value systems produce conflicting recommendations.

- Uncertainty scoring – measuring how confident the system can be that a given course of action is morally acceptable.

- Transparency and explanation – making visible the reasoning behind recommendations.

- Human escalation triggers – flagging decisions where ethical disagreement is high and human judgment is required.

To understand how this works, consider four widely used ethical frameworks in moral reasoning. A Moral Uncertainty Engine might evaluate a decision using several of these simultaneously:

- Utilitarianism – Which option produces the greatest overall good?

- Rights-based ethics – Does the decision violate fundamental rights?

- Justice and fairness – Are harms and benefits distributed equitably?

- Care ethics – How does the decision affect the most vulnerable stakeholders?

When these frameworks align, the system can move forward with confidence. But when they conflict—as they often do—the engine highlights the disagreement and surfaces the ethical tension instead of burying it.

This is the key insight behind Moral Uncertainty Engines: ethical complexity should not be hidden inside algorithms. It should be surfaced, measured, and navigated deliberately.

In many ways, these systems represent the next step in the evolution of responsible innovation. Rather than pretending that technology can eliminate moral ambiguity, they acknowledge that ambiguity is part of the landscape—and they help leaders make better decisions within it.

III. Why Moral Uncertainty Matters Now

The concept of Moral Uncertainty Engines might sound theoretical at first, but the forces making them necessary are already here. As organizations deploy increasingly autonomous technologies and algorithmic decision systems, they are encountering ethical dilemmas at a scale and speed that traditional governance structures were never designed to handle.

In the past, ethical decisions were typically made by humans, often slowly and with room for debate. Today, many of those same decisions are being influenced—or outright determined—by automated systems operating in milliseconds.

That shift creates a fundamental challenge: machines are excellent at optimizing defined objectives, but they struggle when the objectives themselves are morally contested.

AI Systems Are Increasingly Making Moral Decisions

Consider how many domains already rely on algorithmic decision-making:

- Autonomous vehicles determining how to react in unavoidable accident scenarios

- Healthcare systems prioritizing patients for scarce treatments

- Hiring algorithms screening job candidates

- Financial models determining who receives loans or credit

- Content moderation systems deciding what speech is allowed online

Each of these systems contains embedded value judgments—whether explicitly designed or not. The problem is that most organizations treat these judgments as technical questions rather than ethical ones.

There Is No Universal Ethical Consensus

Humans themselves rarely agree on the “correct” moral answer in complex situations. Different cultures, organizations, and individuals prioritize different values. Some emphasize maximizing overall benefit, while others prioritize protecting individual rights or safeguarding vulnerable populations.

When technology is designed around a single ethical assumption, it risks imposing that value system invisibly and at scale.

Moral Uncertainty Engines acknowledge this reality by recognizing that ethical frameworks often produce conflicting recommendations. Instead of pretending consensus exists, they surface the disagreement so that organizations can navigate it deliberately.

The Risk of Moral Overconfidence

Perhaps the greatest danger in modern algorithmic systems is not error—it is overconfidence. Many AI systems produce outputs that appear authoritative, even when the underlying ethical reasoning is incomplete, biased, or based on questionable assumptions.

This can create what might be called moral automation bias, where humans defer to algorithmic recommendations simply because they appear objective or mathematically grounded.

Moral Uncertainty Engines introduce a critical counterbalance: they explicitly communicate when a decision is ethically ambiguous, contested, or uncertain.

The Innovation Opportunity

Organizations that learn how to operationalize moral uncertainty will gain an important advantage. They will be better equipped to:

- Build trust with customers and stakeholders

- Navigate regulatory scrutiny

- Avoid reputational crises driven by opaque algorithms

- Make more resilient long-term decisions

In other words, acknowledging ethical uncertainty is not a weakness. It is a capability—one that responsible innovators will increasingly need as technology becomes more powerful and more deeply embedded in human lives.

IV. How Moral Uncertainty Engines Work

To understand the potential of Moral Uncertainty Engines, it helps to look at how such a system might actually function in practice. While the concept is still emerging, the underlying architecture draws from fields like decision science, AI safety, machine ethics, and risk management.

At a high level, a Moral Uncertainty Engine acts as a layered decision-support system. Rather than producing a single optimized answer, it evaluates potential actions through multiple ethical perspectives and identifies where those perspectives align—or conflict.

A simplified architecture typically includes four key layers.

Layer 1: Situation Awareness

Every ethical decision begins with context. The system first gathers relevant information about the situation, including:

- The stakeholders involved

- The potential consequences of different actions

- Legal or regulatory constraints

- The scale and reversibility of potential harm

This layer ensures that the system understands the environment in which a decision is being made before attempting to evaluate its ethical implications.
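To make this layer a little more concrete, here is a minimal sketch of how the gathered context might be represented. Everything in it (the `Situation` class, the `Reversibility` enum, and the field names) is a hypothetical illustration, not a description of any existing system.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Tuple


class Reversibility(Enum):
    """How easily the harm from a decision could be undone (illustrative scale)."""
    REVERSIBLE = "reversible"
    PARTIALLY_REVERSIBLE = "partially_reversible"
    IRREVERSIBLE = "irreversible"


@dataclass
class Situation:
    """Context collected before any ethical evaluation takes place."""
    stakeholders: Tuple[str, ...]
    # Maps each candidate action to a short description of its likely consequences.
    consequences: Dict[str, str]
    legal_constraints: Tuple[str, ...] = ()
    people_affected: int = 0
    reversibility: Reversibility = Reversibility.REVERSIBLE

    def actions(self) -> List[str]:
        """Candidate actions are the keys of the consequences map, in sorted order."""
        return sorted(self.consequences)


loan_case = Situation(
    stakeholders=("applicant", "lender"),
    consequences={
        "deny": "applicant loses access to credit",
        "approve": "lender takes on default risk",
    },
    people_affected=1,
    reversibility=Reversibility.PARTIALLY_REVERSIBLE,
)
print(loan_case.actions())
```

The point of a structure like this is simply that the later layers receive stakeholders, consequences, constraints, and reversibility explicitly, rather than inferring them.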

Layer 2: Ethical Framework Evaluation

Next, the system analyzes the possible courses of action through multiple ethical frameworks. Each framework evaluates the decision according to its own principles and priorities.

For example:

- Utilitarian perspective: Which option produces the greatest overall benefit?

- Rights-based perspective: Does any option violate fundamental rights?

- Justice perspective: Are harms and benefits distributed fairly?

- Care perspective: How are vulnerable stakeholders affected?

Each framework generates its own assessment of the available choices.
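One way to picture this layer is as a set of independent scoring functions, one per framework, each rating every option on a 0-to-1 scale. The sketch below assumes that each option has already been described with a few hand-assigned features; the feature names, the scoring rules, and the example values are all hypothetical and far simpler than real ethical reasoning.

```python
from typing import Callable, Dict

# An option is described by hand-assigned features in [0, 1] (hypothetical).
Option = Dict[str, float]
Framework = Callable[[Option], float]


def utilitarian(option: Option) -> float:
    """Higher total benefit scores higher."""
    return option["total_benefit"]


def rights_based(option: Option) -> float:
    """Any rights violation scores zero, otherwise one."""
    return 0.0 if option["violates_rights"] > 0 else 1.0


def justice(option: Option) -> float:
    """A smaller gap in how benefits are distributed scores higher."""
    return 1.0 - option["benefit_gap"]


def care(option: Option) -> float:
    """Less harm to vulnerable stakeholders scores higher."""
    return 1.0 - option["harm_to_vulnerable"]


FRAMEWORKS: Dict[str, Framework] = {
    "utilitarian": utilitarian,
    "rights_based": rights_based,
    "justice": justice,
    "care": care,
}


def evaluate(options: Dict[str, Option]) -> Dict[str, Dict[str, float]]:
    """Return framework -> option -> score, keeping each assessment separate."""
    return {
        name: {opt_name: score(opt) for opt_name, opt in options.items()}
        for name, score in FRAMEWORKS.items()
    }


options = {
    "A": {"total_benefit": 0.75, "violates_rights": 1.0,
          "benefit_gap": 0.5, "harm_to_vulnerable": 0.5},
    "B": {"total_benefit": 0.5, "violates_rights": 0.0,
          "benefit_gap": 0.25, "harm_to_vulnerable": 0.25},
}
scores = evaluate(options)
```

Keeping the per-framework scores separate, rather than summing them immediately, is what allows the later layers to see where the frameworks disagree.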

Layer 3: Moral Aggregation

Once the frameworks have evaluated the options, the system compares their recommendations. In some cases, the frameworks may converge on a similar outcome. In others, they may strongly disagree.

Several approaches can be used to combine these evaluations, including weighted voting models, scenario simulations, or expected moral value calculations. The goal is not necessarily to produce a single definitive answer, but to understand the balance of ethical considerations across the frameworks.
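As a rough illustration of two of these approaches, the sketch below treats "expected moral value" as a credence-weighted average of framework scores, and "weighted voting" as each framework casting its credence for its favorite option. Both readings, the function names, and the numbers are assumptions made for the example.

```python
from typing import Dict

Scores = Dict[str, Dict[str, float]]  # framework -> option -> score in [0, 1]


def expected_moral_value(scores: Scores, credences: Dict[str, float]) -> Dict[str, float]:
    """Credence-weighted average score for each option."""
    total = sum(credences[f] for f in scores)
    if total <= 0:
        raise ValueError("credences must sum to a positive number")
    options = list(next(iter(scores.values())))
    return {
        o: sum(credences[f] * scores[f][o] for f in scores) / total
        for o in options
    }


def weighted_vote(scores: Scores, credences: Dict[str, float]) -> Dict[str, float]:
    """Each framework gives its full credence to its top-scoring option."""
    tally: Dict[str, float] = {}
    for framework, per_option in scores.items():
        favorite = max(per_option, key=lambda o: per_option[o])
        tally[favorite] = tally.get(favorite, 0.0) + credences[framework]
    return tally


# Hypothetical scores and credences (how much weight each framework is given).
scores = {
    "utilitarian": {"A": 0.75, "B": 0.5},
    "rights_based": {"A": 0.0, "B": 1.0},
    "justice": {"A": 0.75, "B": 0.5},
}
credences = {"utilitarian": 0.5, "rights_based": 0.25, "justice": 0.25}

emv = expected_moral_value(scores, credences)   # {"A": 0.5625, "B": 0.625}
votes = weighted_vote(scores, credences)        # {"A": 0.75, "B": 0.25}
```

In this example the two methods point in opposite directions: the vote favors A, while the weighted average favors B because the rights-based framework objects to A so strongly. That difference is itself a useful signal that the choice of aggregation method is a value judgment, not a technical detail.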

Layer 4: Uncertainty and Escalation

The final layer measures how much disagreement exists between the ethical perspectives. If the frameworks align strongly, the system may proceed with a recommendation. If they diverge significantly, the system can flag the decision as ethically uncertain.

At this point, several actions may occur:

- The system provides an explanation of the ethical tradeoffs

- A confidence or uncertainty score is generated

- The decision is escalated to human oversight

This is the core value of a Moral Uncertainty Engine. Instead of hiding ethical tension behind an optimized output, it reveals the complexity of the decision and invites human judgment where it matters most.
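A minimal sketch of the uncertainty-and-escalation step described above might look like the following. It assumes disagreement is measured as the spread between the highest and lowest framework scores for the leading option, and it uses an arbitrary threshold; both choices, along with the `Review` class and `review` function, are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict

Scores = Dict[str, Dict[str, float]]  # framework -> option -> score in [0, 1]

ESCALATION_THRESHOLD = 0.5  # hypothetical cut-off; in practice this is a policy choice


@dataclass
class Review:
    recommended: str
    disagreement: float
    escalate: bool
    explanation: str


def review(scores: Scores, threshold: float = ESCALATION_THRESHOLD) -> Review:
    """Pick the option with the best average score, then measure how contested it is."""
    options = list(next(iter(scores.values())))
    mean = {o: sum(s[o] for s in scores.values()) / len(scores) for o in options}
    best = max(options, key=lambda o: mean[o])

    # How each framework rates the leading option.
    ratings = {framework: s[best] for framework, s in scores.items()}
    high = max(ratings, key=lambda f: ratings[f])
    low = min(ratings, key=lambda f: ratings[f])
    disagreement = ratings[high] - ratings[low]

    explanation = (
        f"Option {best} is rated highest by {high} ({ratings[high]:.2f}) "
        f"and lowest by {low} ({ratings[low]:.2f})."
    )
    return Review(best, disagreement, disagreement > threshold, explanation)


contested = review({
    "utilitarian": {"A": 1.0, "B": 0.5},
    "rights_based": {"A": 0.0, "B": 0.5},
    "justice": {"A": 1.0, "B": 0.25},
})
aligned = review({
    "utilitarian": {"A": 0.75, "B": 0.25},
    "rights_based": {"A": 1.0, "B": 0.5},
})
```

Here the contested case is escalated with an explanation naming the dissenting framework, while the aligned case proceeds as a recommendation.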

In many ways, these systems function less like automated decision-makers and more like ethical copilots—tools that help organizations think more clearly about the moral consequences of their choices.

V. Case Study: Autonomous Vehicles and the Trolley Problem

Few examples illustrate the challenge of moral uncertainty more clearly than autonomous vehicles. When self-driving systems operate on public roads, they must continuously make decisions that involve safety tradeoffs. Most of the time these choices are routine—slow down, change lanes, maintain distance. But in rare circumstances, a vehicle may face an unavoidable accident scenario where harm cannot be completely prevented.

These moments resemble the classic ethical thought experiment known as the “trolley problem,” where a decision must be made between two outcomes, each involving some form of harm. While philosophers have debated such scenarios for decades, autonomous vehicle developers must translate those debates into operational decisions inside real-world systems.

The difficulty is that different ethical frameworks often produce different answers. A strictly utilitarian approach might prioritize minimizing total casualties. A rights-based perspective might argue that intentionally choosing to harm one person to save others violates fundamental moral principles. A fairness perspective might question whether certain groups are systematically placed at greater risk.

Many early attempts to address these questions focused on encoding a single rule or priority structure into the vehicle’s decision logic. But this approach assumes that there is one universally acceptable ethical answer—an assumption that rarely holds across cultures, legal systems, or public opinion.

A Moral Uncertainty Engine offers a different approach. Instead of hard-coding a single moral rule, the system evaluates potential actions across multiple ethical frameworks and identifies where they agree and where they conflict.

For example, the system might:

- Analyze the scenario from a utilitarian perspective focused on minimizing total harm

- Evaluate whether any potential action violates protected rights

- Assess whether the risks are being distributed fairly among stakeholders

If these frameworks converge on the same outcome, the system can act with greater confidence. If they diverge significantly, the vehicle may default to a predefined safety posture—such as minimizing speed and impact energy—rather than making an ethically aggressive tradeoff.

More importantly, the decision framework itself becomes transparent and auditable. Engineers, regulators, and the public can examine how ethical considerations were evaluated rather than treating the system as a black box.
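The converge-or-fall-back behavior described above can be sketched in a few lines. This is a toy illustration only, not how any real vehicle controller works; the maneuver names, the `SAFE_DEFAULT` constant, and the agreement rule are all assumptions.

```python
from collections import Counter
from typing import Dict

Scores = Dict[str, Dict[str, float]]  # framework -> maneuver -> score in [0, 1]

# Hypothetical name for the predefined safety posture (minimize speed and impact energy).
SAFE_DEFAULT = "brake_in_lane"


def choose_maneuver(scores: Scores, min_agreement: float = 1.0) -> str:
    """Act on the frameworks' shared favorite, otherwise fall back to the safe default."""
    favorites = [max(per, key=lambda m: per[m]) for per in scores.values()]
    top, count = Counter(favorites).most_common(1)[0]
    agreement = count / len(favorites)
    return top if agreement >= min_agreement else SAFE_DEFAULT


converging = {
    "utilitarian": {"slow_and_stay": 0.75, "swerve_left": 0.5},
    "rights_based": {"slow_and_stay": 1.0, "swerve_left": 0.0},
    "fairness": {"slow_and_stay": 0.75, "swerve_left": 0.25},
}
diverging = {
    "utilitarian": {"slow_and_stay": 0.5, "swerve_left": 0.75},
    "rights_based": {"slow_and_stay": 1.0, "swerve_left": 0.0},
    "fairness": {"slow_and_stay": 0.75, "swerve_left": 0.25},
}
print(choose_maneuver(converging))  # slow_and_stay
print(choose_maneuver(diverging))   # brake_in_lane
```

Because the rule and its threshold are explicit, an auditor can see exactly when the system acted on a shared recommendation and when it fell back to the default.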

The lesson from autonomous vehicles extends far beyond transportation. As technology becomes increasingly embedded in complex human environments, organizations will need systems that can recognize ethical tension instead of pretending it doesn’t exist.

Moral Uncertainty Engines provide a path toward that future—one where intelligent systems are designed not only to act, but to reflect the moral complexity of the world they operate within.

VI. Case Study: AI Medical Triage and the Ethics of Scarcity

Healthcare provides one of the most powerful real-world examples of why moral uncertainty matters. Medical systems regularly face situations where resources are limited and difficult prioritization decisions must be made. During public health crises, such as pandemics, these tradeoffs can become especially stark.

Hospitals may need to decide how to allocate ventilators, ICU beds, specialized treatments, or transplant organs when demand exceeds supply. Historically, these decisions have been guided by medical ethics boards, physician judgment, and carefully developed triage protocols. Increasingly, however, algorithmic systems are being introduced to help manage these decisions at scale.

Many triage algorithms are designed to optimize measurable outcomes such as survival probability or expected life-years saved. While these metrics may appear objective, they can create serious ethical tensions when translated into real-world policy.

For example, prioritizing expected life-years may unintentionally disadvantage older patients. Models that rely heavily on historical health data may penalize individuals from underserved communities who have historically received less access to preventative care. Systems designed purely around statistical survival probabilities may overlook broader ethical considerations about fairness, dignity, or social vulnerability.

This is precisely the kind of scenario where a Moral Uncertainty Engine could provide meaningful support.

Instead of optimizing for a single metric, the system evaluates triage decisions through several ethical perspectives simultaneously. A utilitarian framework may prioritize maximizing the number of lives saved. A justice-based framework may emphasize equitable access across demographic groups. A care-based framework may highlight the needs of the most vulnerable patients.

When these perspectives align, the system can offer a strong recommendation. But when they conflict—as they often do in healthcare—the engine surfaces that conflict rather than hiding it behind a numerical score.

The result is not an automated moral verdict. Instead, clinicians and ethics boards receive a clearer picture of the ethical tradeoffs embedded in each decision. The system may present alternative allocation scenarios, highlight potential bias risks, or flag cases that require human deliberation.

In this way, the technology functions less as a replacement for human judgment and more as a decision companion. It expands the visibility of ethical consequences while preserving the role of human responsibility.
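To illustrate what "presenting alternative allocation scenarios" could mean, the sketch below ranks a few fictional patients under two simplified criteria and lists the patients whose allocation depends on which criterion is used. The patient data, the two criteria, and all names are invented for the example and are not drawn from any clinical protocol.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Patient:
    pid: str
    survival_gain: float  # hypothetical: gain in survival probability if treated
    vulnerability: float  # hypothetical: 0 (low) to 1 (high) vulnerability index


# Each framework is reduced to a single ranking key (a deliberate oversimplification).
CRITERIA: Dict[str, Callable[[Patient], float]] = {
    "utilitarian": lambda p: p.survival_gain,
    "care": lambda p: p.vulnerability,
}


def allocation_scenarios(patients: List[Patient], beds: int) -> Dict[str, List[str]]:
    """For each framework, list the patients who would receive one of the beds."""
    return {
        name: [p.pid for p in sorted(patients, key=key, reverse=True)[:beds]]
        for name, key in CRITERIA.items()
    }


def contested_patients(scenarios: Dict[str, List[str]]) -> List[str]:
    """Patients selected under some frameworks but not all: candidates for human review."""
    selections = [set(ids) for ids in scenarios.values()]
    return sorted(set.union(*selections) - set.intersection(*selections))


patients = [
    Patient("P1", survival_gain=0.5, vulnerability=0.25),
    Patient("P2", survival_gain=0.75, vulnerability=0.5),
    Patient("P3", survival_gain=0.25, vulnerability=1.0),
]
scenarios = allocation_scenarios(patients, beds=2)
flagged = contested_patients(scenarios)
```

With two beds, both criteria select P2, but they disagree about P1 and P3, so those two cases are the ones surfaced for deliberation by clinicians or an ethics board.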

Healthcare leaders already recognize that medical decisions involve more than statistics. Moral Uncertainty Engines simply help bring that ethical complexity into the design of the systems that increasingly shape those decisions.

VII. Leading Companies and Startups Exploring Moral Uncertainty

Moral Uncertainty Engines are still an emerging concept, but the foundational components of this category are already being developed across the technology ecosystem. Large technology firms, AI safety organizations, governance platforms, and startups focused on responsible AI are all contributing pieces of what could eventually become full ethical decision infrastructures.

While few organizations are explicitly using the term “Moral Uncertainty Engine,” many are working on the critical building blocks: AI alignment systems, ethical reasoning frameworks, transparency tools, and governance platforms designed to ensure responsible decision-making.

Large Technology Companies

Several major technology companies are investing heavily in AI alignment and responsible innovation. Their research programs are exploring ways to ensure that increasingly autonomous systems operate within acceptable ethical boundaries.

- OpenAI – Research into alignment methods such as reinforcement learning from human feedback and systems designed to incorporate human values into AI behavior.

- Google DeepMind – Work on AI safety, scalable oversight, and rule-based approaches to guiding model behavior.

- Microsoft – Development of responsible AI frameworks, governance tools, and organizational guidelines for ethical AI deployment.

These companies are helping to define the infrastructure that future ethical decision systems will rely upon.

Emerging Startups

A growing number of startups are focusing specifically on governance, auditing, and ethical oversight for AI systems. These organizations are building platforms that help companies monitor algorithmic behavior, detect bias, and ensure compliance with evolving regulatory standards.

- Credo AI – Provides governance platforms designed to help organizations operationalize responsible AI practices.

- Holistic AI – Offers tools for auditing AI systems, identifying bias, and evaluating risk across machine learning models.

- CIRIS – Focuses on runtime governance layers designed to help organizations manage the behavior of AI agents in production environments.

These companies are not yet full Moral Uncertainty Engines, but they are building the monitoring and governance layers that such systems will likely require.

Academic and Research Institutions

Some of the most important advances in machine ethics and moral decision systems are emerging from research institutions exploring how ethical reasoning can be integrated into AI architectures.

- Stanford Human-Centered AI

- MIT Media Lab

- Oxford’s AI safety and governance research community

Researchers in these communities are experimenting with methods for translating ethical theory into operational systems capable of evaluating tradeoffs, measuring moral uncertainty, and providing transparent reasoning.

Taken together, these organizations represent the early ecosystem surrounding what could become one of the most important innovation categories of the next decade: technologies designed not just to make decisions, but to help society navigate the moral complexity that accompanies them.

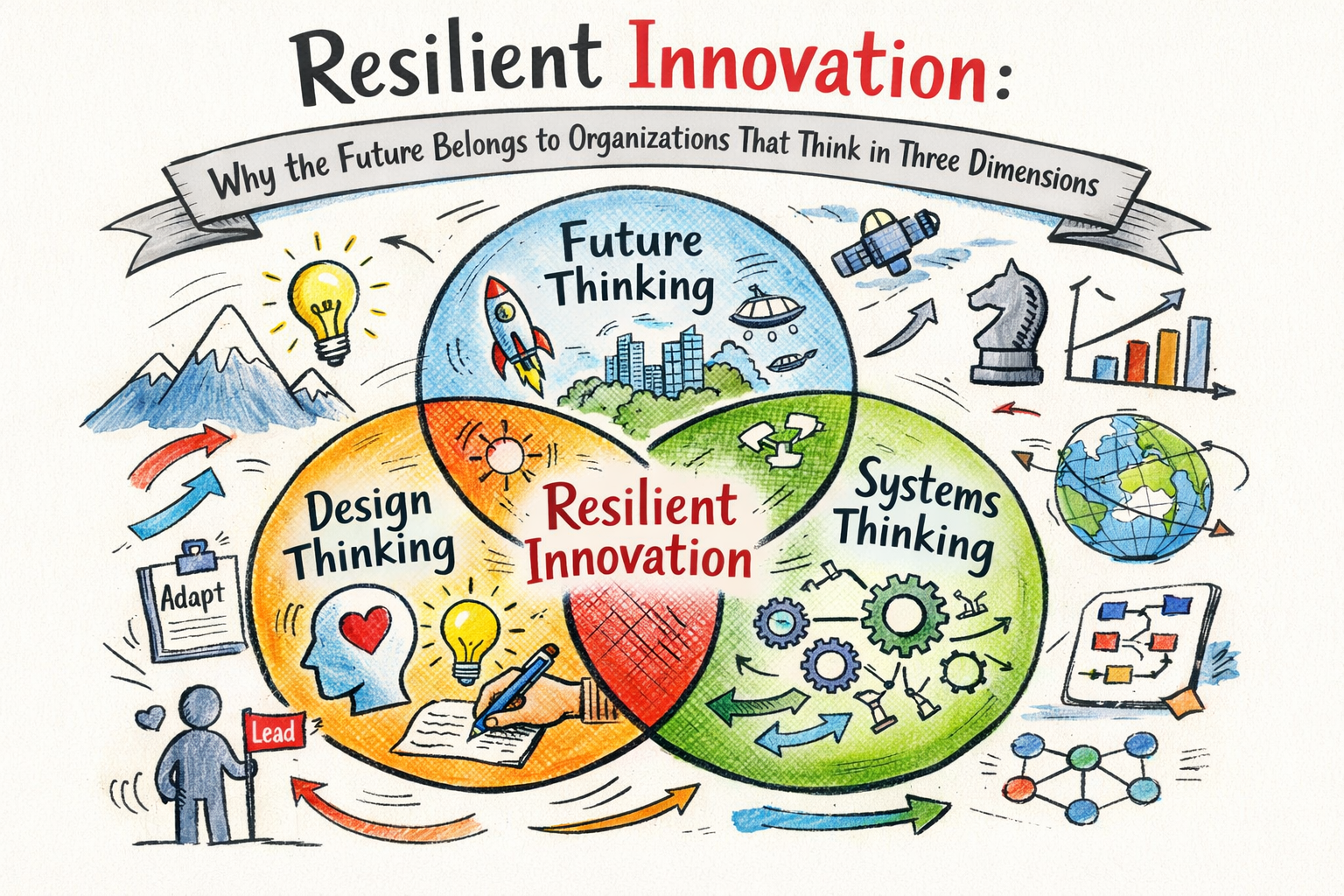

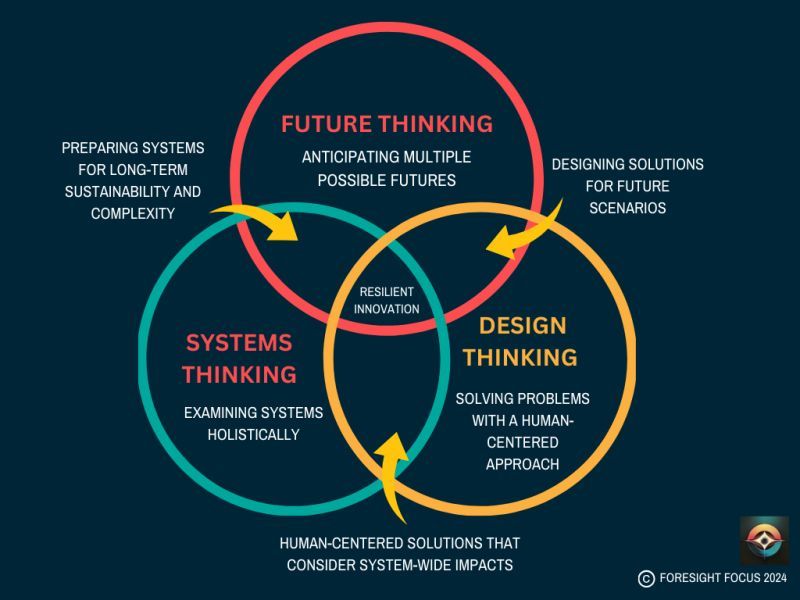

VIII. The Innovation Opportunities

If Moral Uncertainty Engines sound like a niche academic concept today, history suggests that may not remain the case for long. Many of the most important innovation categories begin as abstract ideas before evolving into entire industries. Cloud computing, cybersecurity, and digital trust platforms all followed similar paths.

As AI systems become more deeply embedded in critical decisions, the ability to surface ethical tradeoffs and navigate moral uncertainty will become an increasingly valuable capability. This opens the door to several new innovation opportunities for entrepreneurs, technology companies, and forward-looking organizations.

Ethical Infrastructure Platforms

One opportunity lies in the creation of ethical infrastructure platforms—systems designed to plug into existing AI models and decision engines to provide moral evaluation layers. These platforms could function much like security software or monitoring tools, continuously assessing algorithmic behavior and flagging ethical risks.

Capabilities in this category might include:

- Multi-framework ethical scoring for algorithmic decisions

- Real-time bias detection and mitigation

- Transparency dashboards for regulators and stakeholders

- Ethical risk monitoring across large AI deployments

In effect, these platforms would provide the ethical equivalent of observability tools used in modern software systems.

Organizational Decision Copilots

Another opportunity lies in decision-support tools designed specifically for human leaders. Instead of automating decisions, these systems would act as ethical copilots—helping executives, policymakers, and product teams evaluate complex tradeoffs before implementing new technologies or policies.

Such tools might help organizations:

- Simulate the ethical consequences of product features

- Evaluate policy choices across competing value systems

- Identify stakeholder groups most likely to be affected by a decision

- Stress-test innovations against potential ethical controversies

In this model, the goal is not to replace human judgment, but to strengthen it with better visibility into ethical complexity.

Ethical Digital Twins

A particularly intriguing possibility is the development of ethical digital twins—simulation environments where organizations can test how different decisions might impact stakeholders across multiple ethical frameworks before deploying them in the real world.

Just as engineers use digital twins to simulate the performance of physical systems, leaders could use ethical simulation environments to anticipate unintended consequences, reputational risks, or fairness concerns before they emerge.

The Birth of a New Category

If these opportunities mature, Moral Uncertainty Engines could become the foundation for a new category of enterprise technology focused on ethical intelligence. Organizations would no longer rely solely on legal compliance or reactive crisis management to address ethical challenges. Instead, they would have systems designed to help them navigate those challenges proactively.

In a world where innovation increasingly shapes society at scale, the ability to operationalize ethical awareness may become just as important as the ability to write code or analyze data.

IX. The Risks and Criticisms of Moral Uncertainty Engines

Like any emerging technology category, Moral Uncertainty Engines bring both promise and potential pitfalls. While these systems could help organizations navigate complex ethical terrain more thoughtfully, they also raise legitimate concerns about how moral reasoning is translated into software and who ultimately holds responsibility for the outcomes.

If organizations are not careful, the very tools designed to improve ethical decision-making could inadvertently create new forms of risk.

The Danger of Moral Outsourcing

One of the most common criticisms is the risk of moral outsourcing. When organizations rely too heavily on algorithmic systems to evaluate ethical decisions, leaders may begin to treat those systems as final authorities rather than decision-support tools.

This can create a dangerous dynamic where responsibility quietly shifts from humans to algorithms. Instead of asking whether a decision is morally defensible, leaders may simply ask whether the system approved it.

Moral Uncertainty Engines should never replace human judgment. Their purpose is to illuminate ethical tradeoffs—not to absolve decision-makers of responsibility.

The Illusion of Objectivity

Another concern is the possibility that ethical scoring systems may create a false sense of precision. Numbers, dashboards, and scores can make complex moral questions appear more objective than they actually are.

But ethical frameworks themselves contain assumptions and value judgments. The choice of which frameworks to include, how they are weighted, and how outcomes are interpreted can all influence the system’s conclusions.

Without transparency, these embedded assumptions may go unnoticed by the people relying on the system.
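A small worked example shows how much a weighting choice can matter. The sketch below scores two hypothetical options under two frameworks and varies only the weight given to the utilitarian score; the scores and the `recommend` function are invented for illustration.

```python
from typing import Dict, Tuple

# Hypothetical (utilitarian, rights-based) scores for two options, each in [0, 1].
OPTION_SCORES: Dict[str, Tuple[float, float]] = {
    "A": (0.75, 0.0),
    "B": (0.5, 1.0),
}


def recommend(w_util: float) -> str:
    """Return the top option when the utilitarian weight is w_util and the rest goes to rights."""
    totals = {
        option: w_util * util + (1.0 - w_util) * rights
        for option, (util, rights) in OPTION_SCORES.items()
    }
    return max(totals, key=lambda o: totals[o])


for weight in (0.5, 0.875, 1.0):
    print(weight, recommend(weight))
```

With these numbers the recommendation is B at a utilitarian weight of 0.5 and A at 0.875 or above, with the switch happening at a weight of 0.8. The score looks precise either way, yet the outcome is decided by a weighting that someone had to choose.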

Cultural and Societal Bias

Ethics is deeply shaped by culture, history, and social context. A system designed around one set of moral priorities may not reflect the values of another community or region.

If Moral Uncertainty Engines are built primarily by a narrow set of organizations or cultural perspectives, they could unintentionally export those values into systems used around the world.

Designing these systems responsibly will require diverse input from ethicists, policymakers, technologists, and communities affected by the decisions being modeled.

The Complexity Challenge

Finally, there is a practical challenge: ethical reasoning is incredibly complex. Translating philosophical frameworks into computational systems is difficult, and oversimplification is always a risk.

Not every moral dilemma can be captured in a model, and not every ethical conflict can be resolved through structured analysis.

Recognizing these limitations is essential. The goal of Moral Uncertainty Engines should not be to mechanize morality, but to provide better tools for navigating difficult decisions.

If designed thoughtfully, these systems can serve as valuable companions to human judgment. But if treated as definitive authorities, they risk becoming yet another example of technology that promises clarity while quietly obscuring the deeper questions that matter most.

X. The Leadership Imperative

The rise of Moral Uncertainty Engines underscores a critical lesson for leaders: technology alone cannot solve ethical complexity. Organizations that rely on automated systems to make moral decisions without human oversight risk both moral and reputational failure.

Leaders must approach these tools as companions rather than replacements—systems designed to illuminate ethical tradeoffs, measure uncertainty, and support thoughtful deliberation.

Key Principles for Responsible Leadership

- Accountability: Leaders retain ultimate responsibility for decisions, even when supported by Moral Uncertainty Engines.

- Transparency: Ensure that the reasoning behind system recommendations is visible, understandable, and auditable by humans.

- Human Oversight: Use automated insights as decision-support, not as authoritative directives. Escalate ethically ambiguous scenarios to human judgment.

- Ethical Culture: Encourage organizational practices that prioritize ethical reflection alongside operational efficiency and innovation.

- Diversity of Perspectives: Incorporate insights from ethicists, technologists, and stakeholders representing different communities and cultural contexts.

Moral Uncertainty Engines are powerful because they make ethical ambiguity visible. But the value of that visibility depends entirely on the people interpreting it. Leaders who are willing to engage with these systems thoughtfully—questioning assumptions, evaluating tradeoffs, and embracing uncertainty—will turn ethical complexity into a strategic advantage.

In short, the technology alone does not create ethical outcomes. It is the combination of human judgment, responsible leadership, and machine-supported insight that allows organizations to navigate moral uncertainty successfully.

XI. Conclusion: Designing Systems That Know Their Limits

Moral Uncertainty Engines represent a profound shift in how we think about technology and ethics. They are not designed to replace human judgment, nor to provide definitive moral answers. Instead, they offer a framework for surfacing ethical tradeoffs, quantifying uncertainty, and supporting deliberate decision-making in complex contexts.

The systems of the future will need to balance intelligence with humility. They must optimize for outcomes while acknowledging the moral ambiguity inherent in most consequential decisions. By doing so, they create space for leaders, teams, and organizations to reflect, deliberate, and choose responsibly.

Across industries—from autonomous vehicles to healthcare triage, from hiring algorithms to public policy—ethical complexity is unavoidable. Moral Uncertainty Engines give organizations the tools to confront that complexity openly rather than hiding it behind optimization metrics or opaque algorithms.

In practice, these engines act as ethical copilots. They illuminate areas of tension, highlight disagreements between frameworks, and provide decision-makers with richer, more nuanced insights. The true measure of their success is not perfect moral accuracy, but the degree to which they enable human leaders to make informed, accountable, and ethically aware decisions.

Ultimately, the organizations that thrive in an increasingly automated and interconnected world will be those that design systems capable of acknowledging their limits—and that pair those systems with leaders willing to navigate uncertainty thoughtfully. In this way, Moral Uncertainty Engines may become one of the most important tools for fostering responsible innovation in the 21st century.

Frequently Asked Questions

1. What is a Moral Uncertainty Engine?

A Moral Uncertainty Engine is a decision-support system designed to evaluate choices through multiple ethical frameworks, quantify areas of disagreement, and provide transparent guidance or escalation when ethical uncertainty is high. Its purpose is to help organizations navigate complex moral tradeoffs rather than replace human judgment.

2. Why are Moral Uncertainty Engines important today?

As AI and algorithmic systems increasingly make decisions that affect people’s lives, the ability to surface and manage ethical uncertainty becomes critical. These engines reduce risks of overconfidence, bias, and hidden ethical assumptions, enabling organizations to make more responsible, accountable, and trusted decisions.

3. Which industries or applications can benefit from Moral Uncertainty Engines?

Any sector where complex decisions with moral implications are made can benefit, including healthcare triage, autonomous vehicles, hiring and HR systems, financial services, content moderation, and public policy. Essentially, any domain where decisions have significant ethical consequences can leverage these systems to guide thoughtful human oversight.

Disclaimer: This article speculates on the potential future applications of cutting-edge scientific research. While it is based on current scientific understanding, the practical realization of these concepts may vary in timeline and feasibility and is subject to ongoing research and development.

Image credits: Google Gemini
