Why Giving AI More Autonomy Requires Us to Give Humans More Agency

LAST UPDATED: April 10, 2026 at 7:11 PM

by Braden Kelley and Art Inteligencia

The Rise of the Machine “Doer”

For the past few years, we have lived in the era of Generative AI — a world of sophisticated chatbots and creative assistants that respond to our prompts. But as we move deeper into 2026, the landscape has shifted. We are now entering the age of Agentic AI. These are not just tools that talk; they are autonomous systems capable of executing complex workflows, making real-time decisions, and acting on our behalf across digital ecosystems.

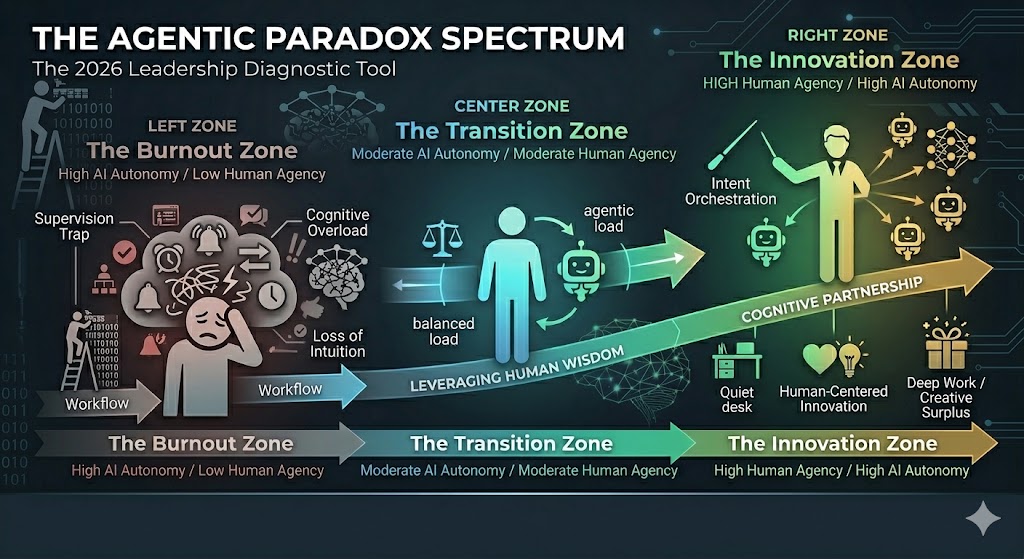

On the surface, this promises the ultimate efficiency. We imagine a future where the “busy work” vanishes, leaving us free to innovate. However, a troubling Agentic Paradox has emerged: as we grant machines more autonomy to act, many humans are finding themselves with less agency. Instead of feeling liberated, workers often feel like they are merely “babysitting” algorithms or reacting to a relentless stream of machine-generated outputs.

This disconnect creates a high-stakes leadership challenge. If we focus solely on the autonomy of the machine, we risk creating an “algorithmic anxiety” that stifles the very human creativity we need to thrive. To succeed in this new era, leaders must realize that the more powerful our AI agents become, the more we must intentionally “upgrade” the agency, authority, and strategic focus of our people.

The Thesis: The goal of innovation in 2026 is not to build the most autonomous machine, but to build a human-centered ecosystem where AI agents manage the tasks and empowered humans manage the intent.

The Hidden Cost: The Cognitive Load Crisis

The promise of Agentic AI was a reduction in workload, but for many organizations, the reality has been a shift in the type of work rather than a reduction of it. This has birthed the Cognitive Load Crisis. While an autonomous agent can process data and execute tasks 24/7, it lacks the contextual wisdom to understand the nuances of organizational culture or ethical gray areas. This leaves the human “orchestrator” in a state of perpetual high-alert.

Instead of performing deep, meaningful work, leaders and employees are becoming trapped in the Supervision Trap. They are forced to manage a relentless firehose of machine-generated notifications, approvals, and “check-ins.” This creates a fragmented mental state where the human mind is constantly context-switching between different agent streams, leading to a unique form of 2026 burnout — digital exhaustion without the satisfaction of tactile achievement.

Furthermore, as AI agents take over more of the “doing,” we see an erosion of Deep Work. When every minute is spent verifying the output of an algorithm, the quiet space required for radical innovation and strategic foresight vanishes. We are effectively trading our long-term creative capacity for short-term operational speed.

- Notification Fatigue: The mental tax of being the constant “emergency brake” for autonomous systems.

- Loss of Intuition: The danger of becoming so reliant on agentic data that we lose our “gut feel” for the market.

- The Feedback Loop: A system where humans spend more time managing machines than mentoring people.

To break this cycle, we must stop treating AI agents as simple productivity tools and start treating them as entities that require a new architecture of human attention. If we don’t manage the cognitive load, our most talented people will eventually shut down, leaving the “Magic Makers” of our organization feeling like mere cogs in a machine-led wheel.

Redefining Roles: From “The Conscript” to “The Architect”

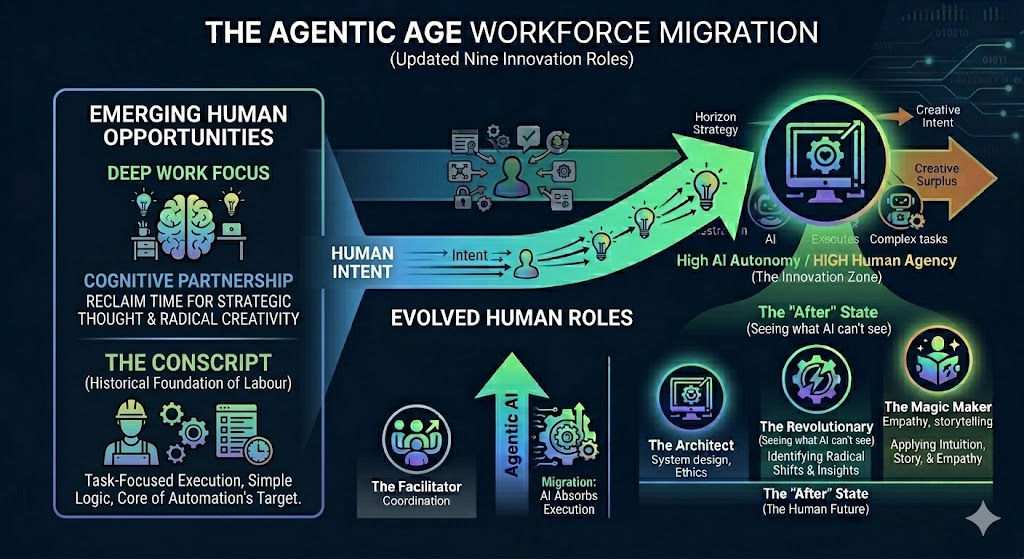

As the landscape of work shifts, so too must our understanding of how individuals contribute to the innovation ecosystem. In my work on the Nine Innovation Roles, I’ve often highlighted how different archetypes fuel organizational growth. In this agentic age, we are seeing a dramatic migration of these roles. If we are not intentional, our best people will default into the role of The Conscript — those who are merely drafted into service to support the AI’s agenda, performing the monotonous tasks of verification and data cleanup.

The goal of a human-centered transformation is to automate the role of the “Conscript” and elevate the human into the role of The Architect or The Magic Maker. When the AI handles the heavy lifting of execution, the human is finally free to focus on Intent. This is where true agency resides. Agency is not the ability to do more; it is the power to decide what is worth doing and why it matters to the human beings we serve.

However, there is a dangerous “Agency Gap” emerging. If an organization implements AI agents without redefining human job descriptions, employees lose their sense of ownership. When the machine becomes the primary creator, the human “spark” is extinguished. We must ensure that AI serves as the support staff for human intuition, not the other way around.

The Migration of Value

| The AI Agent Role | The Human Agency Role |
|---|---|
| The Conscript: Handling repetitive execution and data synthesis. | The Architect: Designing the systems and ethical frameworks for the AI. |
| The Facilitator: Coordinating schedules and managing basic workflows. | The Revolutionary: Identifying the “radical” shifts the AI isn’t programmed to see. |
| The Specialist: Performing deep-dive technical analysis at scale. | The Magic Maker: Applying empathy and storytelling to turn data into a movement. |

By clearly delineating these roles, leaders can close the Agency Gap. We must empower our teams to move away from “monitoring” and toward “orchestrating.” This transition is the difference between a workforce that feels obsolete and one that feels essential.

FutureHacking™ the Cognitive Workflow

To navigate the complexities of 2026, organizations cannot rely on reactive strategies. We must use FutureHacking™ — a collective foresight methodology — to map out how the relationship between human intelligence and agentic automation will evolve. This isn’t just about predicting technology; it’s about engineering the “Human-Agent Interface” so that it scales without crushing the human spirit.

The core of this approach involves identifying the Innovation Bonfire within your team. In this metaphor, the AI agents are the fuel — abundant, powerful, and capable of sustaining a massive output. However, the humans must remain the spark. Without the human spark of intent and empathy, the fuel is just a cold pile of logs. FutureHacking™ allows teams to visualize where the “fuel” might be smothering the “spark” and adjust the workflow before burnout sets in.

By engaging in collective foresight, teams can proactively decide which cognitive territories are “Human-Core.” These are the areas where we intentionally limit AI autonomy to preserve our creative agency and cultural identity. It’s about choosing where we want the machine to lead and where we require a human to hold the compass.

- Mapping the Friction: Identifying which agent-led tasks are creating the most mental “drag” for the team.

- Defining Non-Negotiables: Establishing which parts of the customer and employee experience must remain 100% human-centric.

- Intent Modeling: Shifting the focus from “What can the agent do?” to “What outcome are we trying to hack for the future?”

When we FutureHack our workflows, we move from being passive recipients of technological change to being the active architects of our organizational destiny. We ensure that as the machine gets smarter, our collective human intelligence becomes more focused, not more fragmented.

Framework: The “Agency First” Operating Model

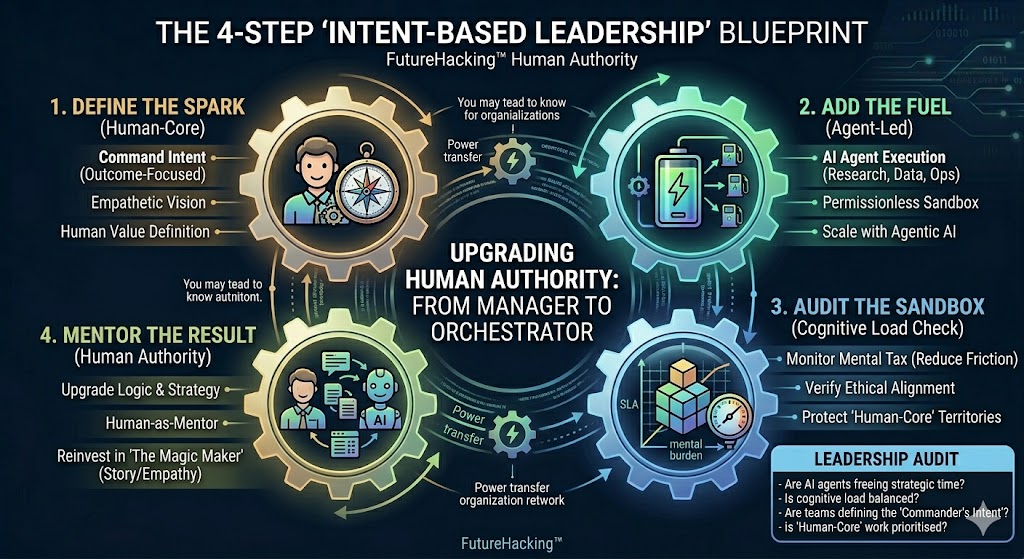

Building a resilient organization in the age of Agentic AI requires more than just new software; it requires a new operating philosophy. We must move away from a model of Machine Management and toward a model of Intent Orchestration. This framework provides three critical steps to ensure that human agency remains the primary driver of your business value.

1. Cognitive Offloading, Not Task Dumping

The goal of automation should be to reduce the mental noise for the employee, not just to move a task from a human to a machine. If a human still has to track, verify, and worry about every step the agent takes, the cognitive load hasn’t decreased — it has merely changed shape.

The Strategy: Design “set and forget” guardrails that allow agents to operate within a defined ethical and operational “sandbox,” only alerting the human when a decision falls outside of those parameters.
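
One way to picture a “set and forget” guardrail is as a small routing rule: the agent acts on its own inside the sandbox, and the human only hears about decisions that fall outside it. The sketch below is purely illustrative. The names `Guardrails`, `Decision`, and `route`, the spend limit, and the action names are all hypothetical assumptions, not part of any specific agent platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    """Hypothetical sandbox limits agreed on by the human up front."""
    max_spend: float
    allowed_actions: frozenset


@dataclass(frozen=True)
class Decision:
    """A single action the agent proposes to take (illustrative fields)."""
    action: str
    spend: float


def route(decision: Decision, rails: Guardrails) -> str:
    """Return 'auto' when the decision stays inside the sandbox, else 'escalate'."""
    if decision.action not in rails.allowed_actions:
        return "escalate"  # action type was never approved
    if decision.spend > rails.max_spend:
        return "escalate"  # approved action, but over the spend limit
    return "auto"


# Assumed example values, chosen only for illustration.
rails = Guardrails(
    max_spend=500.0,
    allowed_actions=frozenset({"reorder_stock", "send_reminder"}),
)
proposed = [
    Decision("reorder_stock", 120.0),
    Decision("issue_refund", 50.0),
    Decision("reorder_stock", 900.0),
]

# Only the exceptions reach the human; everything else runs quietly.
needs_human = [d for d in proposed if route(d, rails) == "escalate"]
```

In this sketch the human sees two of the three decisions. The design choice that matters is that the limits are defined once, in advance, so attention is spent on exceptions rather than on every step the agent takes.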

2. The “Human-in-the-Loop” Upgrade

We must shift the role of the worker from Monitor to Mentor. In the old model, the human checks the machine’s homework for errors. In the “Agency First” model, the human coaches the agent on why certain decisions are better than others, treating the AI as an apprentice. This reinforces the human’s position as the source of wisdom and authority, preventing the “Conscript” mentality.

3. Intent-Based Leadership

Management must evolve to focus on the Intent rather than the Activity. In a world where agents can generate infinite activity, “busyness” is no longer a proxy for value. Leaders must empower their teams to spend their time defining the “Commander’s Intent” — the high-level objectives and human-centered outcomes that the AI agents must then figure out how to achieve.

The Agency Audit: Ask your team this week: “Does this new AI agent give you more time to think strategically, or does it just give you more machine-generated work to manage?” The answer will tell you whether you are facing the Agentic Paradox.

Conclusion: Leading the Human-Centered Revolution

The true test of leadership in 2026 is not how quickly you can deploy autonomous agents, but how effectively you can protect and amplify the human spirit within your organization. As we navigate the Agentic Paradox, we must remember that technology is a force multiplier, and a multiplier needs a human value to multiply. Without a clear sense of agency, even the most advanced AI becomes a source of friction rather than a source of freedom.

By addressing the Cognitive Load Crisis and intentionally moving our teams out of “Conscript” roles and into “Architectural” ones, we do more than just improve efficiency — we future-proof our culture. We ensure that our organizations remain places of meaning, creativity, and purpose.

The “Year of Truth” demands that we be honest about the mental tax of automation. It calls on us to use FutureHacking™ not just to map out our tech stacks, but to map out our human potential. The companies that win the next decade won’t be those with the smartest agents; they will be the ones that used those agents to give their people the time and agency to be truly, radically human.

“Innovation is a team sport where the machines play the support roles so the humans can score the points.”

Are you ready to hack your agentic future?

Frequently Asked Questions

What is the primary difference between Generative AI and Agentic AI?

Generative AI focuses on creating content (text, images, code) based on human prompts. Agentic AI goes a step further by having the autonomy to execute multi-step workflows, make decisions, and interact with other systems to complete a goal without constant human intervention.

How can leaders identify if their team is suffering from the Agentic Paradox?

Look for signs of the “Supervision Trap,” where employees spend more time managing and verifying machine outputs than performing strategic work. If your team feels busier but reports a decline in creative output or “Deep Work,” they are likely experiencing the paradox.

What role does FutureHacking™ play in managing AI integration?

FutureHacking™ is a collective foresight methodology used to visualize the long-term impact of AI on organizational roles. It helps teams proactively define “Human-Core” territories, ensuring that as AI scales, it supports rather than smothers human agency and innovation.

Image credits: Google Gemini

Content Authenticity Statement: The topic area, key elements to focus on, etc. were decisions made by Braden Kelley, with a little help from Google Gemini to clean up the article, add images and create infographics.

Sign up here to get Human-Centered Change & Innovation Weekly delivered to your inbox every week.