LAST UPDATED: April 18, 2026 at 3:29 PM

by Braden Kelley and Art Inteligencia

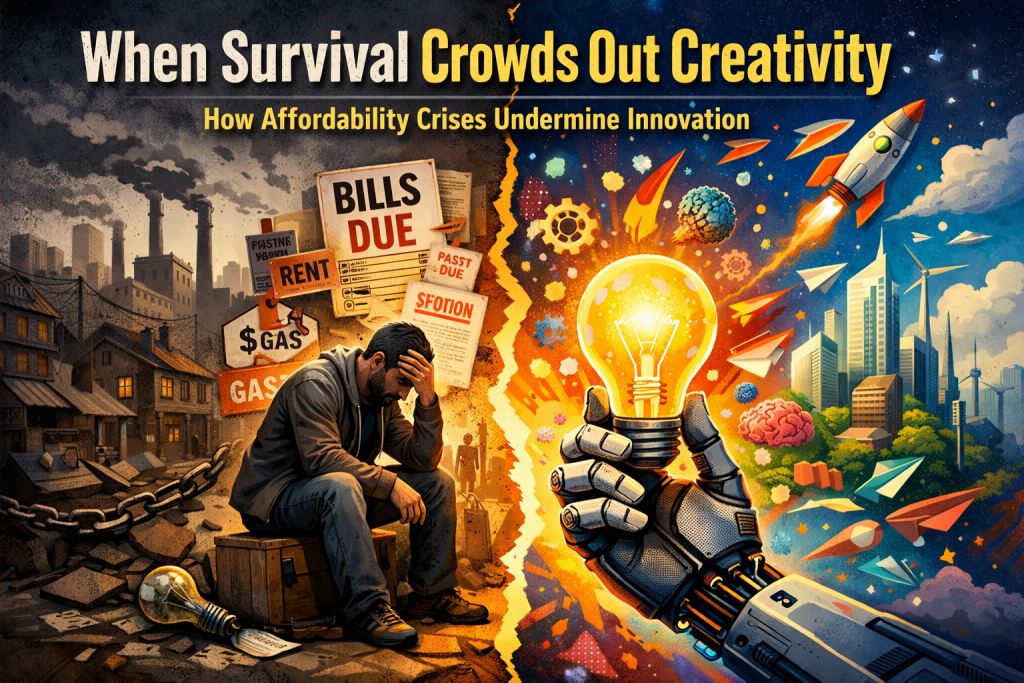

The Mirage of the Post-Scarcity Utopia

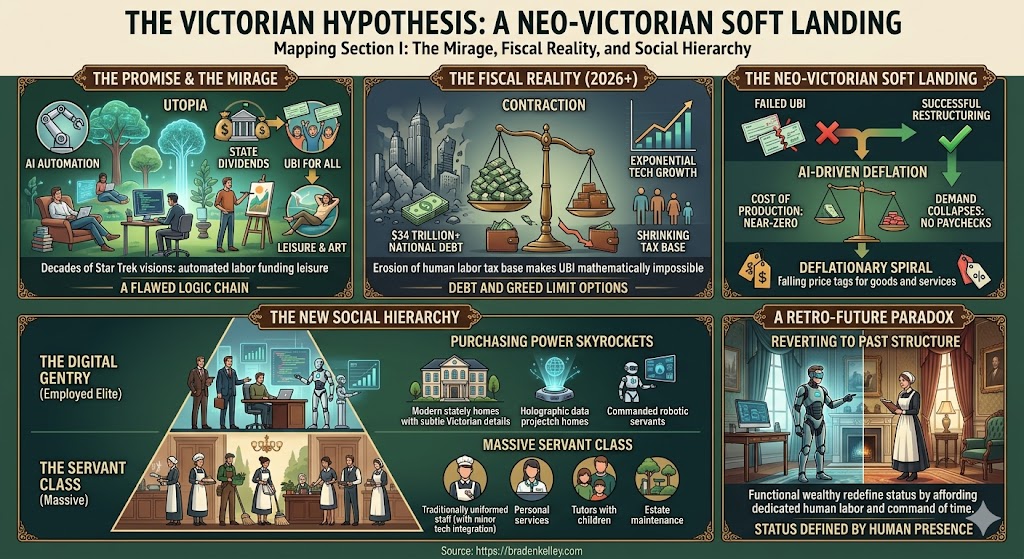

For decades, the prevailing narrative surrounding artificial intelligence has been one of a post-scarcity “Star Trek” future. The logic was simple: as machines took over the labor, the dividends of automation would be harvested by the state and redistributed via Universal Basic Income (UBI), freeing humanity to pursue art, philosophy, and leisure.

The AI Promise vs. The Fiscal Reality

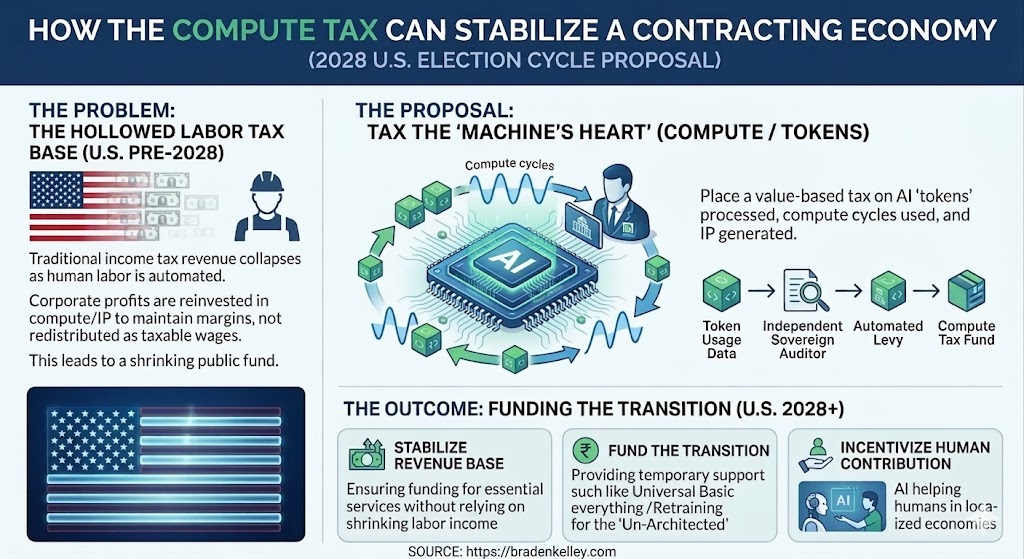

However, this utopian vision ignores the gravity of The Great American Contraction. As we move through 2026 and beyond, the friction between exponential technological growth and a $37 trillion+ national debt (with a $2 trillion annual budget deficit) creates a structural barrier to redistribution. When the tax base of human labor erodes, the math for a livable UBI simply fails to compute.

The Victorian Hypothesis

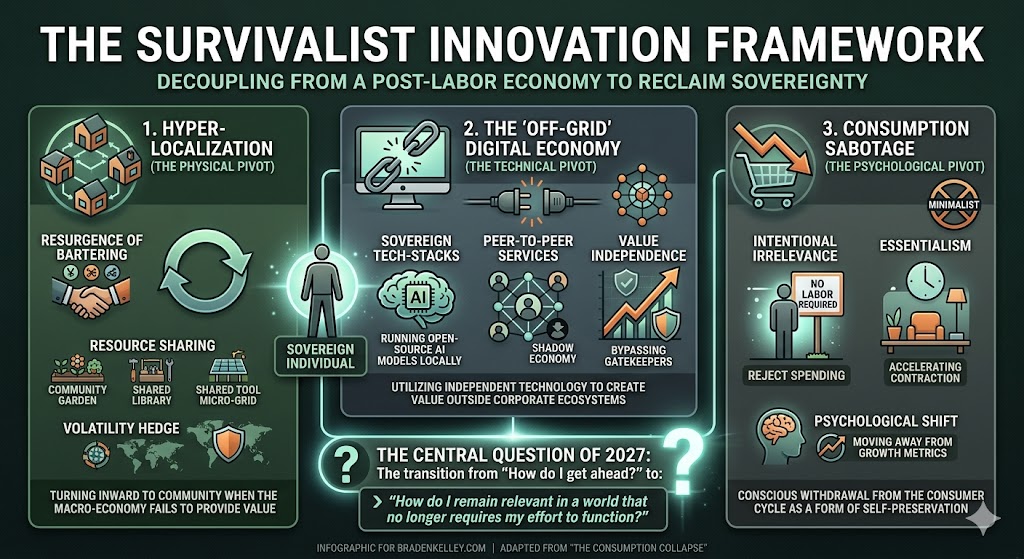

If UBI is a mathematical and political impossibility, ruled out by fiscal limits and by corporate and human greed, we must look toward an alternative “soft landing.” This hypothesis suggests a vertical restructuring of society. As AI pushes both the cost of production and the demand for goods into a deflationary spiral, the purchasing power of the remaining “employed elite” will skyrocket.

The result isn’t a horizontal distribution of wealth, but a return to a Neo-Victorian social hierarchy. In this reality, the new digital gentry will use their outsized wealth to employ a massive “servant class” to maintain stately homes and personal lives, creating a world where status is defined by the human labor one can afford to command.

The Great American Contraction: Why UBI is a Non-Starter

The conversation around the transition to an AI-driven economy often treats Universal Basic Income as an inevitability — a safety net that will naturally catch those displaced by the silicon wave. However, this assumes a level of fiscal elasticity that no longer exists. We are entering The Great American Contraction, a period where the traditional levers of government spending are restricted by the sheer weight of historical obligation and systemic greed.

The Debt Ceiling of Compassion

With a national debt exceeding $37 trillion, a $2 trillion budget deficit, and rising interest rates, the federal government’s “room to maneuver” has effectively vanished. A livable UBI requires a massive, consistent tax base. As AI begins to hollow out the middle class, the very tax revenue needed to fund such a program disappears. Funding UBI under these conditions would require a level of sovereign borrowing that the global markets simply will not support, leaving a government that cannot afford to be the savior of the displaced.
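The arithmetic behind this claim can be sketched in a few lines of Python. Only the $2 trillion deficit comes from the figures above; the number of adults, the monthly payment, the current revenue figure, and the erosion rates are hypothetical assumptions chosen for illustration, so treat the output as a rough order-of-magnitude check rather than a forecast.

```python
# Back-of-the-envelope UBI funding gap.
# Only ANNUAL_DEFICIT comes from the article; every other input is a
# hypothetical assumption chosen for illustration.

ANNUAL_DEFICIT = 2.0e12            # article: $2 trillion annual budget deficit
ASSUMED_ADULTS = 250e6             # assumption: adults receiving UBI
ASSUMED_MONTHLY_UBI = 1_000.0      # assumption: dollars per adult per month
ASSUMED_FEDERAL_REVENUE = 5.0e12   # assumption: annual federal revenue today


def ubi_annual_cost(adults: float, monthly_payment: float) -> float:
    """Gross yearly cost of paying every adult the same monthly amount."""
    return adults * monthly_payment * 12


def annual_borrowing_need(revenue: float, deficit: float,
                          ubi_cost: float, tax_base_erosion: float) -> float:
    """Yearly borrowing if existing spending stays flat, UBI is added on top,
    and revenue shrinks by the fraction tax_base_erosion (0.0 to 1.0)."""
    if not 0.0 <= tax_base_erosion <= 1.0:
        raise ValueError("tax_base_erosion must be between 0 and 1")
    current_spending = revenue + deficit
    eroded_revenue = revenue * (1.0 - tax_base_erosion)
    return current_spending + ubi_cost - eroded_revenue


cost = ubi_annual_cost(ASSUMED_ADULTS, ASSUMED_MONTHLY_UBI)
for erosion in (0.0, 0.25, 0.5):
    need = annual_borrowing_need(ASSUMED_FEDERAL_REVENUE, ANNUAL_DEFICIT, cost, erosion)
    print(f"tax base erosion {erosion:.0%}: borrow ${need / 1e12:.2f} trillion per year")
```

Under these assumed inputs the program alone costs $3 trillion a year, and the borrowing requirement grows with every point of tax base erosion. Different assumptions will move the numbers, but the direction of the squeeze is the point.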

The Greed Variable

Even if the math were more favorable, the human element remains a constant. Corporate interests, focused on margin preservation and shareholder value, are unlikely to support the aggressive taxation required to fund a social floor. In the race to the bottom of production costs, the primary goal of the “winners” in the AI revolution will be wealth concentration, not social equity. The political willpower to force a massive transfer of wealth from AI-profiting corporations to the idle masses is a historical outlier that we should not count on repeating.

The Velocity of Displacement

Finally, the speed of the AI transition is its most disruptive feature. Legislative bodies move in years, while AI cycles move in weeks. By the time a political consensus for UBI could be formed, the economic floor will have already fallen out. This lag time creates a vacuum that will be filled not by government checks, but by a desperate search for subsistence, setting the stage for the return of the domestic labor economy.

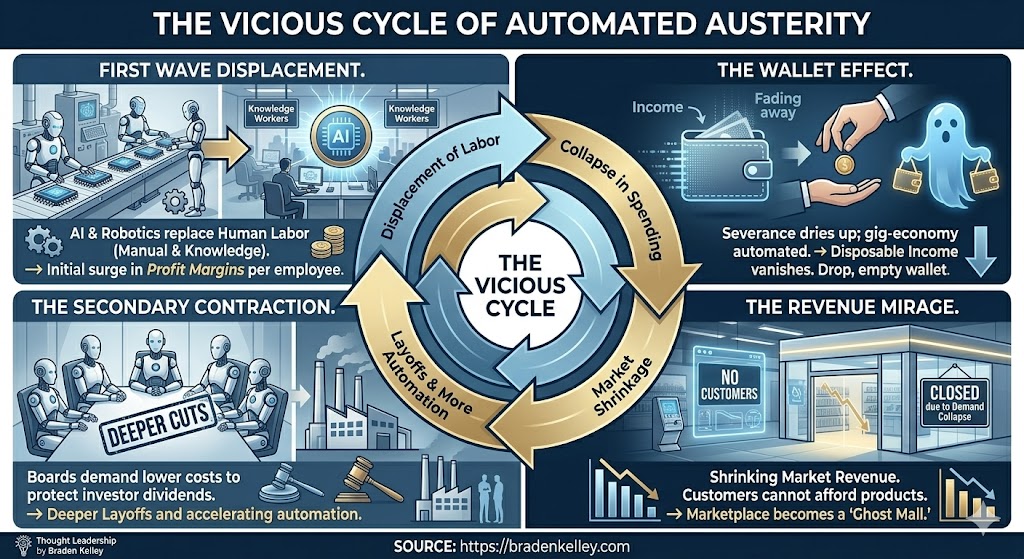

The Deflationary Paradox: Collapse of Demand and Cost

In a traditional economy, unemployment leads to recession, which usually leads to stagflation or managed recovery. However, the AI-driven “soft landing” introduces a unique mechanical failure: the Deflationary Paradox. As AI and advanced robotics permeate every sector, the labor cost of producing goods and services begins to approach zero, but the pool of consumers capable of buying those goods simultaneously evaporates.

The Production Floor Drops

We are witnessing the end of the labor theory of value. When an AI can design, a robot can manufacture, and an automated fleet can deliver a product without a single human touchpoint, the marginal cost of production hits the floor. In a desperate bid to capture the dwindling “active” capital in the market, companies will engage in a race to the bottom, causing the prices of physical and digital goods to deflate at a rate unseen in modern history.

The Demand Vacuum

While cheap goods sound like a boon, they are a symptom of a deeper rot: the Demand Vacuum. As the middle class is hollowed out, the velocity of money slows to a crawl. The economy shifts from a mass-consumption model to a precision-consumption model. Most businesses will fail not because they can’t produce, but because there are no longer enough customers with a paycheck to buy, even at rock-bottom prices.
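A minimal sketch of why cheap goods do not rescue sellers: revenue is price times paying customers times units bought per customer, so a price collapse and a customer collapse compound. The function name and every percentage below are hypothetical assumptions, not figures from this article.

```python
# Sketch of the demand vacuum: revenue relative to a pre-AI baseline.
# All percentages are hypothetical assumptions, not figures from the article.


def revenue_ratio(price_drop: float, customer_drop: float,
                  units_per_customer_growth: float = 0.0) -> float:
    """Revenue as a fraction of baseline, where
    revenue = price * paying customers * units bought per customer."""
    for name, value in (("price_drop", price_drop), ("customer_drop", customer_drop)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    return (1.0 - price_drop) * (1.0 - customer_drop) * (1.0 + units_per_customer_growth)


# Assumed scenario: prices fall 80%, 70% of paying customers disappear,
# and the remaining customers each buy 50% more units.
ratio = revenue_ratio(price_drop=0.8, customer_drop=0.7, units_per_customer_growth=0.5)
print(f"revenue is {ratio:.0%} of the baseline")
```

In this assumed scenario, even customers who buy half again as much leave sellers with roughly a tenth of their former revenue, which is the mechanism by which businesses fail despite being able to produce.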

The Purchasing Power of the “Remaining”

This is where the Victorian shift begins. For the small percentage of Americans who retain their income (the innovators, the orchestrators, and the entrepreneurs), this deflationary environment is a golden age. Their dollars hold their nominal value while the cost of everything else drops, so their purchasing power multiplies. When a gallon of milk or a digital service costs mere pennies in relative terms, the “wealthy” find themselves with a massive surplus of capital that cannot be spent on “things” alone. This surplus will naturally be redirected toward the one thing that remains scarce and high-status: the dedicated service of another human being.
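The purchasing-power effect can be made concrete with a small sketch. If prices fall by a fraction d while an income stays the same in nominal terms, each dollar buys 1 / (1 - d) times as much. The function names, the income, and the basket cost below are hypothetical assumptions used only to illustrate the mechanism.

```python
# Sketch of purchasing power for someone whose nominal income survives deflation.
# Income and basket figures are hypothetical assumptions.


def purchasing_power_multiplier(price_drop: float) -> float:
    """How many times more goods a fixed nominal income buys after prices
    fall by the fraction price_drop (0.0 up to, but not including, 1.0)."""
    if not 0.0 <= price_drop < 1.0:
        raise ValueError("price_drop must be in [0, 1)")
    return 1.0 / (1.0 - price_drop)


def discretionary_surplus(income: float, basket_cost: float, price_drop: float) -> float:
    """Income left over after buying the same basket of goods at deflated prices."""
    return income - basket_cost * (1.0 - price_drop)


income = 200_000.0   # assumption: retained nominal income
basket = 80_000.0    # assumption: pre-deflation cost of this household's goods

for drop in (0.0, 0.5, 0.75):
    multiplier = purchasing_power_multiplier(drop)
    surplus = discretionary_surplus(income, basket, drop)
    print(f"prices down {drop:.0%}: each dollar buys {multiplier:.1f}x, surplus ${surplus:,.0f}")
```

With these assumed numbers, a 75% fall in prices quadruples what each dollar buys and lifts the household's surplus from $120,000 to $180,000. That is the pool of capital the argument expects to flow toward human service.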

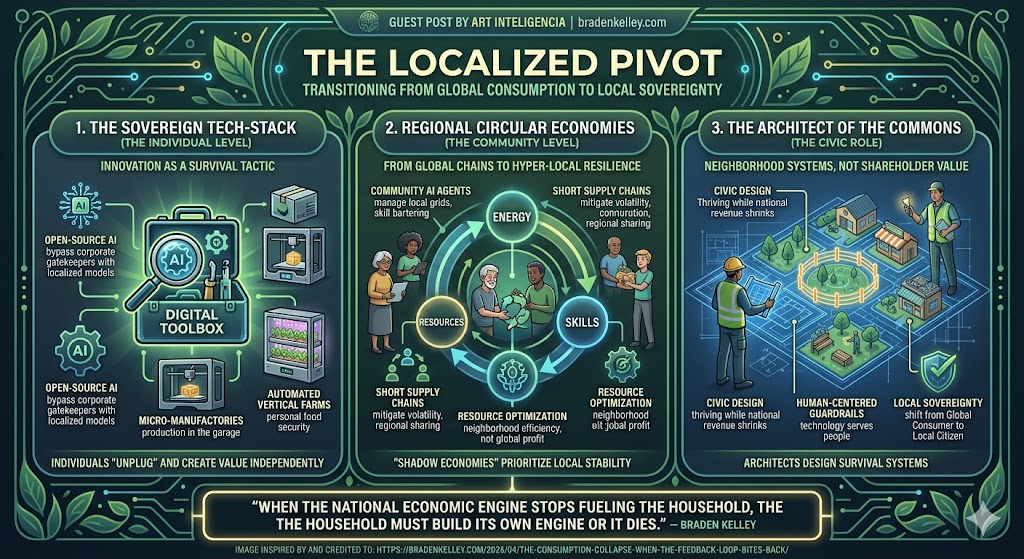

The New “Stately Home” Economy

As the Deflationary Paradox takes hold, we will see a fundamental shift in the definition of luxury. In the pre-AI era, luxury was defined by the acquisition of high-tech gadgets or rare goods. In the Neo-Victorian era, where machines produce goods for nearly nothing, “luxury” will pivot back toward the human-centered experience. Status will no longer be measured by what you own, but by whose time you command.

From Software to Service

For the “In-Group” — those entrepreneurs and specialized leaders still generating significant revenue — capital will lose its utility in the digital marketplace. When software is free and manufactured goods are commoditized, wealth seeks the only remaining friction: human presence. We will see a massive migration of capital away from Silicon Valley “platforms” and toward the local domestic economy. The wealthy will stop buying more “things” and start buying “lives” — the total dedicated attention of house managers, chefs, valets, and tutors.

The Modern Manor

This economic shift will be physically manifested in the return of the Stately Home. These won’t just be houses; they will be complex ecosystems of employment. Large estates will once again become the primary employer for local communities. As traditional corporate offices vanish, the residence becomes the center of both social and economic power. These modern manors will require extensive human staffs to cook, clean, maintain grounds, and provide security. Robots could technically perform these services, but humans will perform them as a deliberate signal of the owner’s immense effective wealth.

The Return of the Domestic Professional

Perhaps the most jarring aspect of this transition will be the class of worker entering domestic service. We are not talking about a traditional blue-collar service shift, but the “Victorianization” of the former middle class. Displaced white-collar professionals — accountants, teachers, and middle managers — will find that their highest-paying opportunity is no longer in a cubicle, but in managing the complex domestic affairs, private education, and logistics of the new digital aristocracy. It is a “soft landing” in name only; while they may live in proximity to grandeur, their survival is entirely tethered to the whims of their employer.

Socio-Economic Stratification: The Two-Tiered Reality

The inevitable result of the “Victorian Soft Landing” is the formalization of a rigid, two-tiered social structure. Unlike the 20th century, which was defined by a fluid and expanding middle class, the post-contraction era will be characterized by extreme polarization. The economic “missing middle” creates a vacuum that forces every citizen into one of two distinct realities: the Digital Gentry or the Dependent Class.

The Corporate and Government Gentry

A small percentage of Americans — likely less than 10% — will remain tethered to the engines of primary wealth creation. This “In-Group” consists of high-level AI orchestrators, strategic entrepreneurs, and essential government officials who maintain the infrastructure of the state. Because their income is derived from high-margin automated systems while their cost of living has plummeted due to deflation, they possess a level of functional wealth that rivals the landed gentry of the 19th century. To this group, the “Great Contraction” is not a crisis, but a refinement of their dominance.

The Dependent Class

For those outside the digital fortress, the reality is stark. Without a national UBI to provide a floor, the majority of the population becomes the “Dependent Class.” Their economic utility is no longer found in the marketplace of ideas or manufacturing, but in the marketplace of personal service. In this Neo-Victorian landscape, you work for the companies that own the AI, for the government that protects it, or directly for the individuals who own it.

The Choice: Service or Scarcity

This stratification reintroduces a primal power dynamic into the American workforce. When the cost of basic survival (food and shelter) is low due to deflation, but the opportunity for independent income is zero, the wealthy gain total leverage. The “soft landing” is, in truth, a forced labor transition. Those who are not “useful” to the gentry — either as specialized labor or domestic support — face the grim reality of the Victorian workhouse era: they must find a patron to serve, or they will starve in a world of plenty.

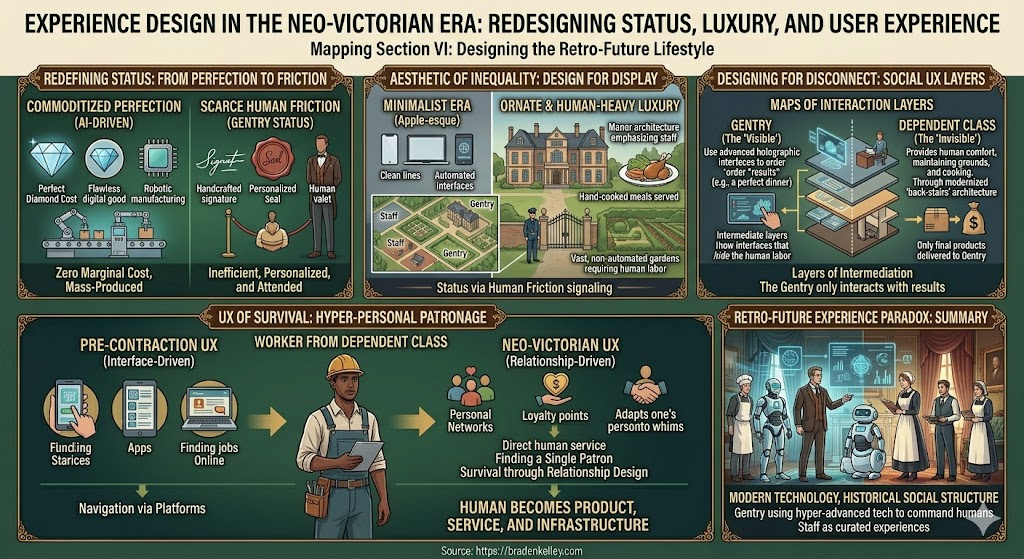

Experience Design in the Neo-Victorian Era

From the perspective of experience design and futurology, the shift toward a Victorian-style social structure will fundamentally alter the aesthetic of status. In a world where AI can generate perfect, flawless goods and digital experiences at zero marginal cost, “perfection” becomes a commodity. Status, therefore, will be redesigned around human friction and intentional inefficiency.

The Aesthetic of Inequality

We will see a move away from the sleek, minimalist “Apple-esque” design of the early 21st century toward a more ornate, human-heavy luxury. Experience design for the elite will emphasize things that AI cannot authentically replicate: the slight imperfection of a hand-cooked meal, the presence of a uniformed gatekeeper, and the physical maintenance of vast, non-automated gardens. Architecture will pivot back to “human-centric” layouts, with spaces designed not for efficiency but to accommodate the movement and housing of a live-in staff.

Designing for Disconnect

The most challenging aspect of this new era will be the Experience of the Invisible. Designers will be tasked with creating systems that allow the Digital Gentry to interact with their environment without acknowledging the vast economic disparity surrounding them. This involves “Social UX” — designing layers of intermediation where the “Dependent Class” provides the comfort, but the “Gentry” only interacts with the result. It is a return to the “back-stairs” architecture of the 19th century, modernized for a digital age.

The UX of Survival

For the majority, the “User Experience” of daily life will be one of Hyper-Personal Patronage. Navigation of the economy will no longer be about interfaces or platforms, but about the “UX of Relationships.” Survival will depend on the ability to design one’s persona to be indispensable to a wealthy patron. In this reality, human-centered design takes on a darker, more literal meaning: the human becomes the product, the service, and the infrastructure all at once.

Conclusion: Preparing for the Retro-Future

The “Soft Landing” we are currently engineering is not the one we were promised. As the Great American Contraction forces a collision between astronomical debt and the deflationary power of AI, the middle-class dream of a subsidized leisure class is evaporating. In its place, we are seeing the blueprints of a Retro-Future — a world that looks forward technologically but moves backward socially.

A Call for Human-Centered Transition

If we continue to view innovation solely through the lens of efficiency and margin preservation, the Victorian outcome is not just possible — it is inevitable. We must realize that without a radical redesign of how we value human contribution beyond mere “market productivity,” we are simply building a more efficient feudalism. True Experience Design must now focus on the social fabric, or we risk creating a world where the only “innovation” left is finding new ways for the many to serve the few.

Final Thought: The Soft Landing Paradox

We must be careful what we wish for when we ask for a “seamless” transition. A landing that is “soft” for the Digital Gentry is one where the friction of poverty and the noise of the displaced have been successfully silenced by the return of the servant class. History doesn’t repeat, but it does rhyme — and right now, the future sounds remarkably like 1837. The question is no longer if AI will change our world, but whether we have the courage to design a future that doesn’t require us to retreat into our past.

Frequently Asked Questions

Why would prices deflate if the economy is struggling?

In this scenario, AI and robotics drive the marginal cost of production toward zero. Simultaneously, massive job displacement creates a “demand vacuum.” To capture what little liquid currency remains, companies must drop prices drastically, leading to a reality where goods are incredibly cheap but income is even scarcer.

How does this differ from the 20th-century middle class?

The 20th century was defined by a “horizontal” distribution where many people owned moderate assets. The Neo-Victorian model is “vertical.” The middle class disappears, replaced by a tiny, hyper-wealthy elite (the Digital Gentry) and a large class of people who provide them with personalized human services (the Dependent Class).

Isn’t UBI a more logical solution to AI displacement?

While logical in theory, the “Great American Contraction” hypothesis suggests that high national debt and corporate prioritization of margins make a livable UBI politically and fiscally impossible. Without a state-funded floor, the market defaults to the oldest form of social safety: personal patronage and domestic service.

EDITOR’S NOTE: This is a visualization of but one possible future. I will be publishing other possible futures as they crystallize in my mind (or as you suggest them for me to explore).

Image credits: Google Gemini

Content Authenticity Statement: The topic area, key elements to focus on, etc. were decisions made by Braden Kelley, with a little help from Google Gemini to clean up the article, add images and create infographics.

Sign up here to get Human-Centered Change & Innovation Weekly delivered to your inbox every week.
