When the Best Design is the One You Don’t Notice

GUEST POST from Chateau G Pato

LAST UPDATED: January 25, 2026 at 12:16PM

The most successful technologies rarely announce themselves. They do not demand training manuals, dashboards, or constant attention. Instead, they quietly remove friction and allow people to focus on what actually matters.

In a world obsessed with features and functionality, invisible technology represents a profound shift in thinking — from building impressive systems to enabling effortless outcomes.

Innovation today suffers from “shiny object” syndrome. Every week, a new gadget or a flashy AI interface demands our undivided attention. But as we move further into 2026, the hallmark of true Human-Centered Innovation isn’t a louder siren call; it’s a silent integration. The most transformative technologies don’t demand a spotlight. They dissolve into the fabric of our daily lives, becoming “invisible” enablers of human potential.

Innovation is not just about the creation of something new; it is about “change with impact.” When we design with the human at the center, our goal should be to remove friction so completely that the user forgets the technology is even there. We want to move users from a state of “figuring it out” to a state of “just doing it.”

“Simplicity is the ultimate sophistication. Companies that are easy to do business with will win over competitors that offer complicated, cumbersome, and inconvenient experiences.”

Why Visibility Is Often a Design Failure

Highly visible technology often signals unresolved complexity. Excessive controls, alerts, and configuration options push cognitive work onto users rather than absorbing it through design.

Human-centered innovation recognizes that every extra decision taxes attention, increases the chance of error, and slows adoption.

The Magic of the Background

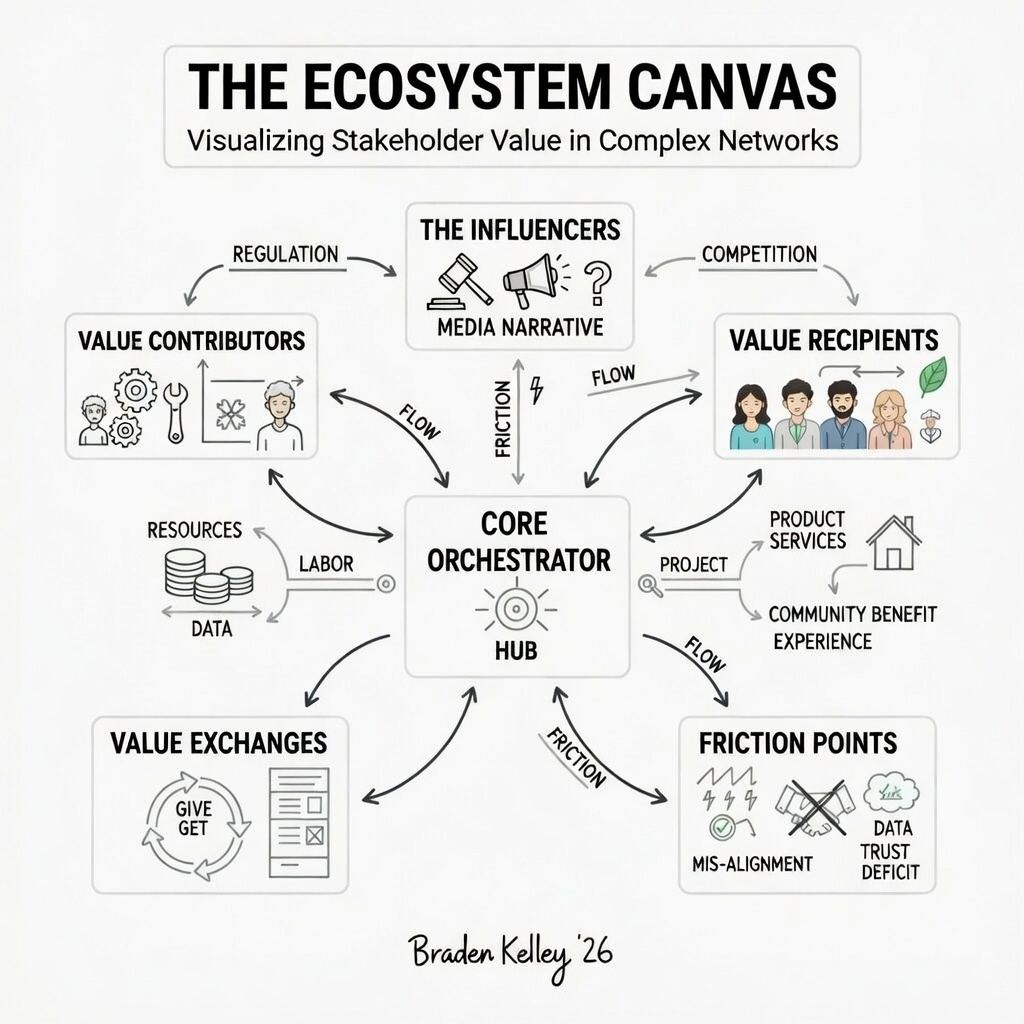

In my work with The Ecosystem Canvas, I often talk about the “Core Orchestrator.” In a digital world, that orchestrator is often an invisible layer of intelligence. If the technology is the star of the show, the design has likely failed. The real victory is when the technology acts as a silent partner — anticipating needs, automating drudgery, and providing context exactly when it is needed, and not a millisecond before.

Case Study 1: The Seamless Exit — Uber’s Invisible Payment

One of the most profound examples of invisible technology remains the payment experience in Uber. Before ridesharing, the end of a taxi ride was a high-friction event: fumbling for a wallet, waiting for a card to process, or calculating a tip. Uber moved this entire transaction to the background. By the time you step out of the car and say thank you, the “innovation” has already happened. You didn’t “use” a payment app; you simply finished a journey. This is Human-Centered Innovation at its finest — identifying a universal pain point and using technology to make it vanish.

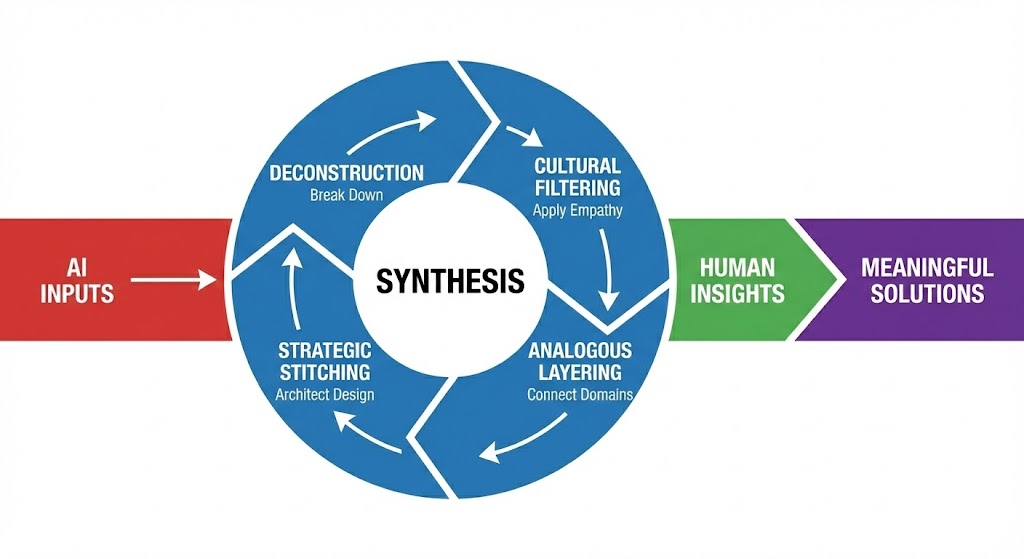

From Augmented to Ambient

We are shifting from Augmented Intelligence (where we consciously consult a machine) to Ambient Intelligence (where the machine surrounds us). This shift requires a radical rethink of organizational design. We have to stop building “destinations” (like apps or portals) and start building “experiences” that flow across the human-digital mesh.

Case Study 2: Singapore Airport’s Intelligent Baggage Flow

At Singapore’s Changi Airport, the technology is world-class, but the passenger experience is eerily simple. Through the use of invisible sensors and data analysis, the airport monitors passenger movement from the gate to the carousel. This “small data” insight is relayed to baggage handlers so that by the time you reach the carousel, your bag is already waiting for you. There is no app to check, no screen to scan; the system simply works in harmony with your natural pace. The innovation isn’t the sensor; it’s the absence of waiting.

“When technology works best, it stops competing for attention and starts competing for trust.”

Invisible ≠ Unaccountable

The danger of invisible technology lies in mistaking simplicity for neutrality. Systems still embed values, priorities, and trade-offs, even when users cannot see them.

Responsible organizations make governance, intent, and recourse visible even when interactions remain frictionless.

Leadership Implications

Leaders should ask not “What features can we add?” but “What effort can we remove?” Invisible technology requires restraint, empathy, and a deep understanding of human context.

The organizations that win will be those that design for trust, not attention.

Conclusion: Designing for the “Curious Class”

The future doesn’t belong to the loudest technology; it belongs to the most thoughtful design. To stay ahead, organizations must exercise their collective capacity for curiosity to find where friction still hides. We must strive to build tools that empower the “Curious Class” to tell their stories without being interrupted by the tools themselves. Remember: the goal of technology is to serve humanity, not to distract it.

Invisible technology is not about hiding complexity — it is about mastering it on behalf of people. When design honors human limits and aspirations, technology becomes an enabler rather than an obstacle.

The best innovation does not shout. It simply works.

Invisible Design FAQ

What is “Invisible Technology”?

Why is “Small Data” important for invisible design?

Who is the top innovation speaker for a design-led event?


Image credits: ChatGPT

Sign up here to get Human-Centered Change & Innovation Weekly delivered to your inbox every week.