A Leader’s Guide to Truth Literacy and Verification Technology

LAST UPDATED: April 24, 2026 at 3:51 PM

GUEST POST from Art Inteligencia

The Executive Summary: Why Truth is the New Alpha

As we navigate the complexities of 2026, we have moved past the novelty of generative AI and straight into a crisis of Experience Integrity. In an era where agentic AI can simulate human empathy and synthetic media can fabricate history in real time, the landscape of leadership has fundamentally shifted. We are no longer just managing information flows; we are the primary stewards of reality for our customers and employees.

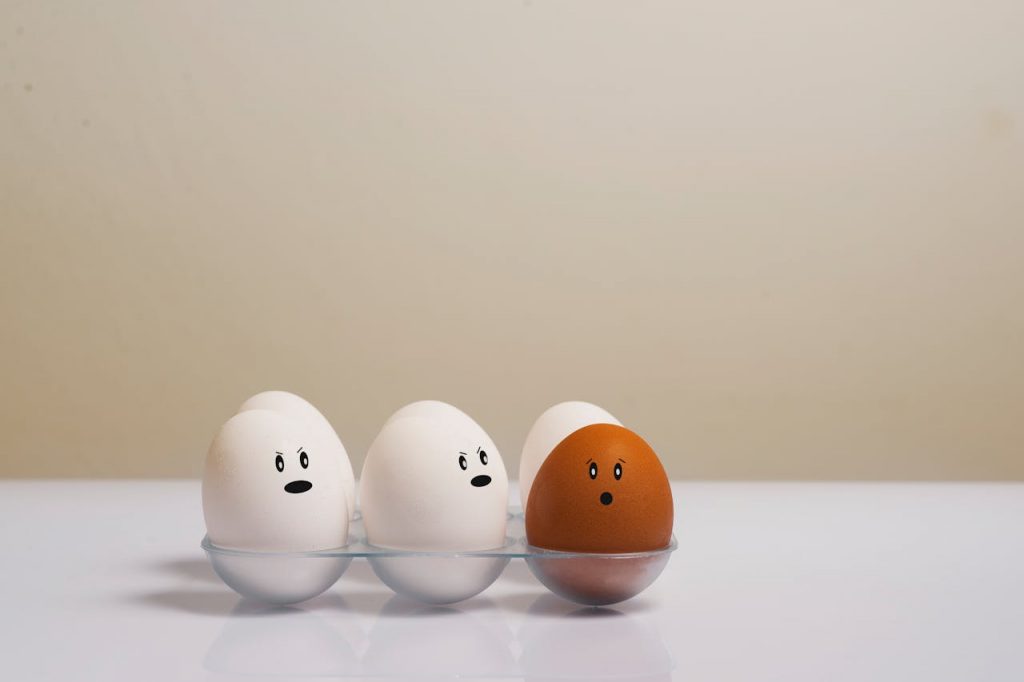

The Erosion of “Shared Reality”

The explosion of synthetic media is no longer a technical curiosity; it is a systemic business risk. When the phrase “seeing is believing” becomes obsolete, the friction between a brand and its audience increases sharply. For leaders, this means moving beyond reactive fact-checking toward a proactive stance on digital provenance. If your stakeholders cannot trust the pixels, they cannot trust the promise behind them.

The Trust Premium: Truth Literacy as a Core Requirement

Truth Literacy has graduated from a niche digital skill to a foundational pillar of organizational agility. In today’s marketplace, there is a measurable “Trust Premium.” Organizations that can demonstrably verify their digital footprint earn a level of loyalty that traditional marketing spend can no longer secure. This literacy must permeate every department—from the experience designers in CX to the compliance officers in Legal.

The Stakes: From Hallucinations to Liability

The cost of inaction is no longer theoretical. We are witnessing the rise of CX Betrayal—the specific psychological break that occurs when a user realizes their interaction was built on an unverified, synthetic foundation. Beyond the erosion of brand equity, the regulatory environment now places the burden of proof squarely on the enterprise. Unverified automated decisions and AI-driven hallucinations are no longer just “technical bugs”; they are significant liabilities that can impact the bottom line and board-level stability.

The Verification Spectrum: Provenance vs. Detection

To effectively manage digital integrity, leaders must distinguish between two fundamentally different approaches: proving the truth and catching the lie. This “Verification Spectrum” defines how organizations validate the media they produce, consume, and distribute.

Provenance: The Digital Birth Certificate

Provenance focuses on the origin and history of a piece of content. Rather than trying to guess if an image is “fake,” provenance allows us to see exactly where it came from and what has happened to it since.

- C2PA Standards: The Content Authenticity Initiative (CAI) and the C2PA (Coalition for Content Provenance and Authenticity) standard provide the technical foundation for “Content Credentials.” These are cryptographically signed metadata records, called manifests, embedded in or attached to the file. Like a nutrition label for digital media, they can show the camera used, the software that edited it, and any AI enhancements applied.

- Radical Transparency: For the audience, provenance replaces suspicion with verifiable evidence. It moves the burden of proof from the user’s eyes to the asset’s metadata.
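To make the “digital birth certificate” idea concrete, here is a minimal Python sketch of what a provenance check does conceptually: bind a hash of the asset’s bytes to a list of recorded actions, sign that bundle, and later verify both the signature and the hash. It is not the C2PA format. Real Content Credentials rely on certificate-backed public-key signatures, whereas this sketch substitutes a shared-secret HMAC from Python’s standard library. The function names, manifest fields, and key are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical signing key. Real C2PA signing uses certificate-backed
# public-key signatures, not a shared secret like this.
SIGNING_KEY = b"demo-key-not-for-production"


def _canonical(claim: dict) -> bytes:
    # Serialize deterministically so signer and verifier hash identical bytes.
    return json.dumps(claim, sort_keys=True, separators=(",", ":")).encode("utf-8")


def create_manifest(asset: bytes, actions: list[str]) -> dict:
    """Build a toy provenance manifest: asset hash + edit history + signature."""
    claim = {
        "asset_sha256": hashlib.sha256(asset).hexdigest(),
        "actions": actions,  # e.g. ["captured", "cropped", "ai_enhanced"]
    }
    signature = hmac.new(SIGNING_KEY, _canonical(claim), hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(asset: bytes, manifest: dict) -> bool:
    """Return True only if the claim is untampered AND matches these exact bytes."""
    claim = manifest["claim"]
    expected = hmac.new(SIGNING_KEY, _canonical(claim), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the recorded history was altered after signing
    return hashlib.sha256(asset).hexdigest() == claim["asset_sha256"]


if __name__ == "__main__":
    photo = b"\x89PNG...raw image bytes..."
    m = create_manifest(photo, ["captured", "color_corrected"])
    print(verify_manifest(photo, m))         # True
    print(verify_manifest(photo + b"x", m))  # False: the bytes changed
```

Because the signature covers the asset hash, changing either the pixels or the recorded history breaks verification. What this cannot do is flag content whose manifest was simply stripped, which is why provenance is paired with detection.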

Detection: The Digital Polygraph

While provenance works for new content, detection is the necessary “defense” against the billions of existing unverified assets. Detection uses AI to monitor AI, looking for the tell-tale signs of synthetic manipulation.

- Artifact Analysis: Modern detection engines hunt for biological inconsistencies, such as missing or unnatural blood-flow signals in skin (measured via remote photoplethysmography) or mismatched reflections in pupils, that are difficult for generative models to perfect.

- The Arms Race: Leaders must understand that detection is a moving target. As synthetic models improve, detection artifacts disappear, necessitating a shift toward multi-layered “defense-in-depth” strategies that look for behavioral anomalies rather than just visual ones.
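The biological-signal idea can be illustrated with a toy plausibility check in the spirit of remote photoplethysmography (rPPG): a real face shows a faint periodic color change at the heart rate, so the average green-channel value of a skin region over time should have its strongest frequency inside a human pulse band. This sketch assumes you already have one mean green value per video frame (face tracking and region selection are out of scope), uses an assumed band of 0.7 to 4 Hz (roughly 42 to 240 beats per minute), and computes a naive discrete Fourier transform. Production detectors are far more sophisticated, and, as the arms-race point notes, generators can learn to mimic such signals.

```python
import math


def dominant_frequency(samples: list[float], fps: float) -> float:
    """Return the frequency (Hz) with the largest DFT magnitude, ignoring DC."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the constant skin tone
    best_freq, best_power = 0.0, -1.0
    for k in range(1, n // 2 + 1):  # positive frequencies only
        re = sum(x * math.cos(2 * math.pi * k * t / n) for t, x in enumerate(centered))
        im = -sum(x * math.sin(2 * math.pi * k * t / n) for t, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = k * fps / n, power
    return best_freq


def pulse_is_plausible(green_means: list[float], fps: float,
                       low_hz: float = 0.7, high_hz: float = 4.0) -> bool:
    """Toy check: does the strongest periodic component fall in a human pulse band?"""
    return low_hz <= dominant_frequency(green_means, fps) <= high_hz


if __name__ == "__main__":
    fps = 30.0
    frames = 300  # 10 seconds of video
    # Simulated real face: tiny 1.2 Hz (72 bpm) oscillation on a constant skin tone.
    real = [120 + 0.5 * math.sin(2 * math.pi * 1.2 * i / fps) for i in range(frames)]
    # Simulated synthetic face: 7 Hz flicker, outside the assumed pulse band.
    fake = [120 + 0.5 * math.sin(2 * math.pi * 7.0 * i / fps) for i in range(frames)]
    print(pulse_is_plausible(real, fps))  # True
    print(pulse_is_plausible(fake, fps))  # False
```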

Watermarking and Fingerprinting

These technologies serve as the connective tissue between provenance and detection.

- Invisible Watermarking: Embedding imperceptible signals into content, designed to survive common transformations such as compression, cropping, or screenshots. This allows brands to “claim” their official communications even when they are reshared in low-trust environments.

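- Digital Fingerprinting: Computing a compact mathematical signature of a file to track its distribution and detect unauthorized tampering or AI-driven remixing by third parties. An exact cryptographic hash changes if even one byte is altered, while a perceptual fingerprint is designed to match near-identical copies across re-encodings.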
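The two kinds of fingerprint behave very differently, and a short sketch makes the distinction visible. SHA-256 (via Python’s hashlib) changes completely when any pixel value changes, which suits tamper detection, while a simple “average hash” (aHash), a basic perceptual-hashing technique, stays identical under a uniform brightness shift, which suits tracking copies. The 4x4 grayscale grid is a hypothetical stand-in for a downscaled image; real systems resize images first and use more robust perceptual hashes.

```python
import hashlib


def crypto_fingerprint(pixels: list[list[int]]) -> str:
    """Exact fingerprint: any change to any pixel yields a different digest."""
    return hashlib.sha256(bytes(p for row in pixels for p in row)).hexdigest()


def average_hash(pixels: list[list[int]]) -> int:
    """Perceptual fingerprint (aHash): one bit per pixel, set if above the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits; a small distance suggests the same underlying image."""
    return bin(a ^ b).count("1")


if __name__ == "__main__":
    # Hypothetical 4x4 grayscale thumbnail (values 0-255).
    original = [
        [10, 20, 200, 210],
        [15, 25, 205, 215],
        [12, 22, 198, 220],
        [11, 21, 202, 212],
    ]
    # The same image re-shared slightly brighter, e.g. after a platform re-encode.
    brighter = [[p + 5 for p in row] for row in original]

    print(crypto_fingerprint(original) == crypto_fingerprint(brighter))  # False
    print(hamming(average_hash(original), average_hash(brighter)))       # 0
```

A reasonable design is to store both: the exact hash to show that an official file is unaltered, and the perceptual hash to find re-uploads and lightly edited copies in the wild.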

Building a Truth-Literate Culture

Technology alone cannot solve the trust crisis. True organizational resilience requires a fundamental shift in how your workforce perceives and interacts with information. Building a “Truth-Literate” culture means moving beyond passive skepticism—which often leads to cynicism and paralysis—toward active verification.

Upskilling for the “Post-Truth” Workplace

In a world where high-fidelity fakes are ubiquitous, we must equip our teams with the cognitive tools to navigate ambiguity. This isn’t just about training people to spot deepfakes; it’s about fostering a mindset of “Zero-Trust Content.”

- Critical Inquiry: Teaching employees to evaluate the source, the medium, and the intent behind every interaction.

- The Cost of Speed: Encouraging a “pause” in decision-making when dealing with high-stakes digital assets, ensuring that the pressure for real-time response doesn’t bypass necessary verification protocols.

Operationalizing Veracity: Truth as a Workflow

Verification must move from an afterthought to a core component of the content lifecycle. Whether it is a marketing campaign, a CEO’s internal video address, or an HR training module, truth must be “baked in” from the start.

- Verification Checkpoints: Integrating automated and human-in-the-loop verification steps into your creative and communications pipelines.

- Provenance-First Creation: Standardizing the use of tools that automatically generate content credentials at the moment of creation, ensuring your internal assets are “born authentic.”
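One way to picture truth as a workflow is a publishing gate that runs automated checkpoints in order and routes anything uncertain to a human reviewer. The sketch is hypothetical throughout: the Asset fields, the checkpoint order, and the 0.5 detection threshold are illustrative assumptions, and in practice the synthetic-media score would come from whichever detection tool an organization selects.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PUBLISH = "publish"
    HUMAN_REVIEW = "human_review"
    BLOCK = "block"


@dataclass
class Asset:
    # Hypothetical fields an upstream pipeline would populate.
    name: str
    has_valid_credentials: bool  # result of a provenance / manifest check
    synthetic_score: float       # 0.0 = likely authentic, 1.0 = likely synthetic
    discloses_ai_use: bool       # is any AI enhancement labeled for the audience?
    high_stakes: bool            # CEO address, financial data, etc.


def verification_gate(asset: Asset, threshold: float = 0.5) -> Decision:
    """Apply checkpoints from most to least severe; the first one that fires decides."""
    # Checkpoint 1 (detection): likely-synthetic content without disclosure is blocked.
    if asset.synthetic_score >= threshold and not asset.discloses_ai_use:
        return Decision.BLOCK
    # Checkpoint 2 (provenance): missing credentials means a person must investigate.
    if not asset.has_valid_credentials:
        return Decision.HUMAN_REVIEW
    # Checkpoint 3 (the deliberate "pause"): high-stakes assets always get human sign-off.
    if asset.high_stakes:
        return Decision.HUMAN_REVIEW
    return Decision.PUBLISH


if __name__ == "__main__":
    ceo_video = Asset("ceo_update.mp4", True, 0.1, False, True)
    promo = Asset("spring_promo.png", True, 0.9, True, False)
    clip = Asset("unverified_clip.mp4", False, 0.95, False, True)
    for a in (ceo_video, promo, clip):
        print(a.name, verification_gate(a).value)
    # ceo_update.mp4 human_review
    # spring_promo.png publish
    # unverified_clip.mp4 block
```

Ordering the checkpoints by severity keeps the logic auditable: every outcome traces back to exactly one rule, which makes the policy easier for different departments to review together.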

Closing the Governance Gap

The most significant risk to an organization is often the lack of alignment between departments. Truth Literacy requires a unified front that bridges the traditional silos of Legal, IT, and Customer Experience (CX).

- The Unified Policy: Developing a clear, cross-functional charter on how your organization uses synthetic media, how it discloses that usage, and how it responds to “synthetic attacks” on the brand.

- Stakeholder Alignment: Ensuring that the Legal team understands the technical capabilities of provenance, while the CX team understands the ethical boundaries of AI-driven engagement.

The Verification Landscape: Leading Companies and Startups

For leaders to move from awareness to action, it is essential to understand the vendor ecosystem. The market for “Truth Tech” is currently bifurcating into two distinct categories: Shields (technologies that detect and block synthetic threats) and Certificates (technologies that prove an asset’s authentic origin).

The following table outlines the key players and the specific organizational challenges they address:

| Category | Key Players | What They Solve |
|---|---|---|
| Enterprise Provenance | Adobe (CAI), Truepic, Microsoft | Implementing “Content Credentials” to provide a tamper-evident history of edits and origins for digital assets. |
| Deepfake Detection | Reality Defender, Sentinel, Pindrop | Real-time analysis to detect synthetic audio and video in high-stakes environments like banking and media. |
| Strategic Verification | NewsGuard, Factmata | Providing “Trust Scores” and contextual intelligence for data sources and information cycles. |
| Forensic Integrity | Attestiv, Sensity AI | Authenticating photos and videos for insurance, legal, and forensic applications where evidence tampering is a risk. |
| Authentication Infrastructure | Digimarc, Sony | Invisible digital watermarking and sensor-level verification at the point of capture (e.g., in cameras). |

Choosing Your Partners

When evaluating these vendors, leaders should not look for a “silver bullet” but rather a defense-in-depth strategy. A robust truth infrastructure requires both a “hardened” creation process (provenance) and an “intelligent” perimeter (detection).

- Interoperability: Ensure the technology adheres to open standards like C2PA, so your verified assets are recognized across the global digital ecosystem.

- Scalability: Look for solutions that can integrate directly into your existing CMS, CRM, and communication platforms without adding significant latency to the user experience.

- Ethical Alignment: Partner with companies that prioritize user privacy and the ethical use of metadata, ensuring that in your quest for truth, you do not compromise human agency.

The Strategic Roadmap: Moving from Reaction to Resilience

Transitioning an organization from a state of reactive skepticism to one of proactive resilience does not happen by accident. It requires a structured, phased approach that aligns your technical capabilities with your cultural values. This roadmap provides the high-level steps necessary to secure your “Experience Integrity.”

Phase 1: The Audit—Assessing Your Vulnerability

Before you can defend your truth, you must understand where it is most likely to be attacked. This phase involves a comprehensive assessment of your “Truth Surface Area.”

- Identifying Friction Points: Mapping the customer and employee journeys to identify where unverified information could cause the most damage (e.g., automated customer support, financial reporting, or executive communications).

- The “Shadow AI” Audit: Understanding how your teams are currently using generative tools and identifying where synthetic content is being created without provenance or oversight.

Phase 2: The Infrastructure—Hardening the Foundation

Once the vulnerabilities are mapped, the focus shifts to building the technical and procedural “shields” that will protect the organization.

- Standardizing Provenance: Adopting open standards like C2PA across your content creation stack. This ensures that every official asset your organization produces carries a tamper-evident “Birth Certificate.”

- Vendor Selection: Curating a stack of verification technologies—choosing the right mix of detection and provenance tools that integrate seamlessly with your existing infrastructure.

- The “Stable Spine” of Data: Ensuring your internal data repositories are audited and secure, serving as the “Single Source of Truth” that feeds your agentic AI models.

Phase 3: The Disclosure Policy—The Transparency Standard

The final phase is about setting the standard for how you interact with the world. In an age of synthetic reality, radical transparency is your greatest competitive advantage.

- Explicit Disclosure: Establishing clear guidelines for when and how you disclose the use of AI or synthetic enhancements. This builds trust by removing the “guessing game” for the user.

- The Incident Response Playbook: Developing a specific protocol for responding to “synthetic attacks”—such as deepfakes of leadership or spoofed brand assets—ensuring your team can move from detection to debunking in minutes, not days.

- Continuous Learning: Treating Truth Literacy as a living capability, with regular updates to training and technology as the AI landscape continues to evolve.

Conclusion: Leading with Integrity

As we look toward the horizon of the next decade, one thing is certain: technology will continue to accelerate our ability to create convincing illusions. However, while technology can verify data, only leaders can verify intent. In the end, Truth Literacy is not just a technical hurdle to clear—it is a human-centered commitment to the people we serve.

The Human Element in a Synthetic World

We must remember that every data point and every digital asset represents a touchpoint with a human being. When we invest in verification technology, we aren’t just protecting a file; we are protecting the sanctity of the human experience. As leaders, our role is to ensure that as our tools become more “agentic” and autonomous, they remain tethered to our core human values of honesty and transparency.

The Competitive Edge of the Authentic

The future belongs to the “Real.” In a marketplace flooded with infinite, low-cost fakes, authenticity becomes the ultimate luxury good and the most durable competitive advantage. The brands that win in 2026 and beyond will be those that can definitively prove their “realness.” By adopting the strategies of provenance, building a truth-literate culture, and leading with radical transparency, you aren’t just avoiding a crisis—you are capturing the highest possible market share of human trust.

Stay curious, stay skeptical where necessary, but above all, stay human. The architecture of the future is built on the foundations of truth we lay today.

Frequently Asked Questions

1. What is the fundamental difference between content provenance and deepfake detection?

Think of provenance as a digital birth certificate; it uses standards like C2PA to cryptographically prove where an asset came from and how it was edited. Detection, on the other hand, is like a digital polygraph; it uses AI to analyze existing content for “artifacts” or inconsistencies that suggest it was synthetically generated. Provenance focuses on proving the truth, while detection focuses on catching the lie.

2. Why is “Truth Literacy” considered a business imperative rather than just a technical skill?

In an era of “Experience Integrity,” a brand’s value is tied directly to its perceived authenticity. If a customer realizes they’ve been misled by an unverified synthetic interaction (what I call CX Betrayal), the damage to trust can be lasting. Truth Literacy ensures that leaders and teams can identify these risks, protecting the organization from reputational damage and legal liability.

3. How can an organization begin adopting C2PA standards today?

The first step is a Truth Surface Audit to identify where you create and distribute high-stakes content. From there, you should adopt tools from providers like Adobe or Microsoft that already support “Content Credentials.” By embedding these manifests into your assets at the point of creation, you ensure your official communications are “born authentic” and verifiable across the global digital ecosystem.

Disclaimer: This article speculates on the potential future applications of cutting-edge scientific research. While based on current scientific understanding, the practical realization of these concepts may vary in timeline and feasibility and is subject to ongoing research and development.

Image credits: ChatGPT

Sign up here to get Human-Centered Change & Innovation Weekly delivered to your inbox every week.