LAST UPDATED: March 4, 2026 at 11:14 AM

GUEST POST from Art Inteligencia

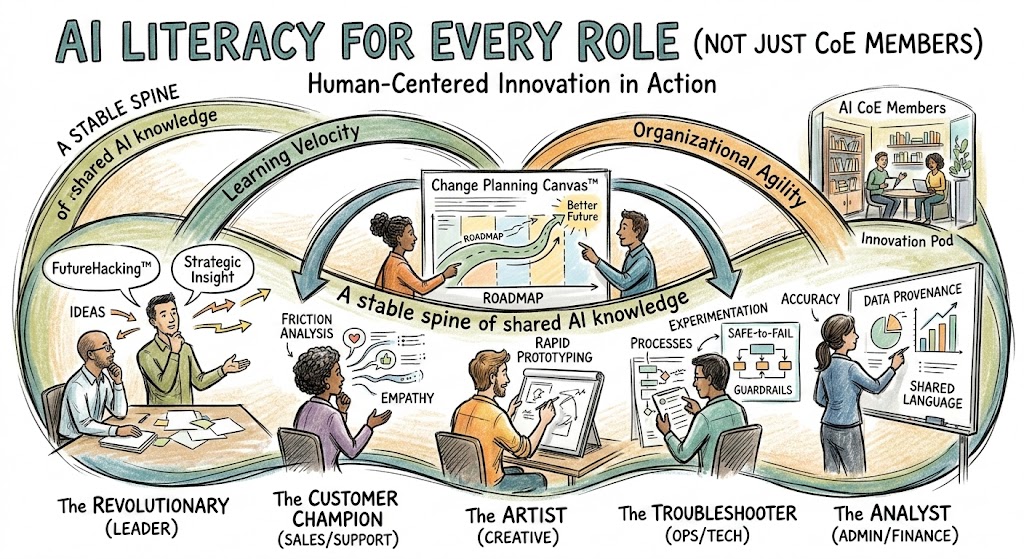

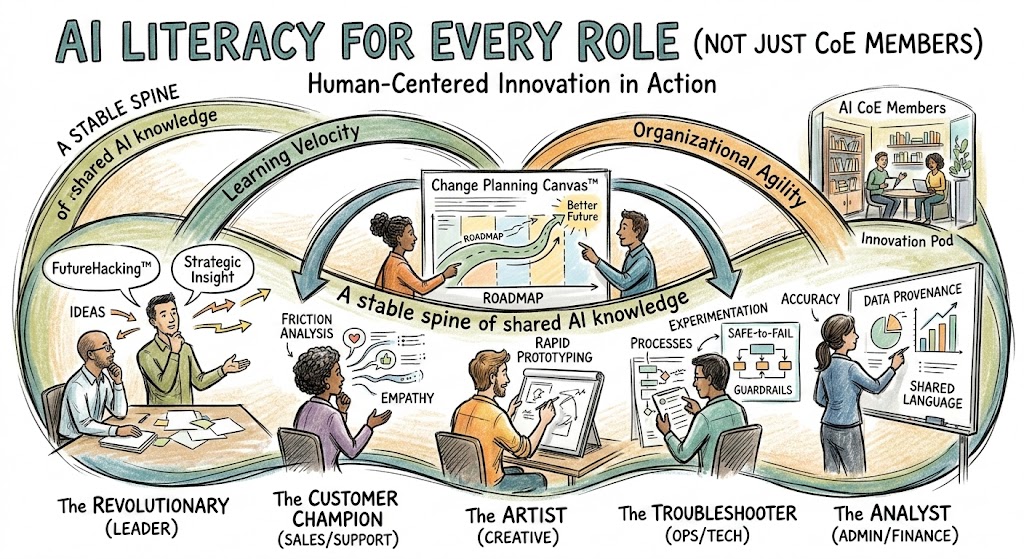

I. The Myth of the “AI Specialist” Silo

In my years helping organizations navigate the Human-Centered Innovation™ landscape, I’ve seen a recurring ghost in the machine: the belief that innovation belongs in a locked room. We saw it in the early days of “Digital Transformation,” and we are seeing it again with Artificial Intelligence. Many leaders are rushing to build an AI Center of Excellence (CoE), thinking that by gathering a few specialists in a silo, they have “solved” the AI problem.

This is a dangerous misunderstanding of how organizational agility works. When you confine AI literacy to a CoE, you create a catastrophic “Assumption Gap.” The specialists understand the math, but they don’t understand the friction of the front-line salesperson or the nuanced empathy required by a customer success lead.

“Software — and by extension, AI — is far too important to be left solely to the software people.”

If the rest of your workforce remains AI-illiterate, your CoE becomes an island. You end up with “Rigid Decay,” where the specialist team builds high-tech solutions that the rest of the organization is either too afraid to use or too uninformed to integrate. To move from a static “project” mindset to a living Inherent Capability, we must democratize the language of AI.

The goal isn’t to turn every accountant into a data scientist; it is to ensure every accountant knows how to collaborate with one. We need to stop treating AI as a “specialty” and start treating it as a foundational layer of the Change Planning Canvas™.

II. Defining AI Literacy: The “Stable Spine” of Knowledge

In any Human-Centered Innovation™ initiative, we must distinguish between “tool-fluency” and “literacy.” Knowing how to type a prompt into a chatbot is a fleeting skill; understanding the logic of Generative AI and its impact on your specific value chain is a durable capability. I call this the “Stable Spine” — the core set of principles that stay upright even as the technology shifts beneath our feet.

True AI literacy for the broader workforce isn’t about learning Python. It’s about building a Common Language across the organization. When Marketing, HR, and Operations speak the same dialect of “Data Provenance,” “Hallucination Risks,” and “Iterative Refinement,” the Change Planning Canvas™ actually begins to work.

- Beyond Tool-Picking: We must move from “What tool should I use?” to “What problem am I solving?” This reduces “Cognitive Clutter” and ensures we aren’t just automating bad processes.

- Understanding Causal AI: Every employee should grasp the “Why” behind the output. If you don’t understand the logic, you can’t provide the “Human-in-the-Loop” oversight that prevents catastrophic brand or operational errors.

- The Ethics of Insight: Literacy includes recognizing bias. We must learn the lessons of the past, such as Microsoft’s “Tay” chatbot, to ensure our AI implementations don’t scale our existing organizational prejudices.

By establishing this spine, we move from “Experience Narcissism” (assuming our old ways are best) to a state of Marked Flexibility. We aren’t just using AI; we are integrating it into the very marrow of how we innovate.

III. The Role-Based AI “Squad” Strategy

One size does not fit all in the Change Planning Canvas™. To democratize AI literacy, we must translate it into the specific “Value-Add” for different roles. When we move beyond the CoE, we empower individuals to become part of an Innovation Squad, each using AI as a “Force Multiplier” for their unique perspective.

| The Persona | The AI “Superpower” | Human-Centered Outcome |
| --- | --- | --- |
| The Revolutionary (Leadership) | Strategic “FutureHacking™” and Trend Synthesis. | Reducing “Time-to-Insight” to make bolder, data-backed bets. |
| The Customer Champion (Front Line) | Real-time Friction Analysis and Sentiment Mapping. | Closing the “Experience Narcissism” gap by truly hearing the customer. |
| The Artist & Troubleshooter (Technical/Creative) | Rapid Prototyping and “Safe-to-Fail” Simulation. | Increasing “Learning Velocity” without risking the core business. |

By equipping The Revolutionary with AI literacy, we ensure they aren’t just chasing “Shiny Object Syndrome.” Instead, they are using AI to identify where the organization can be Markedly Flexible.

Meanwhile, The Customer Champion uses AI to sift through the “Cognitive Clutter” of thousands of feedback points, identifying the one intervention that will actually move the needle on customer loyalty. This isn’t just “using a tool” — it’s a deliberate Human-Centered Intervention to create a better future for the user.

IV. Overcoming the “70% Failure Rate” in AI Adoption

Statistics in the change management world are sobering: by a widely cited estimate, nearly 70% of change initiatives fail. When we layer the complexity of Artificial Intelligence onto that, the risk of “Rigid Decay” skyrockets. To beat these odds, we must look past the algorithms and focus on the PCC Framework: Psychology, Capability, and Capacity.

1. Addressing the Psychology of “Replacement Anxiety”

If an employee perceives AI as a threat to their livelihood, they will subconsciously (or consciously) sabotage its adoption. We must reframe AI as a tool for “Subjective Time Expansion.” By automating the mundane, we aren’t replacing the human; we are freeing them to perform the high-value, high-empathy tasks that AI cannot touch.

2. Clearing the “Cognitive Clutter”

AI literacy helps teams identify where they are drowning in “Cognitive Clutter” — those low-value tasks that prevent them from reaching a state of flow. Literacy allows a worker to say, “AI can handle the data synthesis here, so I can focus on the strategic intervention.”

3. Establishing “Safe-to-Fail” Zones

Organizational Agility requires a culture where experimentation is the norm. We must reward Learning Velocity. If a team tries an AI-driven workflow and it fails, but they document why and share that insight across the Change Planning Canvas™, that is a win for the entire organization.

“The goal of AI literacy is to move from fear of the unknown to the mastery of a new medium.”

By visualizing these change hurdles using collaborative tools, we ensure the entire “Squad” is literally on the same page. We aren’t just pushing a new tool; we are performing a Deliberate Intervention to evolve the company culture.

V. Moving from Theory to Practice: The Implementation Checklist

To avoid “Rigid Decay,” we must treat AI literacy as a living organism, not a one-time workshop. This checklist is designed to integrate AI into your Change Planning Canvas™, ensuring that the entire organization moves at the same Learning Velocity.

1. Audit for “Marked Flexibility”

Every department should identify three legacy processes that are currently “rigid.” Ask: “If we had an infinite amount of data synthesis capability, how would this process change?” This identifies where AI literacy can provide the most immediate Human-Centered lift.

2. Deploy “Safe-to-Fail” Micro-Pilots

Don’t wait for a company-wide rollout. Encourage Innovation Squads to run two-week experiments. The goal isn’t necessarily a “win,” but a documented insight. If the pilot fails, but the team learns something about their data quality, that is a successful intervention.

3. Establish the “Shared Vocabulary” Baseline

Create a “No-Jargon Zone.” Ensure that everyone from the CEO to the front-line intern understands the basics of Prompt Engineering, Algorithmic Bias, and Data Privacy. When everyone speaks the same language, the “Assumption Gap” disappears.

4. Visualize the Flow

Use collaborative tools to map out how AI-augmented work flows through the company. If the AI output stays in a silo, it’s useless. We must visualize how an AI-generated insight in Marketing triggers a Deliberate Intervention in Sales or Product Development.

“The future belongs to the organizations that can learn as fast as their tools evolve.”

By following this checklist, you aren’t just “buying AI” — you are building a Future-Ready culture that is Markedly Flexible and deeply human.

VI. Conclusion: The Future is Human-Led, AI-Augmented

Innovation is never about the technology itself; it is a Deliberate Intervention to create a better future. When we democratize AI literacy, we aren’t just teaching a new skill — we are dismantling “Rigid Decay” and replacing it with Organizational Agility.

By moving AI out of the CoE and into every role, we empower the Customer Champion, the Revolutionary, and the Troubleshooter to speak a Common Language. We bridge the “Assumption Gap” and ensure that our digital transformation is anchored in human empathy.

“The question is not how intelligent the AI is, but how intelligently we use it to expand our human potential.”

The organizations that thrive in this era will be those that prioritize Learning Velocity over static expertise. They will be the ones that use the Change Planning Canvas™ to visualize a future where AI handles the “spin” so that humans can provide the “lift.”

The future is not a destination we reach; it is a state of Marked Flexibility we inhabit every day. Let’s stop building silos and start building a literate, empowered, and innovative workforce.

Frequently Asked Questions: AI Literacy for All

1. Why should AI literacy extend beyond the Center of Excellence (CoE)?

Confining AI knowledge to a CoE creates “Rigid Decay,” where specialists build tools that the broader workforce cannot or will not use. Extending literacy to every role bridges the Assumption Gap, ensuring that AI solutions are human-centered and solve real-world friction rather than just adding to “Cognitive Clutter.”

2. Does every employee need to learn how to code or build AI models?

No. True AI literacy is about building a “Stable Spine” of knowledge—understanding the “why” and “how” of AI logic, data ethics, and Human-in-the-Loop oversight. The goal is Organizational Agility, where every “Innovation Squad” member has the common language to collaborate on the Change Planning Canvas™.

3. What is the immediate benefit of role-based AI literacy?

The primary benefit is “Subjective Time Expansion.” When every role, from the Revolutionary to the Customer Champion, understands how to use AI for data synthesis and rapid prototyping, they increase their Learning Velocity and clear away the “Cognitive Clutter” of low-value tasks. This allows the human workforce to focus on high-empathy, high-strategy interventions that AI cannot replicate.

Image credit: Google Gemini

Sign up here to get Human-Centered Change & Innovation Weekly delivered to your inbox every week.
