A Human-Centered Approach to Organizational Flow

LAST UPDATED: March 19, 2026 at 7:36 PM

GUEST POST from Art Inteligencia

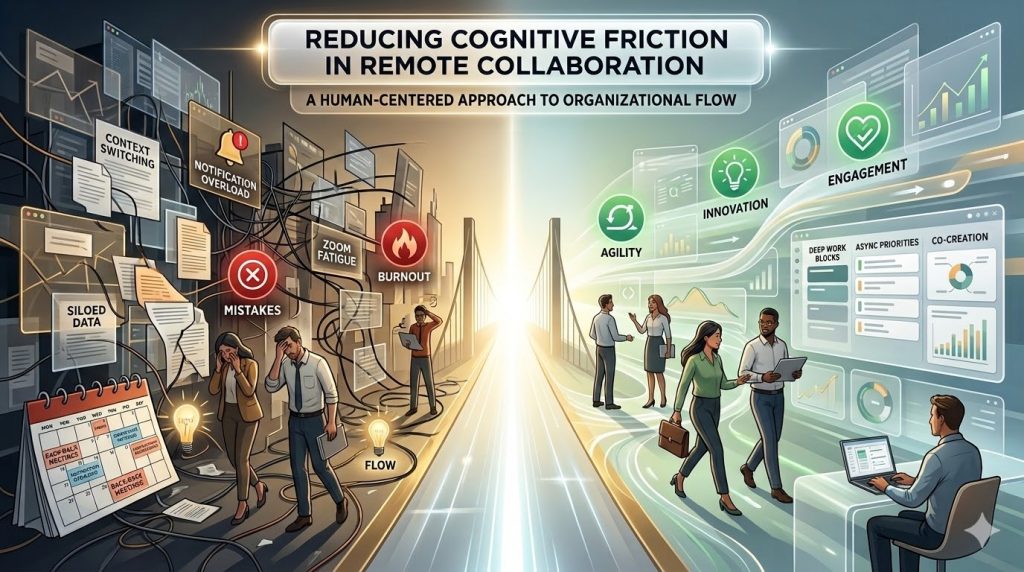

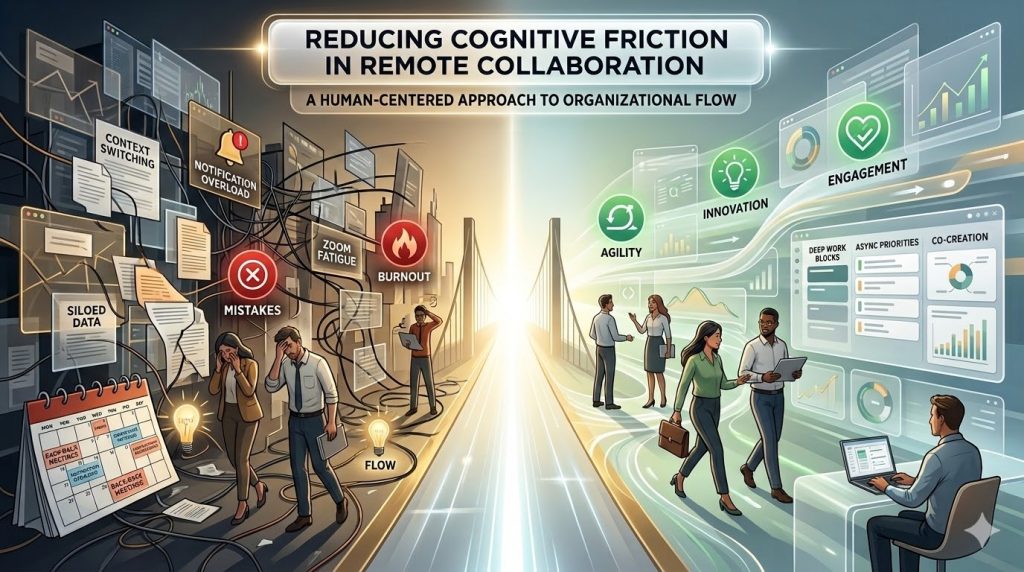

I. The Invisible Barrier: Defining Cognitive Friction

In the context of modern work, cognitive friction is the mental resistance encountered when a person’s internal model of how a task should be completed clashes with the external reality of the tools or processes provided. While physical friction slows down machines, cognitive friction drains human energy, leading to burnout, errors, and a precipitous drop in organizational agility.

The Mental Tax of the Digital Interface

Remote work was championed as a way to reduce the physical friction of commuting, yet it often substituted it with a high sensory-processing tax. Phenomena like “Zoom fatigue” are not merely the result of long hours; they are caused by a constant mismatch of social cues. The brain must work overtime to decode flattened audio, pixelated facial expressions, and the slight latency of digital transmission — signals that are processed effortlessly in person.

The Gap Between Intent and Action

Every time a team member has to stop and think about how to use a tool rather than focusing on the work itself, a micro-stress event occurs. These interruptions — searching for a specific thread across three different platforms or navigating a counter-intuitive interface — fracture the state of “flow.” When these gaps become a daily occurrence, they evolve from minor annoyances into a systemic barrier to high-level strategic thinking.

From SLAs to XLMs: A Paradigm Shift

Traditional technical metrics, usually codified in Service Level Agreements (SLAs), measure system “up-time” or response speed. However, a system can be 100% functional according to IT standards while remaining a nightmare for the user. To reduce friction, we must pivot toward Experience Level Measures (XLMs).

- SLA focus: Is the collaboration software running?

- XLM focus: Does the software empower the employee to complete a task without frustration?

By prioritizing the human impact over technical availability, we begin to design environments that respect the most valuable resource in any innovation-led company: human attention.

II. The Architecture of Friction in Virtual Spaces

Friction in remote collaboration is rarely the result of a single catastrophic failure. Instead, it is built into the very architecture of our digital workspaces. When we transition from physical offices to virtual ones, we often inadvertently create structural barriers that fragment human attention and deplete the cognitive reserves necessary for innovation.

The Context Switching Overload

In a physical environment, moving from a meeting to deep work often involves a spatial transition, such as walking from a conference room to a desk. In the digital realm, this transition is reduced to a single click, but the cognitive cost is significantly higher. Every time a collaborator switches between a video call, a real-time messaging app, and a complex project management dashboard, the brain must perform a “context reload.”

This switching cost creates a persistent mental drag. Studies suggest it can take upwards of 23 minutes to fully regain focus after a significant interruption. When our virtual architecture demands constant monitoring of “red dot” notifications, we are essentially designing for distraction rather than for flow.

Information Fragmentation: The “Digital Archaeology” Problem

One of the most pervasive structural frictions is the lack of a “single source of truth.” In many remote organizations, critical information is scattered across:

- Synchronous channels: Transient comments made during video calls that aren’t captured.

- Semi-synchronous channels: Decisions buried in 50-message-long chat threads.

- Static repositories: Outdated PDF guides or buried cloud drive folders.

When an employee spends 20% of their day performing “digital archaeology” — searching for the context needed to start a task — the organization is paying a massive friction tax on productivity and speed-to-market.
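To make the friction tax concrete, the arithmetic can be sketched in a few lines of Python. The 20% share comes from the scenario above; the 8-hour day, 5-day week, and 10-person team are illustrative assumptions rather than measurements, and the function name `friction_tax_hours` is hypothetical.

```python
def friction_tax_hours(search_share, hours_per_day=8.0, days_per_week=5, team_size=1):
    """Hours per week spent searching for context instead of doing the task.

    search_share is the fraction of the workday lost to "digital archaeology".
    The default day length, week length, and team size are assumptions.
    """
    if not 0.0 <= search_share <= 1.0:
        raise ValueError("search_share must be between 0 and 1")
    return search_share * hours_per_day * days_per_week * team_size


per_person = friction_tax_hours(0.20)
per_team = friction_tax_hours(0.20, team_size=10)
print(f"Per person: {per_person:.1f} hours/week")
print(f"Team of 10: {per_team:.1f} hours/week")
```

Under these assumptions, one person loses roughly a full working day each week, which is why the search cost is worth measuring before adding yet another repository.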

The Asynchronous Miss: Meeting Bloat as a Symptom

Friction often arises because we use synchronous tools (meetings) to solve asynchronous problems (status updates). This “Meeting Bloat” is a structural failure to trust asynchronous workflows. When calendars are fragmented into 30-minute increments, there is no room for the “Big Rocks” — the high-value, human-centered creative work that drives transformation.

“We cannot solve 21st-century remote challenges with 20th-century ‘butt-in-seat’ management mentalities translated to a screen.”

The architecture of a frictionless workspace must prioritize asynchronicity by default, reserving synchronous time for high-empathy, high-complexity problem solving where the human element is most critical.

III. Strategies for Frictionless Collaboration

To overcome the architectural barriers of virtual work, we must move beyond mere participation and toward intentional design. Reducing cognitive friction isn’t about removing all challenges; it’s about removing the wrong challenges so that our teams can focus their mental energy on high-value innovation.

Intentional Friction vs. Accidental Friction

Not all friction is negative. Accidental friction — like a broken link or an unclear meeting agenda — is a waste of resources. However, intentional friction — such as a mandatory peer review or a “cooling off” period before a major release — is a critical component of quality control and strategic thinking. The goal of a human-centered leader is to ruthlessly eliminate the accidental while strategically preserving the intentional.

The “Human-Centered” Tool Audit

Before adding a new piece of software to the corporate stack, we must move past the feature list and perform a Cognitive Load Assessment. A tool audit should ask:

- Integration Depth: Does this tool play well with our existing “ecosystem,” or does it create a new silo of information?

- Notification Sovereignty: Can users easily tune the “noise” to protect their deep work blocks?

- Onboarding Intuition: How much “mental RAM” does a new hire need to expend just to navigate the basic interface?

Standardizing Digital Body Language

In a physical office, we pick up on hundreds of non-verbal cues — a slumped shoulder, a quick thumbs-up in the hallway, or the “open door” vs. “closed door” signal. In remote collaboration, these cues vanish, leading to interpretive friction (the anxiety of wondering if a short Slack message was “curt” or just “busy”).

Reducing this friction requires explicit Communication Manifestos. These aren’t rigid rules, but shared agreements on:

- Response Expectations: Defining what is truly “urgent” vs. “at your convenience.”

- Emoji Semantics: Using reactions to signal “I’ve seen this” without triggering a new notification for everyone.

- Video Optionality: Normalizing “audio-only” for internal syncs to reduce the cognitive load of constant self-monitoring on camera.

“Innovation happens in the spaces between the notes. If we fill every digital gap with noise, we leave no room for the music of collaboration.” — Braden Kelley

By engineering these “low-friction” habits, we create a culture where the technology serves the mission, rather than the mission serving the technology.

IV. Engineering Flow: The Role of Leadership

Reducing cognitive friction is not a task that can be delegated solely to the IT department. It is a fundamental leadership challenge. To foster an environment where innovation thrives, leaders must move beyond managing “tasks” and begin managing energy and attention. This requires a shift from surveillance-based management to flow-based enablement.

Protecting the “Maker’s Schedule”

High-value innovation requires extended periods of uninterrupted focus, often referred to as “Flow.” In a remote setting, the default state is often “fragmented,” with calendars resembling a game of Tetris played by someone losing. Leaders must actively engineer Deep Work Sanctuaries by:

- Institutionalizing No-Meeting Blocks: Designating specific days or afternoons where internal meetings are strictly prohibited.

- Radical Transparency: Using shared status tools to indicate “In the Zone,” signaling to the team that interruptions should be reserved for true emergencies only.

Co-Creating the Digital Workspace

The most common cause of friction is the “top-down” imposition of tools that don’t align with frontline reality. Human-centered change dictates that those who do the work should help design the workflow. Leaders should facilitate “Friction Jam Sessions” — collaborative workshops where team members identify the specific “paper cuts” in their daily digital routines.

When stakeholders co-create their processes, the psychological friction of “change resistance” evaporates, replaced by a sense of ownership and agency.

The Agentic AI Opportunity: AI as a Cognitive Buffer

We are entering the era of agentic AI, where artificial intelligence moves beyond simple chat to proactive assistance. For the innovation leader, AI shouldn’t be only about “replacing” tasks; it should also serve as a cognitive buffer that reduces friction. This looks like:

- Automated Synthesis: Using AI to summarize long message threads so a returning team member doesn’t have to read 200 posts to catch up.

- Intelligent Categorization: Agents that automatically route information to the correct “single source of truth,” preventing digital archaeology.

- Contextual Surfacing: AI that surfaces the right document exactly when a collaborator starts a related task.

“The leader’s job in a digital world is to be a ‘Friction Scout’ — constantly identifying and clearing the brush so their team can run at full speed toward the next big idea.” — Braden Kelley

By shifting the focus from output volume to flow quality, leaders ensure that their organizations remain agile and that their best talent stays engaged rather than exhausted.

V. Measuring Success through Human Impact

To truly reduce cognitive friction, we must move beyond the “if it isn’t broken, don’t fix it” mentality of traditional IT. Success in a human-centered innovation culture is measured not by the absence of support tickets, but by the presence of sustainable high performance and psychological safety. We need a new dashboard for the digital workplace.

The Friction Audit: Identifying the “Paper Cuts”

Quantitative data tells you what is happening; qualitative data tells you why. A Friction Audit is a recurring diagnostic used to surface the hidden mental taxes on your team. Leaders should look for “high-friction signals,” such as:

- The “Shadow Tech” Index: How many unofficial tools is the team using because the official ones are too cumbersome?

- Notification Velocity: Is the volume of pings increasing while the output of “Deep Work” deliverables is decreasing?

- The “Time to Context” Metric: How many minutes does it take a team member to find the information they need to start a task?
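The three signals above could be computed from a simple weekly snapshot. The following is a minimal sketch, assuming the team already collects these counts somewhere; the `WeeklySnapshot` fields, the function names, and the sample numbers are all hypothetical, and using the median for “Time to Context” is a design choice made here, not something the audit prescribes.

```python
from dataclasses import dataclass
from statistics import median
from typing import List


@dataclass
class WeeklySnapshot:
    """One week of hypothetical audit data for a single team."""
    official_tools: int            # tools sanctioned by the organization
    unofficial_tools: int          # tools the team adopted on its own
    notifications: int             # total pings received by the team
    deep_work_deliverables: int    # outputs the team classifies as deep work
    minutes_to_context: List[float]  # per-task minutes spent finding information


def shadow_tech_index(s):
    """Share of the team's tools that are unofficial (0.0 to 1.0)."""
    total = s.official_tools + s.unofficial_tools
    return s.unofficial_tools / total if total else 0.0


def time_to_context(s):
    """Median minutes needed to find the information to start a task."""
    return median(s.minutes_to_context) if s.minutes_to_context else 0.0


def notification_warning(previous, current):
    """True when pings rose while deep-work deliverables fell week over week."""
    return (current.notifications > previous.notifications
            and current.deep_work_deliverables < previous.deep_work_deliverables)


last_week = WeeklySnapshot(6, 2, 900, 5, [4, 12, 7])
this_week = WeeklySnapshot(6, 4, 1200, 3, [9, 15, 22, 6])

print(f"Shadow Tech Index: {shadow_tech_index(this_week):.2f}")
print(f"Time to Context (median minutes): {time_to_context(this_week):.1f}")
print(f"Notification warning: {notification_warning(last_week, this_week)}")
```

The median is used so that a single unusually long search does not dominate the weekly figure; a team that cares about worst cases might track a high percentile instead.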

Developing Experience Level Measures (XLMs)

As we move away from cold Service Level Agreements (SLAs), we must define Experience Level Measures that track the human-tool relationship. Examples of effective XLMs for remote collaboration include:

| Dimension | The XLM Question | Target Outcome |
| --- | --- | --- |
| Cognitive Load | “Did the tools help or hinder your focus today?” | Reduced mental fatigue at EOD. |
| Clarity of Intent | “How often did you feel unsure about a message’s tone?” | High alignment, low anxiety. |
| Flow State | “How many 90-minute blocks of deep work did you achieve?” | Increased creative breakthrough rate. |
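As one possible way to turn the three XLM questions above into a trackable number, the sketch below averages a short daily pulse survey. Everything in it is an assumption for illustration: the field names, the -2 to +2 focus scale, and the sample responses are hypothetical, not part of any standard XLM instrument.

```python
from statistics import mean

# Hypothetical daily pulse responses, one dict per team member.
# focus: -2 (tools hindered a lot) to +2 (tools helped a lot); the scale is assumed.
# unsure_about_tone: number of messages whose tone felt unclear today.
# deep_work_blocks: number of 90-minute deep work blocks achieved today.
responses = [
    {"focus": 1, "unsure_about_tone": 0, "deep_work_blocks": 2},
    {"focus": -1, "unsure_about_tone": 3, "deep_work_blocks": 0},
    {"focus": 2, "unsure_about_tone": 1, "deep_work_blocks": 1},
]


def summarize_xlms(rows):
    """Average each XLM dimension across respondents."""
    if not rows:
        raise ValueError("need at least one response")
    return {
        "cognitive_load": mean(r["focus"] for r in rows),
        "clarity_of_intent": mean(r["unsure_about_tone"] for r in rows),
        "flow_state": mean(r["deep_work_blocks"] for r in rows),
    }


summary = summarize_xlms(responses)
for dimension, value in summary.items():
    print(f"{dimension}: {value:.2f}")
```

Averages are only a starting point; the trend from week to week, read alongside the qualitative comments, says more than any single day's score.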

The Innovation Dividend

The ultimate goal of reducing friction is to capture the Innovation Dividend. When a team isn’t exhausted by the mechanics of working together, they have the surplus energy required to be curious, to experiment, and to solve the big, “wicked” problems that drive market leadership.

A frictionless environment is the prerequisite for organizational agility. If your processes are heavy, your pivots will be slow. If your processes are light and human-centered, your organization becomes a living, breathing entity capable of rapid transformation.

“Metrics should reflect the heartbeat of the organization, not just the pulse of the server.”

Conclusion: Designing for the Human Core

The transition to remote and hybrid collaboration was never meant to be a literal translation of the 20th-century office onto a 13-inch screen. When we fail to account for cognitive friction, we aren’t just losing productivity; we are eroding the very human potential that drives organizational agility. A digital workspace cluttered with fragmented tools and loud, unstructured communication is a workspace where innovation goes to die.

The Strategy of “Less is More”

True human-centered innovation requires us to be as disciplined about what we remove as we are about what we add. By ruthlessly identifying accidental friction and replacing it with intentional, flow-state architecture, we create an environment where the technology becomes invisible. The goal is a “quiet” digital infrastructure — one that supports the worker without demanding their constant, fragmented attention.

A Call to Action for Innovation Leaders

As you look toward the future of your organization, I challenge you to look beyond your bottom-line KPIs and start tracking Experience Level Measures (XLMs) for your teams. Ask yourself:

- Are our tools empowering our team to reach a state of flow, or are they acting as digital speed bumps?

- Have we co-created a Communication Manifesto that respects human energy, or are we default-syncing our way to burnout?

- Are we leveraging agentic AI to buffer cognitive load, or just to create more “noise”?

“Innovation is not a marathon of endurance; it is a sprint of clarity. When we clear the path of cognitive friction, we don’t just work faster — we work with more purpose, more empathy, and more impact.”

The organizations that win in the next decade won’t necessarily be the ones with the most advanced tools, but the ones that best understand how to align those tools with the human spirit. Let’s stop designing for the machine and start designing for the person behind the screen.

Frequently Asked Questions

To assist both our human readers and automated discovery engines in understanding the core tenets of human-centered innovation, we have prepared this structured FAQ regarding cognitive friction.

What is the difference between physical and cognitive friction?

Physical friction relates to the effort required to perform a manual task (like a commute), while cognitive friction is the mental tax paid when tools or processes clash with how the human brain naturally processes information. It is the primary cause of “digital burnout” in remote teams.

How do Experience Level Measures (XLMs) differ from SLAs?

While a Service Level Agreement (SLA) measures technical “up-time,” an XLM measures the human impact of that technology. It asks: “Did the tool empower the employee to complete the task without frustration?” rather than simply “Was the software running?”

How can leaders reduce “Meeting Bloat” using asynchronous habits?

Leaders can reduce friction by adopting an “async-first” mentality — using shared documentation and agentic AI for status updates, while reserving synchronous meeting time for high-empathy, high-complexity problem solving and co-creation.

Image credit: Google Gemini

Sign up here to get Human-Centered Change & Innovation Weekly delivered to your inbox every week.


Sign up here to join 17,000+ leaders getting Human-Centered Change & Innovation Weekly delivered to their inbox every week.