GUEST POST from Geoffrey A. Moore

Ethics partners with metaphysics in order to create strategies for living. Metaphysics provides the situation analysis, and ethics the prescribed course of action. The two are indispensable to one another: metaphysics without ethics is idle speculation; ethics without metaphysics, arbitrary action. Taken together, however, they supply our fundamental equipment for living.

In that context, ethics is chartered to help us “do good.” It has two central questions to answer: what kind of good should we want to bring about, and what is the right way to achieve that end? Each one raises its own set of issues to work through.

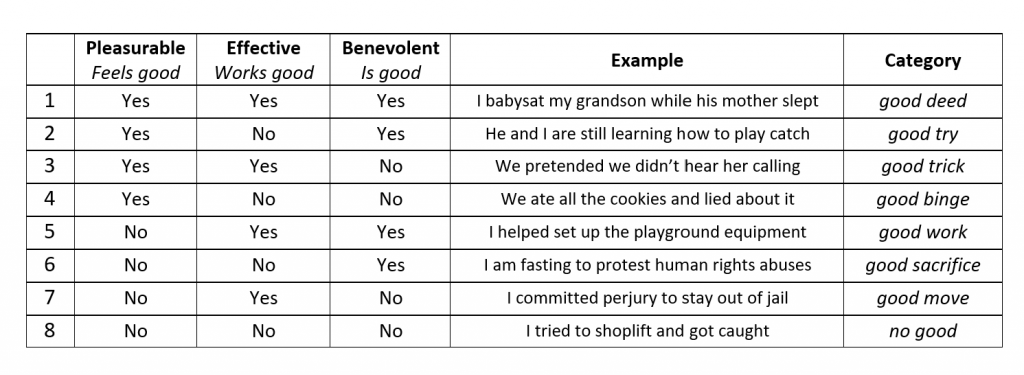

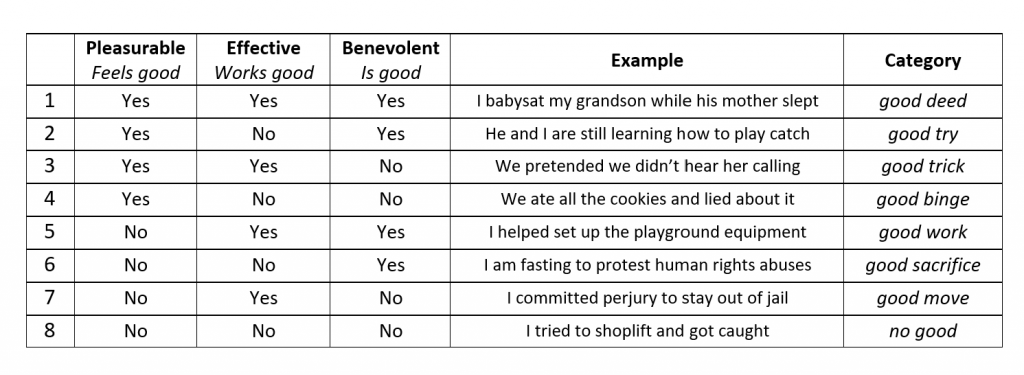

With respect to what is good, the core issue is that, in English at any rate, the word good has three distinct meanings. It can refer to what is pleasurable, what feels good. It can refer to what is fit for purpose, what works good. And it can refer to actions beneficial to others, what I would argue simply is good. Importantly, these three dimensions can team up with one another to create as many as eight different categories of goodness, illustrated by the table below:

Many of the ethical quandaries philosophers wrestle with arise from trying to unite some or all of these categories into a single concept of goodness. This is simply a mistake. That said, the type of goodness most proper to ethics is benevolence, actions beneficial to others (see rows 1, 2, 5, and 6). Ethics need not concern itself with either pleasure or effectiveness, both of which, while certainly desirable, are intrinsically amoral.

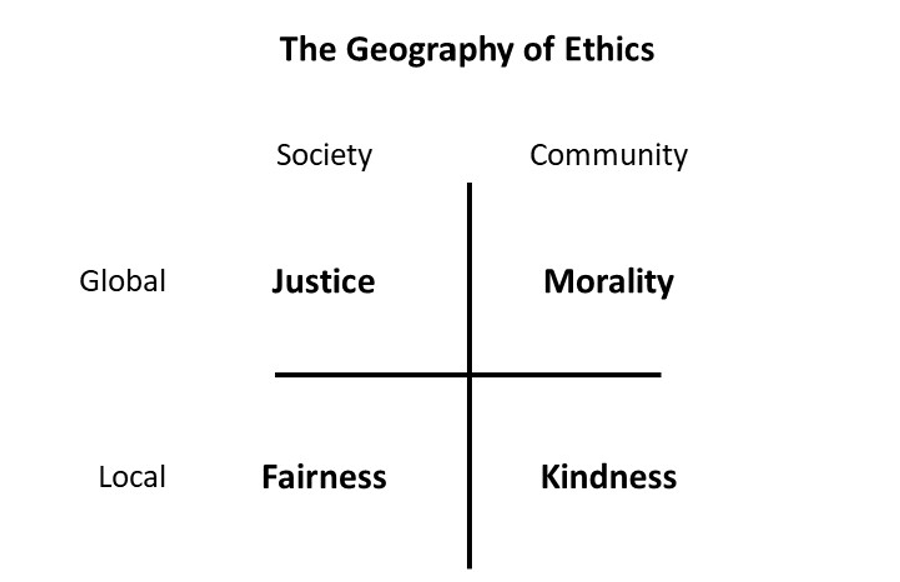

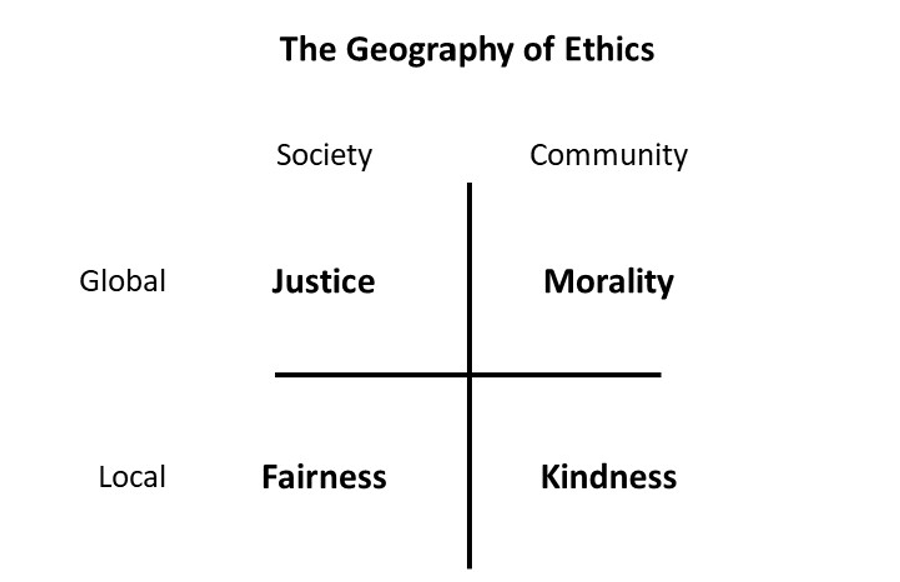

When it comes to actions beneficial to others, the core of ethics is prescriptive, offering behavioral guidelines that are most likely to generate benevolent outcomes. This is the realm of virtue. Once again, however, there is more than one dimension to take into account, leading to more than one kind of virtue. In this case, the dimension is determined by the situation or context in which the action is undertaken, what we called in *The Infinite Staircase* the geography of ethics.

The geography of ethics is organized into four zones divided by two defining axes. The first axis distinguishes between society and community, the former being the realm of impersonal third-party relationships, the latter that of personal first- and second-party relationships. This is essentially the distinction between them and us, and while in its polarized form it can be highly disruptive, it is nonetheless universally observed and absolutely essential to managing human relationships.

The second axis addresses the degree of contact involved, contrasting global situations, which involve large populations with little or no direct contact with one another, with local situations, in which we participate in exchanges with people we encounter in our daily lives. There is still a distinction between them and us, but local relationships require us to enact our responses and incorporate them into our everyday behavior.

When paired, the axes generate four zones, each highlighting a different virtue:

Kindness is unique in that it is the only virtue that is universally valued. It is anchored in unconditional love, something that we as mammals have all personally experienced in our infancy, else we would not be alive today. Unlike the other virtues called out here, it does not depend upon the resources of culture, language, narrative, and analytics to activate itself. Once we engage with those forces, we will find ourselves increasingly at odds with people who hold opposing views, but before we do so, we are all one family. Kindness, thus, is the glue that holds community together, and as such it deserves our greatest respect.

Fairness comes next. The ability to play fair, something children learn at a very early age, sets us apart from all other animals. That’s because it calls upon narrative and analytics to operationalize itself. Specifically, it asks us to imagine a situation in which we are the other person, and they are us, and to then determine whether or not we would endorse the action under consideration. This is the first bridge to connect us with them, and thus is the foundation for social equity and inclusion. Importantly, it is distinct from kindness, for it is possible to be kind without being fair and to be fair without being kind. Kindness by nature is personal, fairness by nature is impersonal, and together they govern our day-to-day ad hoc relationships.

To scale beyond local governance, we must transition from the essentially intuitive disciplines of kindness and fairness to the more formalized ones of morality and justice. Both of the latter are essential to social welfare, but neither comes into being easily, and each poses challenges humankind continues to struggle with.

Morality is the actionable extension of metaphysics. It teaches us how to align our behavior with the highest forces in the universe, be they sacred or secular. It does so through inspirational narratives that recruit us into imitating role models and committing to values we will live by and, if necessary, be willing to die for. These values are captured in moral codes that assist our day-to-day decision-making. We judge ourselves and others in terms of how well our actual behavior measures up to these codes.

In this way, morality becomes foundational to identity. As such, we want it to be both stable and authoritative. Religion provides stable authority by holding certain texts and traditions to be both sacred and undeniable. This works fine up to the boundaries of the religious faith, but beyond them it encounters disbelief and unbelief, as well as counter-beliefs, all of which deny such authority. The question for believers then becomes: is such denial acceptable, or must it be confronted and overcome?

Call this the challenge of righteousness. Deeply moved by their own commitments, the righteous seek to impose moral sanctions on entire populations that do not share their views. The current engagement with abortion rights in the U.S. is a relatively benign example. Conservative parties, empowered by the Supreme Court's 2022 decision overturning Roe v. Wade, are challenging a secular tradition of tolerance that is deeply ingrained in American culture. This tolerance is anchored by the First Amendment’s guarantee of religious freedom, itself a product of the European Enlightenment’s efforts to counteract more than a hundred years of sustained religious warfare between Protestants and Catholics, fueled by righteousness of a similar kind. At present, the First Amendment still has the upper hand, but in other societies we have watched the opposite unfold, and it can leave deep rents in the social fabric.

Whereas conservatives on the right are challenged when they seek to bend the domain of morality to their ends, progressives on the left are equally challenged when they seek to bend the domain of justice to theirs. Justice represents society’s best attempt to institutionalize fairness at scale. It comprises two domains: legal justice and social justice. Legal justice represents the rule of law. It is foundational to safety and security, ensuring accountability with respect to personal acts, laws, elections, and dispute resolution. Social justice, in contrast, represents a commitment to equity. It is aspirational, anchored in empathy for all those who are disadvantaged.

The challenge is that legal justice can reinforce, even institutionalize, social injustice, as both our prison and homeless populations bear witness. This is further exacerbated by failed autocratic states exporting their disadvantaged populations to democratic nations, creating crises of immigration around the world. In response, progressives committed to social justice often seek to subvert legal controls in order to create more equitable outcomes, turning a blind eye to illegal immigration and encampments, as well as misdemeanor crimes like shoplifting and drug use. This has the unintended consequence, however, of encouraging free riders to further exploit these looser controls, pushing the boundaries of tolerance ever closer to intolerability, as cities like San Francisco, Portland, and Seattle can testify.

To operate successfully at scale, both morality and justice call for a balance between accountability and empathy. The righteous tend to withdraw empathy in the name of accountability; the progressives, to withdraw accountability in the name of empathy. Neither approach suffices. Citizenship calls for us all to hold these two imperatives in tandem, even when they pull us in opposite directions.

That’s what I think. What do you think?


Image Credit: Unsplash and Geoffrey Moore

Sign up here to join 17,000+ leaders getting Human-Centered Change & Innovation Weekly delivered to their inbox every week.
