Or Just Removed Its Excuses?

LAST UPDATED: March 2, 2026 at 5:13 PM

by Braden Kelley and Art Inteligencia

I. The Question Everyone Is Whispering

Something fundamental has changed in how products are created.

Artificial intelligence can now generate working software in minutes. Designers can move from an idea to a functional prototype without waiting for engineering. Engineers can generate interface concepts, user flows, and even early product ideas with a few well-crafted prompts.

The traditional product development cycle — design, then build, then test — is collapsing into something faster, messier, and far more fluid.

In the past, the biggest constraint in innovation was the cost and time required to build something. Today, AI dramatically reduces that barrier. Entire features, experiments, and even applications can be created almost instantly.

Which raises an uncomfortable question that many product leaders, designers, and engineers are quietly asking:

If we can ship almost immediately, do we still need design thinking?

At first glance, the answer might seem obvious. Design thinking was created to help teams understand people, define the right problems, and avoid building the wrong solutions. Those goals have not disappeared.

But when the cost of building approaches zero, the role of design inevitably changes. The traditional pacing of discovery, ideation, prototyping, and testing begins to compress. The boundaries between designer and engineer begin to blur.

And as those boundaries dissolve, the question is no longer simply whether design thinking still matters.

The deeper question is whether the discipline itself must evolve to survive in a world where almost anyone can turn an idea into working software.

II. Design Thinking Was Built for a World of Scarcity

To understand how artificial intelligence is reshaping product creation, it helps to remember the environment in which design thinking originally emerged.

Design thinking did not appear because organizations suddenly discovered empathy or creativity. It emerged because building things was expensive, slow, and risky. Every product decision carried significant cost, and mistakes could take months or years to correct.

In that world, organizations needed a structured way to reduce uncertainty before committing engineering resources. Design thinking provided that structure.

Its now-famous stages helped teams move deliberately from understanding people to building solutions:

- Empathize — deeply understand the people you are designing for.

- Define — frame the real problem worth solving.

- Ideate — generate a wide range of possible solutions.

- Prototype — create rough representations of potential ideas.

- Test — validate whether those ideas actually work for people.

The goal was simple: avoid spending months building something no one actually needed.

Design thinking slowed teams down in the right places so they could move faster later. It created space for exploration before the heavy machinery of engineering was set in motion.

But this entire framework assumed one critical constraint:

Building was the most expensive part of innovation.

Prototypes were often static mockups. Experiments required engineering time. Even small product changes could take weeks or months to ship.

In other words, design thinking was optimized for a world where the biggest risk was building the wrong thing.

Today, AI is rapidly changing that assumption. When working software can be generated in minutes rather than months, the bottleneck shifts — and the role of design must evolve with it.

III. AI Has Flipped the Innovation Constraint

For most of the history of digital product development, the limiting factor in innovation was the ability to build. Even the best ideas had to wait in line for scarce engineering resources, long development cycles, and complex release processes.

Artificial intelligence is rapidly dismantling that constraint.

Today, AI tools can generate functional code, working interfaces, and interactive prototypes in minutes. What once required a team of specialists and weeks of effort can often be produced by a single individual in an afternoon.

Designers can now:

- Create interactive prototypes that behave like real products

- Generate front-end code directly from design concepts

- Rapidly explore multiple product directions

Engineers can now:

- Generate user interfaces and layouts

- Experiment with product concepts before committing to full builds

- Quickly iterate on product experiences

The barrier between idea and implementation is shrinking dramatically.

As a result, the core constraint in innovation is no longer the ability to build something. The new constraint is the ability to decide what should actually be built.

When creation becomes cheap, judgment becomes the scarce resource.

Organizations can now generate more ideas, features, and experiments than they have the capacity to evaluate thoughtfully. The risk is no longer simply building the wrong thing slowly.

The risk is building thousands of things quickly without enough clarity about which ones actually matter.

This shift fundamentally changes the role of design. Instead of primarily helping teams avoid costly mistakes in development, design increasingly becomes the discipline that helps organizations navigate overwhelming possibility.

IV. The Blurring of Roles: Designers Reach Forward, Engineers Reach Back

One of the most profound effects of AI in product development is the erosion of traditional professional boundaries.

For decades, the technology industry operated with relatively clear separations of responsibility. Designers focused on user needs, interaction models, and visual systems. Engineers translated those designs into working software. Product managers coordinated priorities and timelines between the two.

That structure was largely a reflection of technical limitations. Designing and building required specialized tools, knowledge, and workflows that made cross-disciplinary work difficult.

AI is rapidly dissolving those barriers.

Designers can now reach forward into the domain that once belonged exclusively to engineering. With AI-assisted tools, they can generate working interfaces, produce front-end code, and simulate complex user interactions without waiting for implementation.

At the same time, engineers can reach backward into design. AI systems can help them generate layouts, propose interface structures, and explore experience flows that once required specialized design expertise.

The result is a new kind of creative overlap:

- Designers who can prototype in code

- Engineers who can explore experience design

- Product creators who move fluidly between disciplines

The traditional model of work moving through a linear chain — research to design to engineering — begins to give way to a far more integrated creative process.

The future product creator is not defined by a job title, but by the ability to move fluidly between understanding problems and building solutions.

This does not mean design expertise or engineering skill becomes less important. If anything, the opposite is true. As tools make it easier for everyone to participate in creation, the depth of real craft becomes more visible and more valuable.

But it does mean the rigid boundaries between “designer” and “builder” are beginning to dissolve, creating a new generation of hybrid creators who can move seamlessly between imagining, designing, and shipping experiences.

V. The Death of the Handoff

For decades, most product development operated like a relay race. Work moved from one team to the next through a series of formal handoffs.

Researchers gathered insights and passed them to designers. Designers created wireframes and mockups that were handed to engineering. Engineers translated those designs into working software and eventually passed the finished product to testing and operations.

Each transition introduced delays, misinterpretations, and loss of context. The original understanding of the problem often became diluted as it traveled through the system.

Artificial intelligence is accelerating the collapse of this model.

When individuals can move rapidly from idea to prototype to functional product, the need for rigid handoffs begins to disappear. A single person can now:

- Explore a user problem

- Design a potential solution

- Generate working code

- Launch an experiment

Instead of waiting for work to pass from one discipline to another, creators can stay connected to the entire lifecycle of an idea.

The distance between insight and implementation is shrinking.

This shift has profound implications for how innovation happens inside organizations. Instead of large teams coordinating complex handoffs, smaller groups — or even individuals — can rapidly test ideas and learn from real-world feedback.

Product development begins to look less like an industrial assembly line and more like a creative studio, where ideas are explored, built, and refined continuously.

The most effective teams in this environment will not simply move faster. They will maintain ownership of ideas from the moment a problem is discovered all the way through to the moment a solution is experienced by real people.

VI. What AI Actually Kills

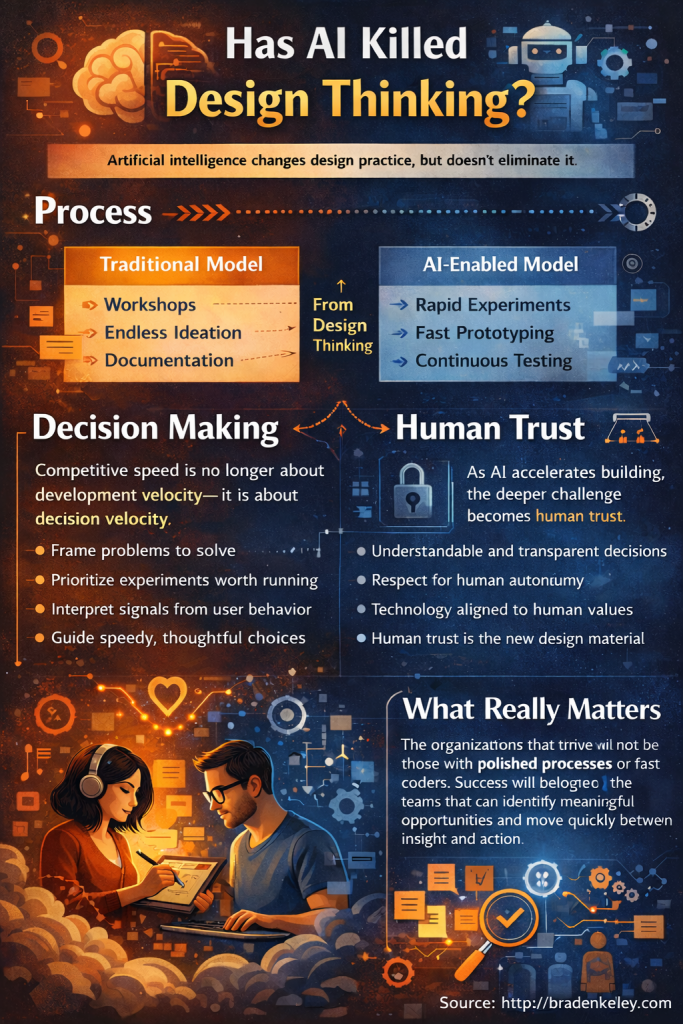

Artificial intelligence is not killing design thinking.

What it is killing are many of the habits that organizations adopted in the name of design thinking but that were never truly about understanding people or solving meaningful problems.

For years, some teams have mistaken the appearance of innovation for the practice of it. Workshops replaced experiments. Sticky notes replaced decisions. Slide decks replaced prototypes.

When building was slow and expensive, these behaviors were often tolerated because teams needed time to align before committing resources. But in a world where working solutions can be generated almost instantly, those habits quickly become friction.

AI removes the excuses that allowed these patterns to persist.

Process Theater

Innovation workshops that generate energy but not outcomes become difficult to justify when teams can build and test ideas immediately.

Endless Ideation

Brainstorming sessions that produce dozens of ideas without committing to experiments lose their value when ideas can be rapidly turned into prototypes and evaluated in the real world.

Documentation Instead of Exploration

Detailed reports, long strategy decks, and static artifacts once helped communicate ideas across teams. But when AI allows concepts to be expressed through working experiences, documentation becomes less important than experimentation.

Safe Innovation

Perhaps most importantly, AI challenges organizations that use process as a shield against risk. When it becomes easy to test bold ideas quickly and cheaply, avoiding experimentation becomes a choice rather than a necessity.

AI doesn’t eliminate design thinking. It eliminates the distance between thinking and doing.

The organizations that thrive in this environment will not be the ones with the most polished innovation processes. They will be the ones that are most willing to replace discussion with discovery and ideas with experiments.

VII. The New Role of Design: Decision Velocity

When the cost of building drops dramatically, the nature of competitive advantage changes.

In the past, organizations succeeded by efficiently transforming ideas into products. Engineering capacity, technical expertise, and operational discipline were often the primary constraints.

But when AI can generate working software, prototypes, and experiments almost instantly, the challenge is no longer how quickly something can be built.

The challenge becomes how quickly and wisely teams can decide what is actually worth building.

In an AI-driven world, innovation speed is no longer about development velocity — it is about decision velocity.

This is where the role of design evolves.

Design shifts from primarily producing artifacts — wireframes, mockups, and prototypes — to guiding the choices that shape meaningful innovation.

Designers increasingly become the people who help teams:

- Frame the right problems to solve

- Clarify human needs and motivations

- Prioritize which ideas deserve experimentation

- Interpret signals from real-world user behavior

In other words, design becomes less about shaping the interface of a product and more about shaping the direction of learning.

When organizations can generate thousands of potential solutions, the real advantage lies in identifying the few that actually create meaningful value for people.

Designers, at their best, help organizations navigate that complexity. They connect technology to human context, helping teams avoid the trap of building faster without thinking better.

In the AI era, design is not slowing innovation down. It is helping organizations move quickly without losing their sense of where they should be going.

VIII. From Design Thinking to Design Doing

As artificial intelligence compresses the distance between idea and implementation, the nature of design practice begins to change. The emphasis shifts away from structured stages and toward continuous experimentation.

Traditional design thinking frameworks helped teams organize their thinking before committing to build. But in an AI-enabled environment, building itself becomes part of the thinking process.

Instead of long cycles of analysis followed by development, teams can now explore ideas directly through working prototypes and rapid experiments.

The most effective teams no longer separate thinking from building. They think by building.

This shift marks a move from design thinking to what might be called design doing.

In this model, learning happens through fast cycles of creation, feedback, and refinement. Ideas are not debated endlessly in workshops or captured in lengthy documents. They are explored through tangible experiences that can be observed, tested, and improved.

The practical differences begin to look like this:

| Traditional Model | AI-Enabled Model |
|---|---|
| Workshops and brainstorming sessions | Rapid experiments and live prototypes |
| Personas and research summaries | Behavioral data and real-world signals |
| Concept mockups | Functional prototypes |
| Long planning cycles | Continuous learning loops |

None of this diminishes the importance of understanding people. If anything, deep human insight matters even more as the pace of experimentation accelerates.

What changes is how that understanding is expressed. Instead of existing primarily as documents or presentations, insight becomes embedded directly into the experiences teams create and test.

In an AI-native organization, design is no longer a phase that happens before development begins. It becomes an ongoing activity woven directly into the act of building and learning.

IX. Human Trust Becomes the New Design Material

As artificial intelligence accelerates the speed of building, the most important design challenges begin to shift away from usability and toward something deeper: trust.

When products can be created, modified, and deployed almost instantly, the risk is not simply poor interface design. The risk is creating experiences that feel disconnected from human values, human context, and human expectations.

AI makes it easier than ever to generate functionality. But it does not automatically ensure that what is generated is responsible, understandable, or aligned with the needs of the people who will use it.

In an AI-driven world, the most important design material is no longer pixels or screens — it is human trust.

This raises a new set of responsibilities for designers, engineers, and product leaders alike.

Teams must think carefully about questions such as:

- Do people understand what the system is doing?

- Are decisions being made transparently?

- Does the experience respect human autonomy?

- Does the technology reinforce or erode confidence?

As AI systems become more powerful, the danger is not just that they might fail. The danger is that they might succeed in ways that quietly undermine the relationship between organizations and the people they serve.

Design therefore becomes a critical safeguard. It ensures that rapid technological capability does not outpace thoughtful consideration of human consequences.

In this sense, the role of design expands beyond shaping products. It becomes the discipline that ensures technology remains grounded in human meaning, responsibility, and trust.

X. The Future: Designers Who Ship, Engineers Who Empathize

As AI blurs the traditional boundaries between design and engineering, the most valuable creators in the future will be those who can move fluidly between imagining, designing, and building.

Designers will need to ship working products, not just static prototypes. Engineers will need to empathize deeply with users, understanding problems and shaping experiences that align with human needs.

The new hybrid product creator embodies both curiosity and capability, bridging the gap between thinking and doing. They are able to:

- Rapidly translate insights into working solutions

- Experiment and learn from real-world user behavior

- Balance technical feasibility with human desirability

- Maintain alignment between strategy, design, and execution

In this new landscape, design thinking does not disappear — it evolves. AI removes many of the barriers that previously prevented designers and engineers from collaborating fully and iterating quickly.

The organizations that succeed will be those where everyone has the ability to both understand humans and act on that understanding at the speed of AI.

The future belongs to hybrid creators who can navigate ambiguity, make fast decisions, and embed human trust into every experiment. In such a world, innovation is no longer the domain of specialists — it is the responsibility of anyone capable of connecting insight with action.

XI. The Real Question Leaders Should Be Asking

The debate is often framed as a dramatic question: “Has AI killed design thinking?” But this framing misses the deeper challenge facing organizations today.

The real question is not whether design thinking survives — it is whether organizations are prepared to operate in a world where anyone can turn ideas into working products almost instantly.

In this AI-accelerated environment, success depends less on the speed of coding or the elegance of design frameworks. It depends on human judgment, understanding, and alignment.

Leaders must ask themselves:

- Do our teams know what problems are truly worth solving?

- Can we prioritize experiments that create real human value?

- Are we embedding human trust and ethical consideration into everything we build?

- Are our designers and engineers equipped to operate across traditional boundaries?

In this new era, the organizations that thrive will not be the ones with the fastest developers or the slickest design processes.

They will be the organizations that can rapidly identify meaningful opportunities, make thoughtful decisions, and maintain human-centered principles while moving at the speed of AI.

Innovation will no longer belong to the people who can code. It will belong to the people who understand humans well enough to know what should be built in the first place.

The role of leadership is no longer just managing workflows — it is shaping the environment in which hybrid creators can think, act, and build responsibly at unprecedented speed.

New Tools for the New Design Reality

To help you find problems worth solving and to design and execute experiments, I created a couple of visual, collaborative tools for thriving in this new reality. Download them both from my store and enjoy!

- Problem Finding Canvas — Only $4.99 for a limited time

- Experiment Canvas — FREE

FAQ: AI and the Evolution of Design Thinking

1. Has AI made design thinking obsolete?

   No. AI has not killed design thinking, but it has changed the context in which it operates. Traditional design thinking frameworks assumed that building was slow and expensive. With AI accelerating the creation of prototypes and software, design thinking evolves from a staged process into a continuous cycle of experimentation and decision-making.

2. How are the roles of designers and engineers changing with AI?

   AI blurs the traditional boundaries between designers and engineers. Designers can now generate working code and functional prototypes, while engineers can explore user experience and interface design. The future favors hybrid creators who can both understand human needs and rapidly implement solutions.

3. What becomes the main focus of design in an AI-driven product environment?

   The primary focus shifts from producing artifacts to guiding decision-making and protecting human trust. Design becomes the discipline that helps teams prioritize meaningful experiments, interpret real-world feedback, and ensure that rapid technological development remains aligned with human values and needs.

Image credits: ChatGPT

Content Authenticity Statement: The topic area, key elements to focus on, etc. were decisions made by Braden Kelley, with a little help from ChatGPT to clean up the article and add citations.

Sign up here to get Human-Centered Change & Innovation Weekly delivered to your inbox every week.