LAST UPDATED: April 25, 2026 at 11:55 AM

GUEST POST from Art Inteligencia

I. Introduction: The Convergence of Code and Cells

The traditional “move fast and break things” mantra of software development is hitting a wall of complexity. As our digital ecosystems become more interconnected, the “breaking” part is no longer a minor inconvenience — it’s a systemic risk.

Meanwhile, biotechnology — a field where “breaking things” can cost billions of dollars or human lives — has spent decades developing rigorous frameworks for managing extreme uncertainty. We are entering an era where software is becoming as complex as biological systems, requiring a shift in how we approach creation.

- The Paradigm Shift: Moving from “Iterative Tweaking” (minor UI adjustments) to “Discovery-Driven Development” (solving fundamental logic puzzles).

- The Thesis: To build more resilient and impactful products, software teams must borrow the experimental rigor, ethical frameworks, and architectural patience of biotech.

- The Human Element: Shifting team mindset from “Feature Factories” focused on output to “Scientific Investigators” focused on outcomes.

II. The “Clinical Trial” Approach to Feature Validation

In software, we often celebrate the “pivot,” but in biotech, a pivot is a failure of the hypothesis. By applying the structure of clinical trials to our development cycles, we can move away from the “throw it at the wall and see what sticks” method and toward a high-fidelity validation process.

Phase I: Safety and Feasibility

In a Phase I trial, a drug is given to a small group of people for the first time, and the central question is whether it is safe, not yet whether it works. In software, Phase I is about isolating the core technical assumption. Can the algorithm actually process the data at scale? Does the integration work? This isn’t an MVP with a pretty UI; it is a “lab bench” test to ensure the technical foundation is safe to build upon.

Phase II: Efficacy (The Human Response)

Once we know the code is “safe,” we must prove it works, not just that it runs but that it solves the human problem. This phase involves controlled testing with a small, specific cohort of users to measure the human response: changes in behavior, reduction in friction, or true value creation. If the efficacy isn’t there, the feature is “terminated” before further investment is wasted.
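One way to make the Phase II “advance or terminate” decision concrete is to fix a primary endpoint in advance and test it statistically. The sketch below assumes the endpoint is a success rate (for example, the share of users who complete a task) and compares a treatment cohort against a control cohort with a standard two-proportion z-test. The names `CohortResult` and `phase_two_decision` are hypothetical, and the 0.05 significance level is a conventional default rather than something the approach prescribes.

```python
import math
from dataclasses import dataclass


@dataclass
class CohortResult:
    successes: int  # users who reached the primary endpoint
    total: int      # users enrolled in the cohort


def two_proportion_z_test(treatment: CohortResult, control: CohortResult) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in success rates."""
    p_treat = treatment.successes / treatment.total
    p_ctrl = control.successes / control.total
    # Pooled success rate under the null hypothesis that the feature has no effect
    pooled = (treatment.successes + control.successes) / (treatment.total + control.total)
    se = math.sqrt(pooled * (1 - pooled) * (1 / treatment.total + 1 / control.total))
    if se == 0:
        return 0.0, 1.0  # no variation at all: every user succeeded or none did
    z = (p_treat - p_ctrl) / se
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def phase_two_decision(treatment: CohortResult, control: CohortResult, alpha: float = 0.05) -> str:
    """Advance only if the feature beats control with statistical significance."""
    z, p_value = two_proportion_z_test(treatment, control)
    return "advance" if z > 0 and p_value < alpha else "terminate"


# Usage: 120 of 400 treated users vs. 80 of 400 controls reached the endpoint
print(phase_two_decision(CohortResult(120, 400), CohortResult(80, 400)))  # advance
```

Requiring `z > 0` keeps a significant *negative* effect from being read as success. A real trial design would typically also fix the cohort size before enrolling anyone, so the test is not run repeatedly until it happens to pass.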

Phase III: Scale and Side Effects

A drug might work for ten people but cause systemic issues for ten thousand. In software, Phase III is the rollout strategy where we monitor for “systemic toxicity” — technical debt, performance degradation, or unexpected UX friction that only emerges at scale. We aren’t just looking for bugs; we are looking for negative externalities.
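A Phase III rollout can be sketched as staged exposure with guardrail metrics: widen the audience one step at a time, and pull the feature the moment any guardrail shows “systemic toxicity.” The stage percentages, metric names, and tolerances below are hypothetical placeholders, and the sketch assumes every guardrail is a “lower is better” metric such as latency or error rate.

```python
from dataclasses import dataclass

# Hypothetical rollout stages: the share of users exposed at each step
STAGES = [0.01, 0.05, 0.25, 1.0]


@dataclass
class Guardrail:
    name: str
    baseline: float        # value measured before the rollout began
    max_regression: float  # allowed relative worsening, e.g. 0.10 means 10%


def breached(guardrail: Guardrail, observed: float) -> bool:
    # Assumes "lower is better" metrics such as latency or error rate
    return observed > guardrail.baseline * (1 + guardrail.max_regression)


def next_stage(current: float, guardrails: list[Guardrail], observed: dict[str, float]) -> float:
    """Advance one stage if every guardrail holds; otherwise roll back to 0%."""
    if any(breached(g, observed[g.name]) for g in guardrails):
        return 0.0  # side effect detected at this scale: pull the feature
    position = STAGES.index(current)
    return STAGES[min(position + 1, len(STAGES) - 1)]


guardrails = [Guardrail("p95_latency_ms", 200.0, 0.10), Guardrail("error_rate", 0.01, 0.20)]
print(next_stage(0.05, guardrails, {"p95_latency_ms": 210.0, "error_rate": 0.011}))  # 0.25
print(next_stage(0.05, guardrails, {"p95_latency_ms": 250.0, "error_rate": 0.011}))  # 0.0
```

Rolling all the way back to zero, rather than one stage, is a deliberately conservative choice: a regression that appears at 5% exposure is treated as a signal to stop and investigate, not to hover.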

The Protocol Mentality: Every Jira ticket or user story should be treated as a clinical protocol. It must start with a falsifiable hypothesis, a defined “dosage” (the scope), and a clear success metric (the primary endpoint).
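To make the protocol idea tangible, a user story can carry its hypothesis, dosage, and primary endpoint as data rather than prose, with the success threshold fixed before any results come in. The `FeatureProtocol` class and its field names are hypothetical, and the sketch assumes a “higher is better” endpoint.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureProtocol:
    hypothesis: str        # falsifiable statement about user behavior
    dosage: str            # the scope of the change
    primary_endpoint: str  # name of the single metric that decides the outcome
    baseline: float        # current value of the endpoint
    target: float          # value that must be reached for the hypothesis to hold

    def __post_init__(self) -> None:
        # A hypothesis whose target is already met cannot fail, so it is not falsifiable
        if self.target <= self.baseline:
            raise ValueError("target must exceed the baseline")

    def evaluate(self, observed: float) -> str:
        return "supported" if observed >= self.target else "falsified"


protocol = FeatureProtocol(
    hypothesis="A setup checklist raises first-week activation",
    dosage="New accounts only; checklist shown on the dashboard",
    primary_endpoint="week_1_activation_rate",
    baseline=0.20,
    target=0.23,
)
print(protocol.evaluate(0.21))  # falsified
```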

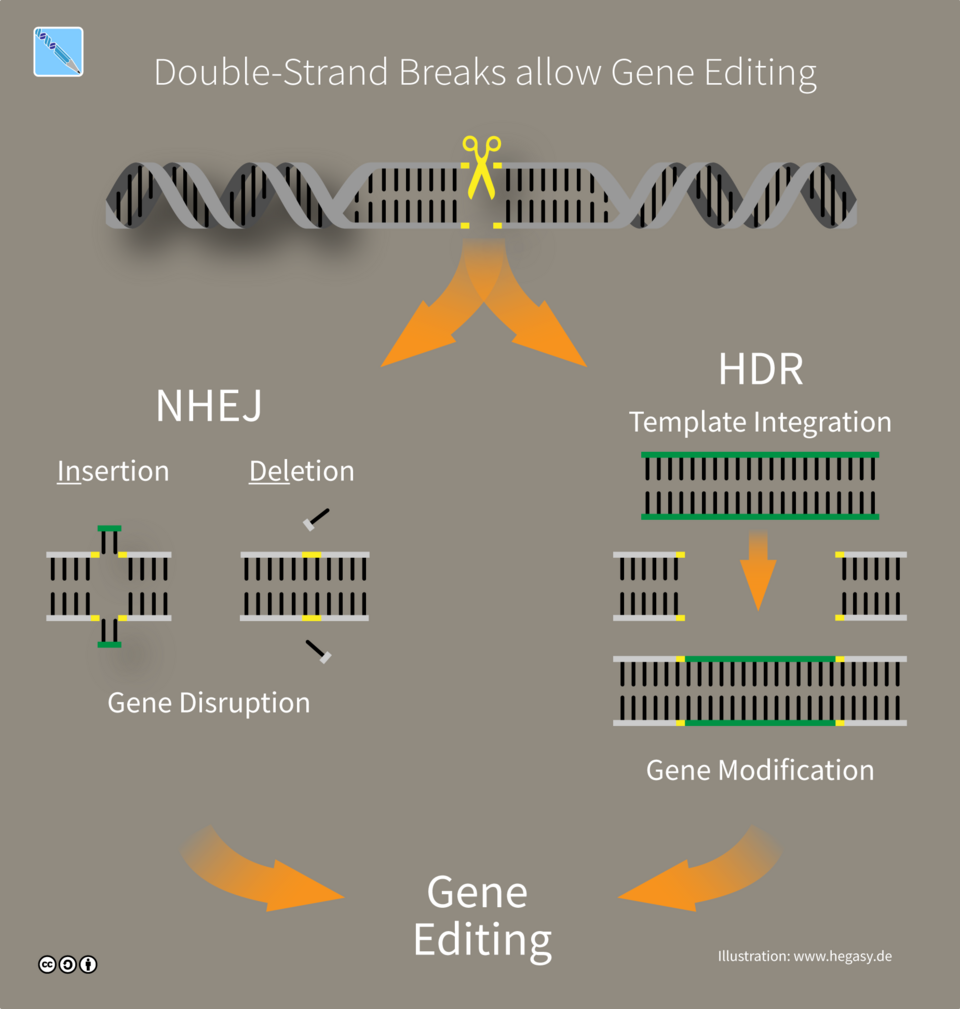

III. Modular Architecture: The “CRISPR” of Software

In biotechnology, the breakthrough of CRISPR-Cas9 changed everything by allowing scientists to edit specific DNA sequences with surgical precision. Software architecture, by contrast, often suffers from “monolithic bloat,” where one small change can lead to unforeseen mutations across the entire system. By adopting a “genomic” approach to modularity, we can build software that is both more resilient and easier to evolve.

Precision Engineering and Gene Editing

Borrowing the concept of gene editing, software teams should strive for components that are highly modular and “hot-swappable.” Just as a specific genetic sequence can be targeted without rewriting the entire genome, our microservices and functions should be designed to be updated, replaced, or deleted without destabilizing the organism — the application.
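In code, “hot-swappable” usually means callers depend on a stable contract rather than a concrete implementation. The sketch below uses Python’s structural `typing.Protocol` as that contract and a small registry to swap implementations at runtime; `Recommender`, `ComponentRegistry`, and both implementations are hypothetical. Across microservices, the same role would be played by a versioned API contract instead of an in-process interface.

```python
from typing import Dict, List, Protocol, cast


class Recommender(Protocol):
    """The stable contract every implementation must honor."""
    def recommend(self, user_id: str, k: int) -> List[str]: ...


class PopularItems:
    def __init__(self, popular: List[str]) -> None:
        self.popular = popular

    def recommend(self, user_id: str, k: int) -> List[str]:
        return self.popular[:k]


class RecentlyViewed:
    def __init__(self, history: Dict[str, List[str]]) -> None:
        self.history = history

    def recommend(self, user_id: str, k: int) -> List[str]:
        return self.history.get(user_id, [])[:k]


class ComponentRegistry:
    """Maps a role name to whichever implementation currently fills it."""
    def __init__(self) -> None:
        self._components: Dict[str, object] = {}

    def register(self, role: str, component: object) -> None:
        self._components[role] = component  # replaces the previous implementation

    def get(self, role: str) -> object:
        return self._components[role]


def homepage(registry: ComponentRegistry, user_id: str) -> List[str]:
    # Depends only on the Recommender contract, never on a concrete class
    recommender = cast(Recommender, registry.get("recommender"))
    return recommender.recommend(user_id, k=3)


registry = ComponentRegistry()
registry.register("recommender", PopularItems(["a", "b", "c", "d"]))
print(homepage(registry, "u1"))  # ['a', 'b', 'c']
registry.register("recommender", RecentlyViewed({"u1": ["x", "y"]}))  # the "gene edit"
print(homepage(registry, "u1"))  # ['x', 'y']
```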

Bio-mimicry: Self-Healing and Autophagy

Biological systems have evolved incredible ways to maintain health. We can borrow these concepts for our infrastructure:

- Homeostasis (Self-Healing): Developing systems that automatically detect when they have drifted from a “healthy” state and trigger automated recovery protocols without human intervention.

- Autophagy (Self-Cleaning): In biology, cells “eat” their own damaged parts to stay healthy. In software, this means building routines that automatically identify and decommission orphaned data, dead code, or underutilized resources to prevent “architectural decay.”
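Both ideas can be sketched as small reconciliation routines that a scheduler might run periodically. In this hypothetical sketch, the `healthy` flag stands in for a real health check, and `autophagy` only *returns* reclamation candidates instead of deleting them, on the assumption that most teams would want a review or grace period before anything is actually decommissioned.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Worker:
    name: str
    healthy: bool = True  # stand-in for the result of a real health check
    restarts: int = 0

    def restart(self) -> None:
        self.healthy = True
        self.restarts += 1


@dataclass
class Resource:
    name: str
    owner: Optional[str]  # None means nothing references it any more
    last_used: float      # seconds since the epoch


def homeostasis(workers: List[Worker]) -> List[str]:
    """Detect workers that drifted from a healthy state and restore them."""
    restarted = []
    for worker in workers:
        if not worker.healthy:
            worker.restart()
            restarted.append(worker.name)
    return restarted


def autophagy(resources: List[Resource], now: float, max_idle: float) -> Tuple[List[Resource], List[str]]:
    """Split resources into (kept, reclaimed); orphaned or long-idle ones are reclaimed."""
    kept, reclaimed = [], []
    for resource in resources:
        if resource.owner is None or now - resource.last_used > max_idle:
            reclaimed.append(resource.name)
        else:
            kept.append(resource)
    return kept, reclaimed
```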

Risk Mitigation and Contamination Control

In a lab, a single drop of “contaminated” material can ruin an entire experiment. Biotech handles this through isolation and containment. Software teams can apply this by shrinking the “blast radius” of updates. By using advanced containerization and strict API contracts, we ensure that if a specific “gene” (feature) fails or is corrupted, the rest of the software organism remains healthy and functional.
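Containerization and API contracts limit contamination at build and deploy time. A complementary runtime technique, not named in the original but widely used for the same purpose, is the circuit breaker: after repeated failures, callers stop invoking the failing feature and serve a fallback until a cool-down passes. The minimal sketch below is an illustration of that pattern, not a production library; the thresholds are arbitrary defaults, and the injectable `clock` exists only to make the behavior easy to test.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Stops calling a failing feature so its failures cannot spread."""

    def __init__(self, failure_threshold: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after  # seconds to stay open before retrying
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()  # open: isolate the feature entirely
            # Half-open: allow one trial call; a single failure re-opens the circuit
            self.opened_at = None
            self.failures = self.failure_threshold - 1
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0  # a success resets the count
        return result
```

The fallback is what keeps “the rest of the organism” healthy: the page still renders with a default, even while the failing feature is quarantined.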

IV. Embracing the “Long R&D” Cycle in an Agile World

The tech industry is obsessed with two-week sprints, but biotech understands that some breakthroughs require years of foundational research. To truly innovate, software teams must learn to balance the “Sprint” with the “Study,” creating space for deep research and development that doesn’t fit into a standard ticket cycle.

Deep Innovation vs. Surface Polish

There is a fundamental difference between optimizing a checkout flow and developing a new machine learning model. The former is a sprint; the latter is a “Lab Phase.” Recognizing when a problem is a “discovery” problem rather than a “delivery” problem allows leaders to allocate the right resources and timelines, preventing the burnout that occurs when trying to force breakthrough innovation into a rigid agile framework.

The Failure Lab: Valuing Negative Results

In biotech, a failed experiment is not a waste of time — it is a vital piece of data that prevents the company from spending billions on a dead end. Software culture often stigmatizes “failed” features. We must build “Failure Labs” where teams are rewarded for proving that a product direction was flawed early. A “successful failure” preserves capital and engineering bandwidth for more viable candidates.

Portfolio Management: Generics vs. Blockbusters

A healthy biotech company manages a balanced portfolio. Software teams should do the same:

- Generics: Maintaining and improving standard, expected features that keep the lights on and the users satisfied.

- Blockbuster Drugs: High-risk, high-reward “FutureHacking” projects that have the potential to disrupt the market or define a new category.

By categorizing work this way, innovation becomes a repeatable process of investment and discovery rather than a desperate search for the next “big thing.”
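Categorizing work only helps if the resulting balance is visible. A minimal sketch, assuming each backlog item is tagged with a category and an effort estimate, computes each category’s share of total effort so a team can compare it against whatever mix it has chosen. The `WorkItem` fields and category labels are hypothetical, and no particular target ratio is implied.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class WorkItem:
    title: str
    category: str  # "generic" or "blockbuster" in this sketch
    points: int    # estimated effort


def portfolio_mix(items: List[WorkItem]) -> Dict[str, float]:
    """Return each category's share of total estimated effort."""
    effort: Counter = Counter()
    for item in items:
        effort[item.category] += item.points
    total = sum(effort.values())
    if total == 0:
        return {}
    return {category: points / total for category, points in effort.items()}


backlog = [
    WorkItem("Fix billing export", "generic", 8),
    WorkItem("Accessibility pass", "generic", 12),
    WorkItem("Agentic onboarding prototype", "blockbuster", 5),
]
print(portfolio_mix(backlog))  # {'generic': 0.8, 'blockbuster': 0.2}
```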

V. Ethical Sequencing: Responsibility by Design

In the world of biotech, the question is rarely just “Can we do this?” but rather “Should we do this?” The industry is governed by bioethics and stringent regulatory oversight because the stakes are human health. As software increasingly dictates the flow of labor, information, and even democratic processes, we must adopt a similar ethical sequencing protocol.

Bioethics for Algorithms

Just as medical researchers must adhere to the principle of Primum non nocere (First, do no harm), software architects must evaluate the long-term impact of their code. This means assessing algorithms for bias, addictive patterns, or “toxic” data collection before they are ever deployed. We need to move toward a model where ethical impact is a non-negotiable part of the definition of “Done.”
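One narrow check that can be automated and attached to a definition of “Done” is a comparison of outcome rates across user groups. The sketch computes the disparate impact ratio (the lowest group’s rate of favorable outcomes divided by the highest) and applies the “four-fifths rule” from US employment-selection guidelines, where a ratio below 0.8 is treated as a warning sign. This illustrates a single metric, not a complete bias audit, and the group names in the usage line are hypothetical.

```python
from typing import Dict, Tuple


def selection_rates(outcomes: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """outcomes maps group -> (favorable outcomes, total decisions)."""
    return {group: favorable / total for group, (favorable, total) in outcomes.items()}


def disparate_impact_ratio(outcomes: Dict[str, Tuple[int, int]]) -> float:
    rates = selection_rates(outcomes)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody received the favorable outcome, so no group is favored
    return min(rates.values()) / highest


def passes_four_fifths(outcomes: Dict[str, Tuple[int, int]], threshold: float = 0.8) -> bool:
    return disparate_impact_ratio(outcomes) >= threshold


# 50% vs. 30% approval gives a ratio of 0.6, which fails the check
print(passes_four_fifths({"group_a": (50, 100), "group_b": (30, 100)}))  # False
```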

Informed Consent in UX

Most software “consent” is buried in fifty pages of legal jargon that no human reads. Borrowing from clinical research, we should move toward true Informed Consent. This involves transparent, human-centered design that clearly explains how data will be used, what the risks are, and what the user is “signing up for” in plain language, empowering the user rather than tricking them.
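Informed consent also has a data-model side: if consent is stored as a single “agreed to terms” flag, the product cannot honor it purpose by purpose. A minimal sketch, with hypothetical class and field names, records consent per purpose, keeps the plain-language explanation and risk next to each purpose, and defaults to *no* consent.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, Optional


@dataclass(frozen=True)
class Purpose:
    key: str
    plain_language: str  # one sentence the user actually reads
    risk: str            # the main risk, stated just as plainly


@dataclass
class ConsentRecord:
    user_id: str
    granted: Dict[str, datetime] = field(default_factory=dict)

    def grant(self, purpose: Purpose, when: Optional[datetime] = None) -> None:
        # Consent is recorded per purpose, never as one blanket "I agree"
        self.granted[purpose.key] = when or datetime.now(timezone.utc)

    def revoke(self, purpose: Purpose) -> None:
        self.granted.pop(purpose.key, None)

    def allows(self, purpose: Purpose) -> bool:
        # Default deny: no explicit opt-in means no consent
        return purpose.key in self.granted


analytics = Purpose(
    key="usage_analytics",
    plain_language="We record which screens you open so we can fix confusing ones.",
    risk="Your usage history is stored on our servers.",
)
record = ConsentRecord("user-42")
print(record.allows(analytics))  # False until the user opts in
```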

Institutional Review Boards (IRBs) for Tech

In biotech, an IRB must approve a study before it begins. Software teams can implement internal Innovation Review Boards. These cross-functional groups — comprising designers, engineers, and even sociologists — should evaluate major pivots or “FutureHacking” initiatives. Their role is to look past the quarterly ROI and consider the systemic “side effects” the software might have on the user’s life or the economy at large.

The Goal: To ensure that our digital “treatments” improve the human condition without creating a legacy of unintended consequences.

VI. Conclusion: Cultivating a High-Fidelity Future

The future of software development isn’t just about writing more lines of code; it’s about increasing the fidelity of our innovation. As we move into an era dominated by agentic AI and increasingly complex digital organisms, the chaotic “move fast and break things” approach is no longer sustainable.

By looking toward biotechnology, we find a roadmap for a more disciplined, ethical, and resilient way to build. When we treat our backlogs as scientific protocols and our architectures as living systems, we stop being “Feature Factories” and start being true pioneers of the digital frontier.

- Summary: The most successful software teams of the next decade will look less like assembly lines and more like high-performance research laboratories.

- The Call to Action: Start treating your next sprint as a series of controlled experiments. Evaluate your codebase for its “biological” health. Most importantly, ensure your innovation is always human-centered by design.

The Braden Kelley Perspective: Innovation isn’t just about the speed of delivery; it’s about the quality of the discovery. By borrowing the discipline of biotech, software teams can stop guessing and start solving for a better tomorrow.

Frequently Asked Questions

What is the primary benefit of applying biotech principles to software development?

The primary benefit is shifting from a “trial and error” approach to a “high-fidelity discovery” model. By using biotech’s rigorous validation phases, software teams can identify “toxic” features or technical debt early, saving significant capital and resources that would otherwise be wasted on non-viable products.

How does the “Clinical Trial” model differ from standard Agile Sprints?

While Sprints focus on rapid delivery and iteration, the Clinical Trial model prioritizes safety, efficacy, and scalability in distinct phases. It requires proving a core hypothesis in a “lab setting” before building out a full user interface, ensuring that the software solves a fundamental human problem rather than just adding surface-level polish.

Can small software teams implement these biotech-inspired strategies?

Absolutely. You don’t need a massive R&D budget to adopt a “Protocol Mentality.” Even small teams can begin by rewriting user stories as falsifiable hypotheses and instituting a “Failure Lab” culture where disproving a feature’s value is celebrated as a strategic win for the product’s long-term health.

Image credit: Google Gemini, Wikimedia Commons

Sign up here to get Human-Centered Change & Innovation Weekly delivered to your inbox every week.