Efficiency Breakthrough or Creative Bankruptcy?

LAST UPDATED: March 21, 2026 at 10:24 PM

by Braden Kelley and Art Inteligencia

Framing the Debate: Signals or Symptoms?

A new wave of layoffs across technology companies has reignited a familiar but increasingly urgent question: what exactly are we witnessing? On the surface, the explanation seems straightforward — companies are tightening costs, responding to macroeconomic pressures, and recalibrating after years of aggressive hiring. But beneath that surface lies a deeper and more consequential debate about the future of innovation, the role of engineers, and the impact of artificial intelligence on knowledge work itself.

Two competing narratives have quickly emerged. The first frames these layoffs as a rational and even necessary evolution. In this view, advances in AI-powered development tools, ranging from large language models to code-generation systems, have fundamentally altered the productivity equation. Engineers equipped with tools like Claude or OpenAI Codex can now accomplish in hours what once took days. The implication is clear: if output can be maintained or even increased with fewer people, then reducing headcount is not a sign of weakness but a signal of maturation. Companies are becoming leaner, more efficient, and ultimately more profitable.

The second narrative is far less optimistic. It suggests that layoffs are not a leading indicator of a smarter, AI-augmented future, but a trailing indicator of something more troubling — an innovation slowdown. According to this perspective, many technology companies have already harvested the most accessible opportunities within their existing platforms. What remains is incremental improvement rather than transformative change. In such an environment, cutting engineering talent becomes less about efficiency gains and more about a lack of compelling new problems to solve. The cupboard, in other words, may not be empty — but it may be significantly less full than it once was.

What makes this moment particularly complex is that both narratives can be true at the same time. AI is undeniably increasing productivity in certain domains, compressing development cycles and enabling smaller teams to deliver meaningful results. At the same time, innovation has never been solely a function of efficiency. Breakthroughs emerge from exploration, from cross-functional collisions, and from a willingness to invest in uncertain futures. Layoffs, especially when executed at scale, can disrupt the very conditions that make those breakthroughs possible.

This tension forces us to confront a more nuanced question: are these layoffs a signal of transformation or a symptom of stagnation? Are organizations courageously embracing a new model of AI-augmented work, or are they retreating into cost-cutting as a substitute for bold thinking? The answer matters, because it shapes not only how we interpret today’s decisions, but how we design organizations for tomorrow.

For leaders, the stakes extend beyond quarterly earnings. The choices being made now will determine whether AI becomes a catalyst for a new era of human-centered innovation or a tool that accelerates efficiency at the expense of imagination. For engineers, the implications are equally profound. Their roles are being redefined in real time — not just in terms of what they produce, but in how they create value within increasingly AI-mediated systems.

Ultimately, this is not just a debate about layoffs. It is a debate about what organizations choose to optimize for: productivity or possibility, efficiency or exploration, output or insight. And in that choice lies the future trajectory of innovation itself.

The Case for “Smarter, Leaner, More Profitable”

For many technology leaders, the recent wave of layoffs is not a retreat; it is a recalibration. The argument is grounded in a simple but powerful premise: the economics of software development have fundamentally changed. With the rapid advancement of AI-assisted coding tools, the amount of output a single engineer can produce has increased dramatically. What once required large, specialized teams can now be accomplished by smaller, more versatile groups augmented by intelligent systems.

Tools such as Claude and OpenAI Codex are not merely incremental improvements in developer productivity; they represent a shift in how work gets done. Routine coding tasks, boilerplate generation, debugging assistance, and even architectural suggestions can now be offloaded to AI. This allows engineers to spend less time writing repetitive code and more time focusing on higher-value activities such as system design, problem framing, and integration across complex environments.

In this emerging model, the role of the engineer evolves from builder to orchestrator. Instead of manually crafting every line of code, engineers guide, refine, and validate the outputs of AI systems. The result is a compression of development cycles — features are built faster, iterations occur more rapidly, and time-to-market shrinks. From a business perspective, this translates into a compelling opportunity: maintain or even increase output while reducing labor costs.

This logic is not without precedent. Across industries, waves of automation have consistently redefined the relationship between labor and productivity. In manufacturing, the introduction of robotics did not eliminate production; it scaled it. In many cases, it also improved quality and consistency. Proponents of the current shift argue that AI represents a similar inflection point for knowledge work. The companies that adapt fastest will be those that learn to pair human creativity with machine efficiency.

From a financial standpoint, the incentives are clear. Reducing headcount while sustaining output improves margins, a priority that has become increasingly important in an environment where growth-at-all-costs is no longer rewarded. Investors are placing greater emphasis on profitability and operational discipline, and companies are responding accordingly. Leaner teams are not just a byproduct of technological change — they are a strategic choice aligned with evolving market expectations.

There is also a strategic argument that goes beyond cost savings. By automating lower-value tasks, organizations can theoretically redeploy human talent toward more innovative efforts. Engineers freed from routine work can focus on solving harder problems, exploring new product ideas, and experimenting with emerging technologies. In this view, AI does not replace innovation capacity; it expands it by removing friction from the development process.

Smaller teams can also mean faster decision-making. With fewer layers of coordination required, organizations can become more agile, responding quickly to changing market conditions and customer needs. This agility is often cited as a competitive advantage, particularly in fast-moving technology sectors where speed can determine success or failure.

Ultimately, the “smarter, leaner” argument rests on a belief that efficiency and innovation are not mutually exclusive. Instead, they are mutually reinforcing. By leveraging AI to increase productivity, companies can create the financial and operational headroom needed to invest in the next wave of innovation. Layoffs, in this context, are not an admission of weakness — they are a signal that the underlying system of value creation is being rewritten.

The Case for “Innovation Is Running Dry”

While the efficiency narrative is compelling, an equally important — and more unsettling — interpretation of recent layoffs is gaining traction: that they reflect not technological progress, but an innovation slowdown. In this view, companies are not simply becoming leaner because they can do more with less, but because they have fewer truly novel problems worth investing in. The layoffs, therefore, are less a signal of transformation and more a symptom of diminishing opportunity.

Over the past decade, many technology companies have scaled around a set of highly successful platforms and business models. These platforms have been optimized, expanded, and monetized with remarkable effectiveness. But maturity brings constraints. As systems stabilize and markets saturate, the number of greenfield opportunities naturally declines. What remains is often incremental improvement — refinements, extensions, and efficiencies — rather than the kind of breakthrough innovation that requires large, exploratory engineering teams.

In this context, layoffs can be interpreted as a rational response to a shrinking frontier. If there are fewer bold bets to pursue, there is less need for the capacity required to pursue them. The risk, however, is that this becomes a self-reinforcing cycle. As organizations reduce investment in exploration, they further limit their ability to discover the next wave of opportunity. Over time, efficiency begins to crowd out possibility.

Compounding this dynamic is an increasing reliance on metrics that prioritize productivity over potential. Organizations are becoming exceptionally good at measuring what is already known — velocity, output, utilization — but far less adept at valuing what has yet to be discovered. When success is defined primarily by efficiency gains, it becomes harder to justify the uncertainty and longer time horizons associated with breakthrough innovation.

The rise of AI tools adds another layer of complexity. While these tools can accelerate development, they do not inherently generate new insight. They are trained on existing patterns, which means they are exceptionally effective at extending the present but less equipped to invent the future. This creates the risk of an “illusion of progress,” where output increases but originality does not. More code is produced, but not necessarily more meaningful innovation.

There are also significant cultural consequences to consider. Layoffs, particularly when they affect engineering and product teams, can erode trust and psychological safety within an organization. When employees perceive that their roles are precarious, they are less likely to take risks, challenge assumptions, or pursue unconventional ideas. Yet these behaviors are precisely what fuel innovation. In attempting to optimize for efficiency, companies may inadvertently suppress the very creativity they depend on for long-term growth.

Another often overlooked impact is the loss of institutional knowledge. Experienced engineers carry not just technical expertise, but contextual understanding of systems, decisions, and past experiments. When they leave, they take with them insights that are difficult to codify or replace. This loss can slow future innovation efforts, even as short-term efficiency metrics appear to improve.

Ultimately, the concern is not that companies are becoming more efficient — it is that they may be becoming too narrowly focused on efficiency at the expense of exploration. Innovation requires slack, curiosity, and a willingness to invest in uncertain outcomes. When organizations begin to treat these elements as expendable, they risk signaling something far more significant than cost discipline: a diminishing appetite for invention itself.

The Human-Centered Tension: Productivity vs. Possibility

Beneath the surface of the efficiency versus stagnation debate lies a deeper, more human tension — one that cannot be resolved by technology alone. At its core, innovation has never been just about output. It has always been about the quality of thinking, the diversity of perspectives, and the collisions between ideas that spark something new. When organizations focus too narrowly on productivity, they risk overlooking the very conditions that make possibility achievable.

Innovation does not emerge from isolated efficiency; it emerges from interaction. It is the byproduct of cross-functional curiosity: engineers engaging with designers, product managers challenging assumptions, customers reframing problems, and leaders creating space for exploration. These interactions are often messy, inefficient, and difficult to measure. But they are also where breakthroughs live. When layoffs reduce not just headcount but diversity of thought and opportunities for collaboration, the innovation system itself becomes less dynamic.

The rise of AI-augmented work introduces a new layer to this tension. As engineers increasingly rely on AI tools to generate code, suggest solutions, and optimize workflows, their role begins to shift. They move from hands-on builders to orchestrators of machine-assisted output. While this shift can increase speed and efficiency, it also raises an important question: what happens to deep craft? The tacit knowledge developed through wrestling with complexity — the kind that often leads to unexpected insights — may be diminished if too much of the process is abstracted away.

There is also a cognitive risk. AI systems are designed to identify and replicate patterns based on existing data. This makes them powerful tools for scaling what is already known, but less effective at challenging foundational assumptions. If organizations become overly dependent on these systems, they may unintentionally standardize thinking. The range of possible solutions narrows, not because people lack creativity, but because the tools they use guide them toward familiar patterns.

Trust plays a critical role in navigating this tension. In environments where employees feel secure, valued, and empowered, they are more likely to experiment, take risks, and pursue unconventional ideas. Layoffs, particularly when they are frequent or poorly communicated, can erode that trust. The result is a more cautious workforce — one that prioritizes safety over exploration. In such environments, productivity may remain high, but the willingness to pursue breakthrough innovation often declines.

Curiosity is the other essential ingredient. It is the force that drives individuals to ask better questions, challenge the status quo, and seek out new possibilities. Yet curiosity requires space — time to think, room to explore, and permission to deviate from immediate objectives. When organizations optimize relentlessly for efficiency, that space tends to disappear. Every moment is accounted for, every effort measured, and every outcome expected to justify itself in the short term.

This creates a paradox. The same tools and strategies that enable organizations to move faster can also constrain their ability to think differently. Speed without reflection can lead to acceleration in the wrong direction. Efficiency without exploration can result in incremental progress that ultimately limits long-term growth.

For leaders, the challenge is not to choose between productivity and possibility, but to intentionally design for both. This means recognizing that innovation systems require balance — between execution and exploration, between structure and flexibility, and between human judgment and machine assistance. It requires protecting the conditions that enable creativity even as new technologies reshape how work gets done.

Ultimately, the question is not whether AI will make organizations more efficient; it already does. The question is whether leaders will use that efficiency to create more space for human ingenuity, or whether they will allow it to crowd out the very behaviors that make innovation possible in the first place.

The Future of Innovation in the Age of AI: Augmentation or Abdication?

As organizations navigate layoffs, AI adoption, and shifting expectations around productivity, the future of innovation is not predetermined — it is being actively shaped by the choices leaders make today. The central question is no longer whether artificial intelligence will transform how work gets done, but how that transformation will be directed. Will AI serve as an amplifier of human ingenuity, or will it become a mechanism for narrowing ambition in the pursuit of efficiency?

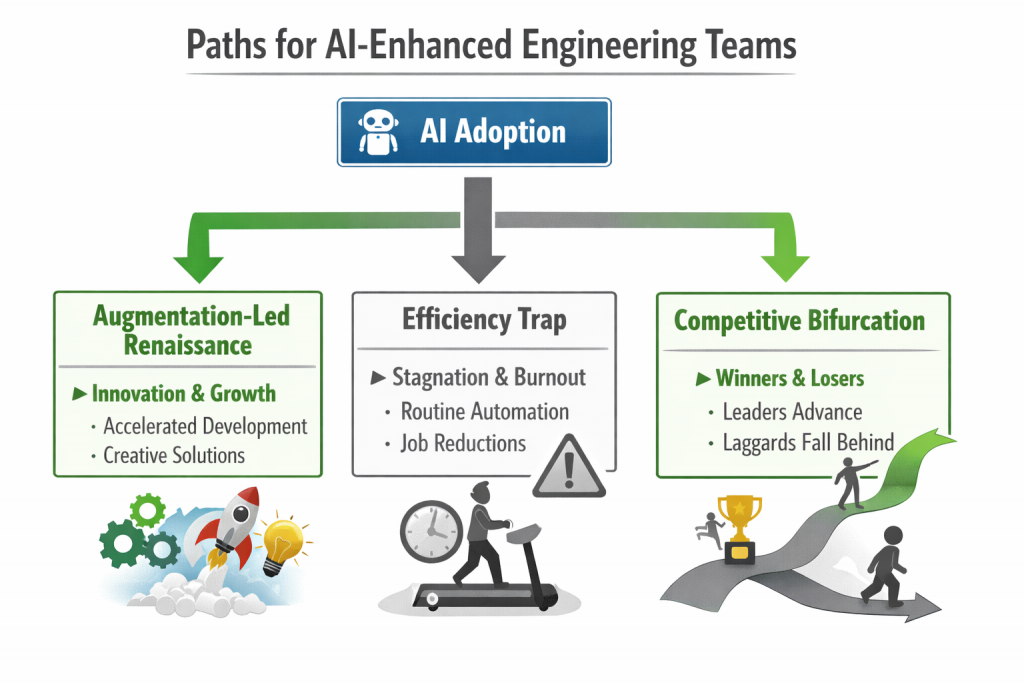

Three distinct paths are beginning to emerge. The first is an augmentation-led renaissance, where organizations successfully combine human creativity with machine capability. In this scenario, AI handles the repetitive and computationally intensive aspects of work, freeing humans to focus on problem framing, experimentation, and breakthrough thinking. Innovation accelerates not because there are fewer people, but because those people are empowered to operate at a higher level of abstraction and impact.

The second path is the efficiency trap. Here, organizations become so focused on optimizing output and reducing cost that they gradually lose their capacity for exploration. AI is used primarily to streamline existing processes rather than to unlock new possibilities. Over time, these organizations become highly efficient at executing yesterday’s ideas, but increasingly disconnected from tomorrow’s opportunities. What appears to be strength in the short term reveals itself as fragility in the long term.

The third path is a bifurcation of the competitive landscape. Some organizations will lean into augmentation, investing in both AI capabilities and the human systems required to harness them effectively. Others will prioritize efficiency, focusing on cost control and incremental gains. The result is a widening gap between companies that consistently generate new value and those that primarily replicate and optimize existing models. In such an environment, innovation becomes a defining differentiator rather than a baseline expectation.

What separates the leaders from the laggards will not be access to AI alone — those tools are increasingly commoditized — but how organizations integrate them into their innovation systems. Leading organizations will invest not just in AI infrastructure, but in what might be called curiosity infrastructure: the cultural, structural, and leadership practices that encourage questioning, exploration, and cross-functional collaboration. They will recognize that technology can accelerate execution, but only humans can redefine the problems worth solving.

This shift will require a redefinition of roles. Engineers, for example, will need to move beyond execution and into areas such as systems thinking, ethical judgment, and interdisciplinary collaboration. Their value will be measured not just by what they build, but by how they frame problems, challenge assumptions, and integrate diverse inputs into coherent solutions. Similarly, leaders will need to become stewards of both performance and possibility, ensuring that the drive for efficiency does not crowd out the pursuit of innovation.

Organizations that thrive will also be those that intentionally protect space for exploration. This does not mean abandoning discipline or ignoring financial realities. It means recognizing that innovation requires a portfolio approach — balancing investments in core optimization with bets on uncertain, high-potential opportunities. AI can make this balance more achievable by reducing the cost of experimentation, but only if leaders choose to reinvest those gains into discovery rather than solely into margin expansion.

Ultimately, the future of innovation in the age of AI will be defined by whether organizations treat these tools as a substitute for human thinking or as a catalyst for it. The real risk is not that AI replaces engineers — it is that organizations stop asking the kinds of questions that require engineers to think deeply, creatively, and collaboratively in the first place.

Augmentation or abdication is not a technological choice. It is a leadership choice. And in making it, organizations will determine whether this moment becomes a turning point toward a more innovative future — or a gradual slide into highly efficient irrelevance.

Frequently Asked Questions

1. Why are technology companies laying off engineers despite using AI tools?

Layoffs may result from a combination of efficiency gains and slowing innovation opportunities. AI tools like Claude and OpenAI Codex allow smaller teams to maintain or increase output, reducing the need for some roles. At the same time, some companies face fewer breakthrough projects to pursue, which can also drive workforce reductions.

2. Does AI replace human engineers or just augment their work?

AI primarily augments engineers by automating repetitive coding, debugging, and optimization tasks. This allows engineers to focus on higher-value activities such as system design, problem framing, and creative innovation. While some roles shift, AI is intended as an amplifier of human ingenuity rather than a replacement.

3. How can companies maintain innovation in the age of AI?

Companies can preserve innovation by investing in curiosity infrastructure, protecting time and space for experimentation, fostering cross-functional collaboration, and reinvesting efficiency gains into exploratory, high-potential projects. Balancing productivity with opportunity ensures that humans and AI together drive breakthroughs.

Image credits: ChatGPT

Content Authenticity Statement: The topic area, key elements to focus on, etc. were decisions made by Braden Kelley, with a little help from ChatGPT to clean up the article and add citations.
