GUEST POST from Shep Hyken

One of the reasons customers are concerned about or even scared of artificial intelligence (AI) is that it has been known to provide incorrect answers. The result is frustration and concern over whether to believe any AI-fueled technology. In my annual customer service and customer experience research, I asked more than 1,000 U.S. consumers if they had ever received wrong or incorrect information from an AI self-service technology. Fifty-one percent said yes.

No, AI is not perfect. Even though the technology continues to improve, it still makes mistakes. And my response to those who claim they won’t trust AI because of those mistakes is to ask, “Has a live customer support agent ever given you bad information?”

That question gets a surprised look, and then a smile, and then an acknowledgement, something like, “You’re right. I never thought about that.”

When AI gives bad information, I refer to that as Artificial Incompetence. It’s just as frustrating when we experience bad information from a live agent, which I call HI, or Human Incompetence. I doubt that either the AI or the human is trying to give you bad information. Actually, I know they aren’t.

I once called a customer support number to get help with what seemed like a straightforward question. I didn’t like the answer I received. It just didn’t make sense. Rather than argue, I thanked the agent, hung up, and dialed the same customer support number. A different agent answered, and I asked the same question. This time, I liked the answer. Two humans from the same company answering the same question, but with two completely different answers. And we worry about AI being inconsistent!

AI and Humans Make Mistakes

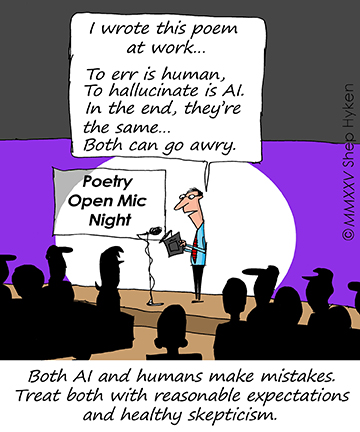

The reality is that both AI and humans make mistakes, and both will continue to do so. The difference is our expectations. We don’t expect humans to be perfect, so when they are not, we may be disappointed, maybe even angry. We may or may not forgive them, but usually, we just chalk it up to being … human. But it’s different when interacting with AI. We expect it to be reliable, and when it makes a mistake, we often assume the entire system is flawed.

Perhaps we should treat both with the same reasonable expectations and the same healthy skepticism we apply to weather forecasters, who use sophisticated technology and have years of training yet still can’t seem to get tomorrow’s forecast right half the time. Well, it seems like half the time! That doesn’t mean we won’t be checking the forecast before we plan our outdoor activities. AI, too, is sophisticated technology that can make life easier.

Image credits: Gemini, Shep Hyken

Sign up here to join 17,000+ leaders getting Human-Centered Change & Innovation Weekly delivered to their inbox every week.

Mmm. I think there is a difference. The AI can take factually correct information and spew out clearly nonsensical hallucinations. Often. The human agent won’t, 99.9% of the time. Ask an AI whether sleeping on an IKEA bed means someone may dream of Sweden, and it answers yes. That’s the price we pay for word/language prediction at a massive scale.

I have to admit, your comment made me go to ChatGPT, Grok and Gemini and ask them. All three were incredibly amused by the question, and some of their answers made me chuckle, but all of them strongly stated that sleeping in an IKEA bed won’t make you dream of Sweden. I had a rep in a store tell me factually false information just the other day, even after reading the promo details right off his iPad before speaking to me, so humans definitely hallucinate (in nonsensical ways too, on Twitter for sure) using their own faulty token predictions. Thanks for stopping by and sharing your perspective!