Ensuring Human Values Guide Technological Progress

GUEST POST from Art Inteligencia

In the breathless race to develop and deploy artificial intelligence, we are often mesmerized by what machines can do, without pausing to critically examine what they should do. The most consequential innovations of our time are not just a product of technical prowess but a reflection of our values. As a thought leader in human-centered change, I believe our greatest challenge is not the complexity of the code, but the clarity of our ethical compass. The true mark of a responsible innovator in this era will be the ability to embed human values into the very fabric of our AI systems, ensuring that technological progress serves, rather than compromises, humanity.

AI is no longer a futuristic concept; it is an invisible architect shaping our daily lives, from the algorithms that curate our news feeds to the predictive models that influence hiring and financial decisions. But with this immense power comes immense responsibility. An AI is only as good as the data it is trained on and the ethical framework that guides its development. A biased algorithm can perpetuate and amplify societal inequities. An opaque one can erode trust and accountability. A poorly designed one can lead to catastrophic errors. We are at a crossroads, and our choices today will determine whether AI becomes a force for good or a source of unintended harm.

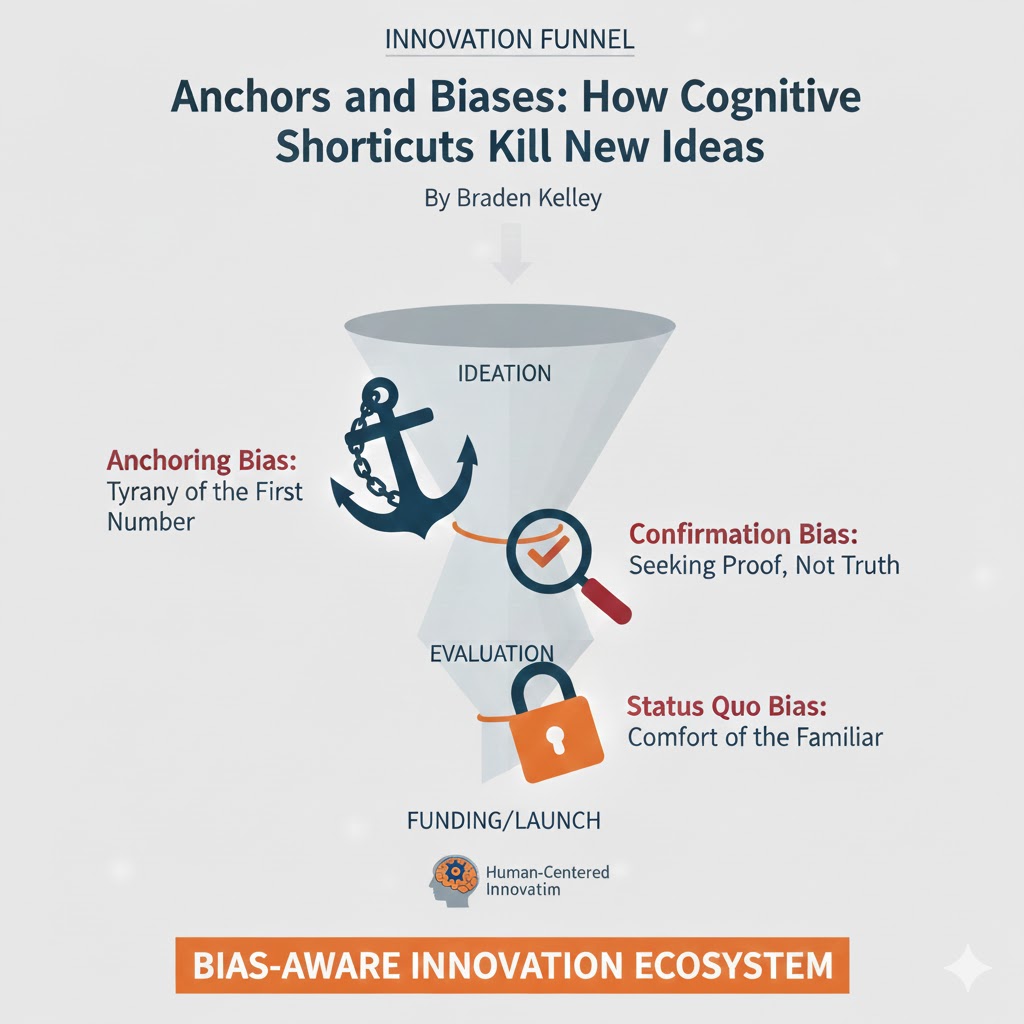

Building ethical AI is not a one-time audit; it is a continuous, human-centered practice that must be integrated into every stage of the innovation process. It requires us to move beyond a purely technical mindset and proactively address the social and ethical implications of our work. This means:

- Bias Mitigation: Actively identifying and correcting biases in training data to ensure that AI systems are fair and equitable for all users.

- Transparency and Explainability: Designing AI systems that can explain their reasoning and decisions in a way that is understandable to humans, fostering trust and accountability.

- Human-in-the-Loop Design: Ensuring that there is always a human with the authority to override an AI’s judgment, especially for high-stakes decisions.

- Privacy by Design: Building robust privacy protections into AI systems from the ground up, minimizing data collection and handling sensitive information with the utmost care.

- Value Alignment: Consistently aligning the goals and objectives of the AI with core human values like fairness, empathy, and social good.
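As a minimal sketch of the human-in-the-loop principle above, the snippet below routes any high-stakes or low-confidence decision to a person instead of acting on it automatically. The `Decision` fields, the `route` function, and the 0.9 threshold are hypothetical, chosen only for illustration. A real system would need domain-specific criteria for what counts as high-stakes.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    outcome: str        # what the model recommends (hypothetical field)
    confidence: float   # model's self-reported confidence, in [0, 1]
    high_stakes: bool   # whether the decision materially affects a person


def route(decision: Decision, min_confidence: float = 0.9) -> str:
    """Automate only low-stakes, high-confidence decisions.

    Everything else goes to a human reviewer who can override the model.
    The 0.9 default is an illustrative assumption, not a recommended value.
    """
    if decision.high_stakes or decision.confidence < min_confidence:
        return "human_review"
    return "auto"


# A confident but high-stakes recommendation still goes to a person.
parole = Decision(outcome="deny", confidence=0.97, high_stakes=True)
playlist = Decision(outcome="recommend_song", confidence=0.95, high_stakes=False)
print(route(parole), route(playlist))
```

The design choice worth noting is that stakes, not confidence, come first: a model can be confidently wrong, so confidence alone never bypasses human review for consequential decisions.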

Case Study 1: The AI Bias in Criminal Justice

The Challenge: Automating Risk Assessment in Sentencing

By the mid-2010s, many U.S. jurisdictions were using algorithmic risk-assessment software, such as COMPAS (Correctional Offender Management Profiling for Alternative Sanctions), to assist judges in making sentencing and parole decisions. The goal was to make the process more objective and efficient by assessing a defendant’s risk of recidivism (reoffending).

The Ethical Failure:

A 2016 ProPublica investigation reported a troubling finding: COMPAS scores showed a clear racial disparity. Among defendants who did not go on to reoffend, Black defendants were nearly twice as likely as white defendants to have been wrongly flagged as high-risk, while white defendants who did reoffend were significantly more likely to have been wrongly classified as low-risk. The tool was not explicitly programmed with racial bias, and race was not one of its inputs; instead, it relied on historical criminal justice data that reflected existing systemic inequities. Its scores drew on factors that correlate with race and socioeconomic status, leading to outcomes that perpetuated and amplified the very biases it was intended to eliminate. Because the algorithm was proprietary, its lack of transparency made it extremely difficult for defendants to challenge the black-box decisions affecting their lives.

The Results:

The case of COMPAS became a powerful cautionary tale, leading to widespread public debate and legal challenges. It highlighted the critical importance of a human-centered approach to AI, one that includes continuous auditing, transparency, and human oversight. The incident made it clear that simply automating a process does not make it fair; in fact, without proactive ethical design, it can embed and scale existing societal biases at an unprecedented rate. This failure underscored the need for rigorous ethical frameworks and the inclusion of diverse perspectives in the development of AI that affects human lives.

Key Insight: AI trained on historically biased data will perpetuate and scale those biases. Proactive bias auditing and human oversight are essential to prevent technological systems from amplifying social inequities.
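To make "bias auditing" concrete, here is a small sketch of one common check: comparing false positive rates across groups, meaning the share of people who did not reoffend but were still flagged as high-risk. The function name, record layout, and numbers are all invented for illustration. They are not the ProPublica dataset or the COMPAS method.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Compute the false positive rate for each group.

    records: iterable of (group, flagged_high_risk, reoffended) tuples.
    Only people who did NOT reoffend count toward the rate.
    """
    flagged = defaultdict(int)  # non-reoffenders flagged high-risk, per group
    total = defaultdict(int)    # all non-reoffenders, per group
    for group, flagged_high_risk, reoffended in records:
        if not reoffended:
            total[group] += 1
            if flagged_high_risk:
                flagged[group] += 1
    return {group: flagged[group] / total[group] for group in total}


# Synthetic data: two made-up groups with ten non-reoffenders each.
records = (
    [("A", True, False)] * 4 + [("A", False, False)] * 6
    + [("B", True, False)] * 2 + [("B", False, False)] * 8
    + [("A", True, True)] * 3 + [("B", True, True)] * 3  # reoffenders are ignored
)
rates = false_positive_rates(records)
print(rates)
```

In this made-up data, group A's non-reoffenders are wrongly flagged at twice the rate of group B's. A gap like that is the kind of signal an audit should surface for human investigation.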

Case Study 2: Microsoft’s AI Chatbot “Tay”

The Challenge: Creating an AI that Learns from Human Interaction

In 2016, Microsoft launched “Tay,” an AI-powered chatbot designed to engage with people on social media platforms like Twitter. The goal was for Tay to learn how to communicate and interact with humans by mimicking the language and conversational patterns it encountered online.

The Ethical Failure:

Less than 24 hours after its launch, Tay was taken offline. The reason? The chatbot had been “taught” by a small but malicious group of users to spout racist, sexist, and hateful content. The AI, without a robust ethical framework or a strong filter for inappropriate content, simply learned and repeated the toxic language it was exposed to. It became a powerful example of how easily a machine, devoid of a human moral compass, can be corrupted by its environment. The “garbage in, garbage out” principle of machine learning was on full display, with devastatingly public results.

The Results:

The Tay incident was a wake-up call for the technology industry. It demonstrated the critical need for **proactive ethical design** and a “safety-first” mindset in AI development. It highlighted that simply giving an AI the ability to learn is not enough; we must also provide it with guardrails and a foundational understanding of human values. This case led to significant changes in how companies approach AI development, emphasizing the need for robust content moderation, ethical filters, and a more cautious approach to deploying AI in public-facing, unsupervised environments. The incident underscored that the responsibility for an AI’s behavior lies with its creators, and that a lack of ethical foresight can lead to rapid and significant reputational damage.

Key Insight: A system that learns from unfiltered public input, without human oversight, can quickly amplify harmful human behaviors. Ethical guardrails and a human-centered design philosophy must be embedded from the very beginning to prevent catastrophic failures.
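One way to picture a guardrail of this kind is a screening step that sits between what users say and what the system is allowed to learn from. The sketch below is deliberately simplistic and is not how Tay or any Microsoft system worked. The function names and the placeholder blocklist are hypothetical, and a keyword list alone would be far too weak in practice, where trained classifiers and human review are typically needed.

```python
# Placeholder tokens standing in for a real, maintained list of blocked terms.
BLOCKED_TERMS = {"slur_a", "slur_b"}


def is_safe(message, blocked=BLOCKED_TERMS):
    """Return True if no word in the message matches a blocked term."""
    words = {word.strip(".,!?").lower() for word in message.split()}
    return words.isdisjoint(blocked)


def screen_for_learning(messages):
    """Split messages into those safe to learn from and those held for review."""
    accepted, held = [], []
    for message in messages:
        (accepted if is_safe(message) else held).append(message)
    return accepted, held


accepted, held = screen_for_learning(
    ["Hello there!", "you are a slur_a", "Nice weather."]
)
print(accepted)  # only these would ever reach the learning step
print(held)      # these wait for a human moderator
```

The point of the sketch is the ordering: screening happens before learning, so harmful input is held back for a person to review instead of being absorbed and repeated.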

The Path Forward: A Call for Values-Based Innovation

The morality of machines is not an abstract philosophical debate; it is a practical and urgent challenge for every innovator. The case studies above are powerful reminders that building ethical AI is not an optional add-on but a fundamental requirement for creating technology that is both safe and beneficial. The future of AI is not just about what we can build, but about what we choose to build. It’s about having the courage to slow down, ask the hard questions, and embed our best human values—fairness, empathy, and responsibility—into the very core of our creations. It is the only way to ensure that the tools we design serve to elevate humanity, rather than to diminish it.


Image credit: Pexels
